We Rust

Consulting gives you a lot of opportunities to talk to a lot of people and deal with interesting issues.

Recently it occurred to me that a lot of people seem to confer magic button status to a lot of things that always seem to be If-I-Could-Only-Do-This features that would solve all their problems, and similarly a Thing-That-Solved-One-Problem-Once turned into something that got used everywhere.

Go figure, right?

Let’s talk about some of them, so maybe I won’t have to talk this over with you someday, dear reader.

Partitioning

How this ended up being everyone’s top unexplored performance feature is beyond me. I always seem to hear that someone really wants to partition dbo.tblSomeBigTable because queries would be faster if they could eliminate partitions.

Maybe if you’re using clustered column store indexes it would, but for the rest of us, you’re no better off with a partitioned table than you are with a table that has decent indexing. In a lot of cases, partitioning can make things worse, or just more confusing.

Few people seem to consider the amount of work that goes into partitioning a really big table, either. It doesn’t matter if you want to do it in place, or use a batch process to copy data over.

Even fewer people talk about Partitioning for what it’s good for, which is managing partitions. Just make sure all those indexes are aligned.

Fill Factor

At this point, I’d expect everyone to understand why Flash and SSD storage is better than old spinning disks. Lack of moving parts, less pushing random I/O patterns, etc.

And yet, without a single page split being measured or compared, fill factor gets dropped down to 80 (or lower) just in case.

I call this Spinning Disk Mentality, and it hurts to see it out in the wild, especially when:

- You’re on Standard Edition

- You already have way more data than memory

- You’re intentionally making data less compact

- Your entire workload is stalled out on PAGEIOLATCH_XX waits

I truly appreciate the problem that lowering fill factor used to solve, but let’s join the CURRENT_CENTURY on this one.

Unless you have a good reason to add physical fragmentation to your indexes, how about we skip that?

In-Memory OLTP (Hekaton)

This is a hugely misunderstood feature. Everyone thinks it’s gonna make queries faster because tables will be in memory without reading the fine print.

- If you have problems with throughput on very hot data, this might be a good solution for you.

- If you’ve got a bunch of run-0f-the-mill queries that get blocked sometimes and performance generally stinks on, this isn’t really what you need to focus on.

I think the most common useful pattern I’ve seen for this feature is for “shock absorber” tables, where things like event betting, ticket sales, and online ordering all need to happen very quickly for a very small amount of data, and after the initial rush can be shuffled to regular disk-based tables.

If your table is already cached in memory when queries are hitting it, using this feature isn’t gonna make it any more in memory.

You’ve got other problems to solve.

Dirty Reads

Getting blocked sucks. It doesn’t matter if it’s in a database, at a bar, in traffic, or an artery. Everyone wants their reads instantly and they don’t wanna hear a darn word about it.

I’m not here to trample all over NOLOCK — I’ve defended people using it in the past — but I am here to ask you nicely to please reconsider dousing all your queries with it.

In many cases, READPAST is a better option, so your query can skip over locked rows rather than read a bunch of in-flight changes. This can be the wrong choice too, but it’s worth considering. It can be especially useful for modification queries that are just out looking for some work to do.

We’ll talk about my favorite option in tomorrow’s post.

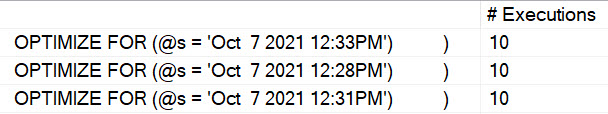

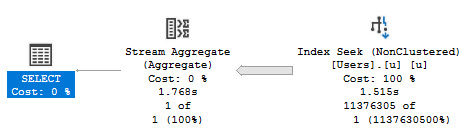

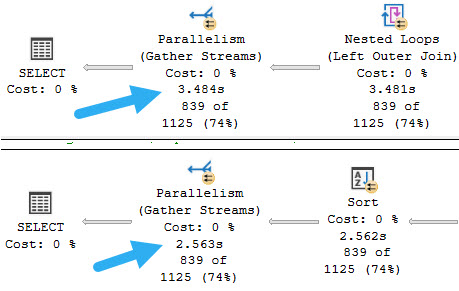

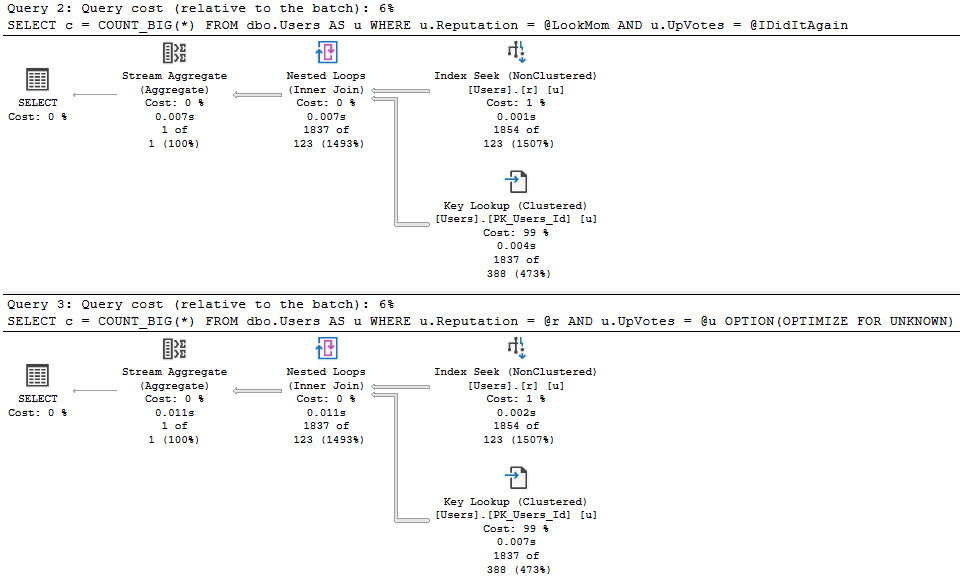

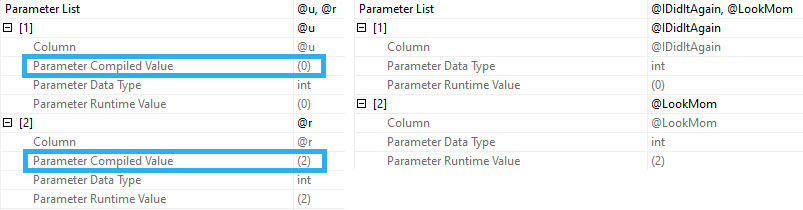

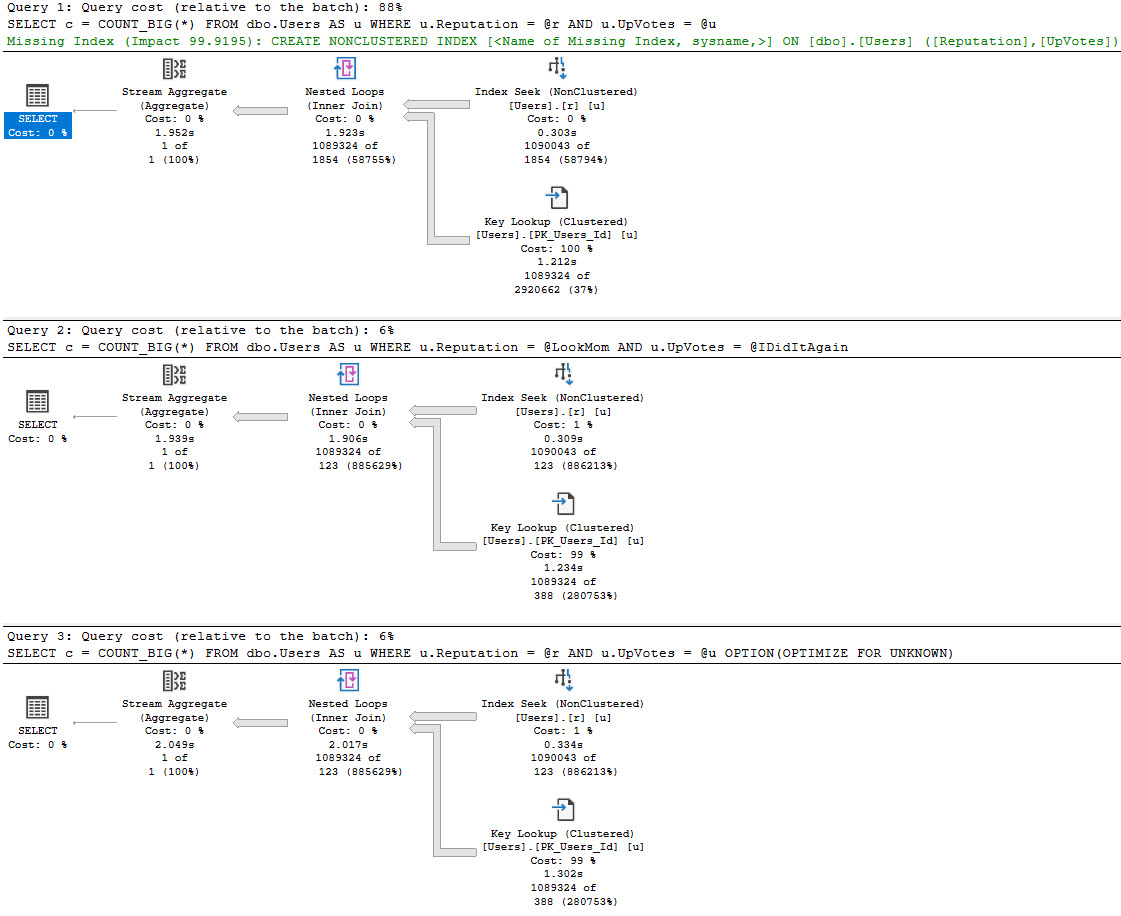

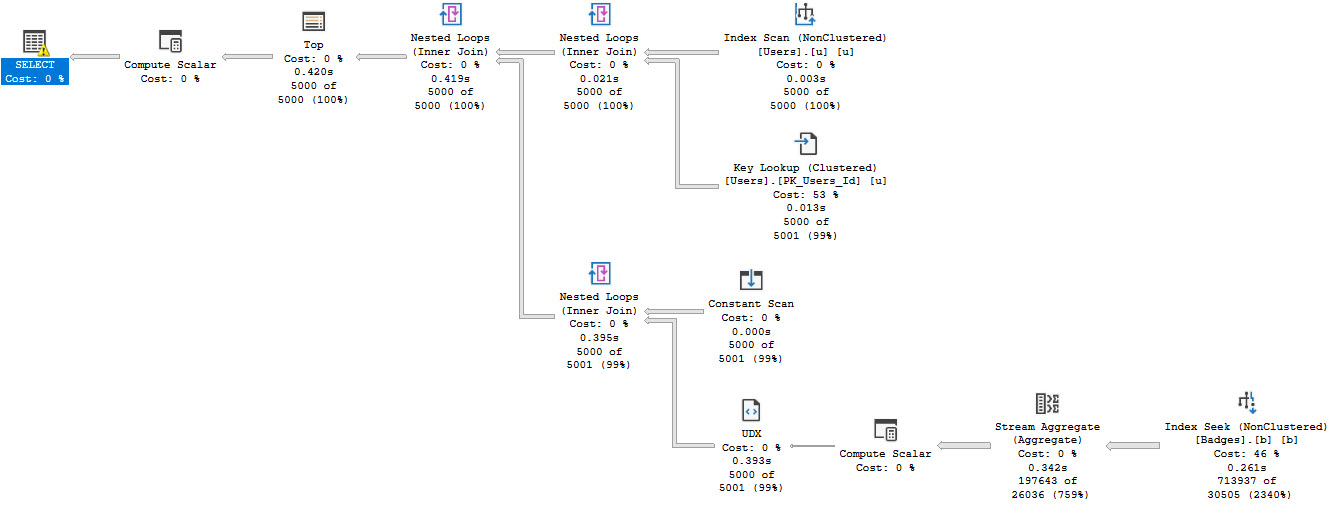

Recompiling All The Things

Look, you wanna recompile a report or something, fine. I do, too. I love doing it, because then I don’t have one less random issue to think about.

Weirdly sniffed parameter? No mas, mon ami.

Magick.

Especially in cases where bigger code changes are hard/impossible, this can be sensible, like dealing with a million local variables.

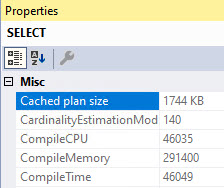

Just be really careful using it everywhere, especially in code that executes a ton. You don’t wanna spend all your time constantly coming up with query plans any more than you wanna get parameter sniffed.

Plus, since Query Store captures plans with recompile hints, you can still keep track of performance over time. This can be a great way to figure out a parameter sniffing problem, too.

Gotcha

Basic understanding often is often just camouflage for complete confusion. Often, once you dig past the documentation marketing materials, you’ll find every feature has a whole lot of drawbacks, trade-offs, blind spots, and interoperability issues.

Databases being databases, often just getting your schema to a state where you can test new features is a heroic feat.

No wonder so many millions of hours have been spent trying to replace them.

Thanks for reading!