Yo, Is That Mary?

Video Summary

In this video, I dive into the quirky behavior of indexed views in SQL Server, particularly focusing on what happens when you alter an index view. I share my personal experience with a six-year-old daughter who recently discovered that I’m on YouTube, which led to a priceless moment of realization for her. The main takeaway is that altering an index view can drop any indexes created on it, whether they are clustered or nonclustered. This can be quite surprising and potentially problematic if you’re not aware of this behavior. I walk through the process step-by-step using a simple example where creating or altering a view called “top comments” results in the disappearance of its index when altered. The video is light-hearted, filled with personal anecdotes, and aimed at making what could be a complex topic more approachable for SQL Server enthusiasts.

Full Transcript

This is gonna be one of those videos that you watch maybe because you see it on Twitter and then you’ll see it in a blog post a month later and be disappointed that it’s just a blog post with a video that you saw a month ago. But them’s the breaks when you have 30 blog posts already lined up. What do you want me to tell you? My six-year-old daughter recently discovered that I am on YouTube and the look on her face when she discovered that was priceless. Anyway, I’m here to talk, I shouldn’t introduce myself. My name’s Erik. I am, I am the janitor here at Erik Darling Data and I’m here to talk about something kind of funny that happens. with indexed views. Now, an indexed view is a pretty cool thing. It’s a, it’s a, it materializes a view. It makes that view a real, a real person like Pinocchio, what makes that view a real boy. And, I’m, it doesn’t matter if you use create or alter or create or alter here. It’s always the same thing, which I’ll, I’ll, I’ll walk through, but let’s create or alter a view called top comments. Now, if you want to create an index view, there are a whole bunch of rules that you have to follow.

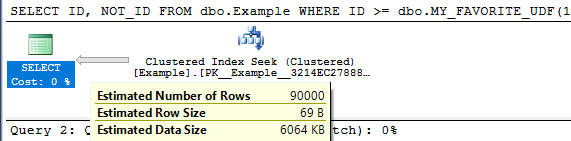

There’s far too many rules for me to get into here, but a couple, one, a couple that we need for this particular index view are schema binding, which you need for all index views and account big column if you have an aggregate. Now, you can create index views without an aggregate that don’t have account big column because that wouldn’t make any sense. But, if you’re going to aggregate, uh, data in an index view, which is kind of like the whole point of an index view, or the whole point of most index views that I see, well, this is a pretty good, well, this is something you have to do anyway. So, let’s create this view and, uh, we’ll go look at that view and object explorer. You can see all the stupid things that I create, uh, writing demos. And that one was called, uh, top comments, I believe.

And if you go look, there is no index here, right? There’s no index on this view. And if we come back over here and we execute this, we’ll have an index on that view now. And now if we refresh, oh yeah, we’ve got to go up here to refresh. I promise I know what I’m doing. If we go here, refresh, and we look at top comments, we will now have an index on that view. So, we do have an index on that view now. Watch. Pay very careful, close attention to what’s going to happen next.

I’m going to say, I’m going to get rid of this window by hitting control and R. And I’m going to create or alter my view. And let’s go back and look. Let’s refresh this whole thing again. And let’s go into top comments. And look, my index is gone. My index has been dropped.

Now, if I go create that index again, it’ll show back up in there. And if I just run this as an alter view, I realize that not everyone is on at least SQL Server 2016. What’s that, 16? Then everyone can do create or alter. But if I do alter view, and I run this, and we go back and we look at top comments by refreshing, because we’re smart people and we know what we’re doing now, my index will be gone. Fun, right? So, moral of the story, be careful when you’re altering index views.

When you alter an index view, it drops any indexes you create on it. So, it will drop the clustered index. If you create additional nonclustered indexes on your index views, it will drop those too. And, I don’t know. You might not be lucky enough to have a very easy time recreating your indexes on your views.

It might not always be as painless a process as I have here on my view on my nice 64 gig of RAM laptop. Yes. 64 gig of RAM laptop. Anyway, I’m Eric, and I, of course, am the head chef at Erik Darling Data.

Thank you for watching. Thank you. I hope you learned something. I hope you at least enjoyed me rambling. It was probably better than whatever else you were going to do for the last four and a half minutes. I mean, probably not.

Anyway, thanks for watching, and I will see you in another video, another time, another place. Goodbye. What do you think I do? Have Bert Hold Faith? I order you. I started out before you. You did it to me, whatever’s all because I have a mythOL time.

Video Summary

In this video, I dive into the quirky behavior of indexed views in SQL Server, particularly focusing on what happens when you alter an index view. I share my personal experience with a six-year-old daughter who recently discovered that I’m on YouTube, which led to a priceless moment of realization for her. The main takeaway is that altering an index view can drop any indexes created on it, whether they are clustered or nonclustered. This can be quite surprising and potentially problematic if you’re not aware of this behavior. I walk through the process step-by-step using a simple example where creating or altering a view called “top comments” results in the disappearance of its index when altered. The video is light-hearted, filled with personal anecdotes, and aimed at making what could be a complex topic more approachable for SQL Server enthusiasts.

Full Transcript

This is gonna be one of those videos that you watch maybe because you see it on Twitter and then you’ll see it in a blog post a month later and be disappointed that it’s just a blog post with a video that you saw a month ago. But them’s the breaks when you have 30 blog posts already lined up. What do you want me to tell you? My six-year-old daughter recently discovered that I am on YouTube and the look on her face when she discovered that was priceless. Anyway, I’m here to talk, I shouldn’t introduce myself. My name’s Erik. I am, I am the janitor here at Erik Darling Data and I’m here to talk about something kind of funny that happens. with indexed views. Now, an indexed view is a pretty cool thing. It’s a, it’s a, it materializes a view. It makes that view a real, a real person like Pinocchio, what makes that view a real boy. And, I’m, it doesn’t matter if you use create or alter or create or alter here. It’s always the same thing, which I’ll, I’ll, I’ll walk through, but let’s create or alter a view called top comments. Now, if you want to create an index view, there are a whole bunch of rules that you have to follow.

There’s far too many rules for me to get into here, but a couple, one, a couple that we need for this particular index view are schema binding, which you need for all index views and account big column if you have an aggregate. Now, you can create index views without an aggregate that don’t have account big column because that wouldn’t make any sense. But, if you’re going to aggregate, uh, data in an index view, which is kind of like the whole point of an index view, or the whole point of most index views that I see, well, this is a pretty good, well, this is something you have to do anyway. So, let’s create this view and, uh, we’ll go look at that view and object explorer. You can see all the stupid things that I create, uh, writing demos. And that one was called, uh, top comments, I believe.

And if you go look, there is no index here, right? There’s no index on this view. And if we come back over here and we execute this, we’ll have an index on that view now. And now if we refresh, oh yeah, we’ve got to go up here to refresh. I promise I know what I’m doing. If we go here, refresh, and we look at top comments, we will now have an index on that view. So, we do have an index on that view now. Watch. Pay very careful, close attention to what’s going to happen next.

I’m going to say, I’m going to get rid of this window by hitting control and R. And I’m going to create or alter my view. And let’s go back and look. Let’s refresh this whole thing again. And let’s go into top comments. And look, my index is gone. My index has been dropped.

Now, if I go create that index again, it’ll show back up in there. And if I just run this as an alter view, I realize that not everyone is on at least SQL Server 2016. What’s that, 16? Then everyone can do create or alter. But if I do alter view, and I run this, and we go back and we look at top comments by refreshing, because we’re smart people and we know what we’re doing now, my index will be gone. Fun, right? So, moral of the story, be careful when you’re altering index views.

When you alter an index view, it drops any indexes you create on it. So, it will drop the clustered index. If you create additional nonclustered indexes on your index views, it will drop those too. And, I don’t know. You might not be lucky enough to have a very easy time recreating your indexes on your views.

It might not always be as painless a process as I have here on my view on my nice 64 gig of RAM laptop. Yes. 64 gig of RAM laptop. Anyway, I’m Eric, and I, of course, am the head chef at Erik Darling Data.

Thank you for watching. Thank you. I hope you learned something. I hope you at least enjoyed me rambling. It was probably better than whatever else you were going to do for the last four and a half minutes. I mean, probably not.

Anyway, thanks for watching, and I will see you in another video, another time, another place. Goodbye. What do you think I do? Have Bert Hold Faith? I order you. I started out before you. You did it to me, whatever’s all because I have a mythOL time.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.