A Rears

Thanks for watching!

Video Summary

In this video, I delve into the intricacies of how data caching affects query performance in SQL Server. Starting with a 37-gig table, I demonstrate how having all necessary data cached in memory can drastically reduce execution times from 14 seconds to just half a second, showcasing the power of efficient buffer pool utilization. As we move on to larger datasets, I explore scenarios where memory limitations and inappropriate indexing strategies can lead to prolonged page IO latch wait times, emphasizing the importance of optimizing both memory allocation and index design for optimal performance.

Full Transcript

Silly, shameful, slut of a charlatan. Silly, shame… Dang! My vocal coach is not going to be happy with me. Erik Darling here with Erik Darling Data, the Darlingest Data of them all, I hear. And on this rainy afternoon, I am enjoying a glass of champagne. If I were drinking a cocktail, I would go over the ingredients with you. Well, since I’m drinking a one-ingredient cocktail, it is a Paul Lenoir composition number three, and it is a 100% Chardonnay Grand Cru of some sort.

I did my best to memorize everything on the label, but I have probably failed you as miserably as I have failed my voice training coach. And anyway, getting to the point, today’s video… Actually, this is one of two videos I’m recording today. The other one is different, but I love when one demo spawns two things I can talk about.

So stay tuned for that useless information that has no bearing on your life whatsoever, or your Saturday afternoon. Unless you’re going to watch it, which… Thanks. Thanks for watching. But this video is going to continue on with a theme that I have been talking about in blog posts and other videos lately about how queries can steal space from the buffer pool, where you store your data pages that SQL Server gives out to queries, and how important it is to make sure that you have the appropriate hardware for your workload.

Because oftentimes when I’m working with clients, they go, well, how do I know if I have the right hardware? How do I know this out of the other thing? And I’m going to show you a little bit about that, and also a little bit about how to tell things are good, bad, or ugly for you. So, what we’re going to look at first, because I have to explain a little bit about this environment, is what it looks like.

So, this server, this VM, here we go, let’s go deep. This VM has 16 virtual processors. Mm-hmm. 16 of them.

This is a laptop. I’m very impressed. And this VM has 96 gigs of memory, because the right thing to do is to multiply 96 by 1024, and you get that number. Or else you’re a metric idiot.

So, we have that. And the laptop itself, my laptop, this 17-inch thing sitting next to me working very hard, is like so. So, we have this processor in there, with eight real cores, and let’s call it eight fake cores.

Actually, I don’t know which ones are real and fake. It could go this way, too. It could have real and fake in that direction.

I don’t really know. I don’t really care. Hyper-threaded like a loser anyway. It doesn’t matter which ones are fake. Half of them are fake. And so, I guess the 16 virtual cores that the VM have are like really virtual. I don’t know.

Extra virtual. Eight of them are fake. Eight of them are wrong. Eight of them are not things. And my laptop. Again, my laptop has 128 gigs of memory. If your production SQL Server has less than this, and you are concerned about performance, boy, howdy.

I will gladly open up my darling data’s cloud to you. Just watch out when I run demos. Things get a little hairy.

And you’ll notice that I have SSDs in this thing. I don’t know why disk D is zero. It’s a brave choice, but let’s move on. If we measure the disks that I have in here, and the only one I ran was the top line, because that’s the only one that I really care about for these purposes.

You can see that I can read, and this is gigabytes of, oh, I’m sorry. I forgot to hit a button. This is gigabytes a second.

All right. Gigabytes, not megabytes. I can read data at 3.2 gigs a second. I can write data right around 2.6 gigs a second. So that’s nice.

It’s pretty sweet, right? 3.2 gigs a second. I like that. I like the sound of that. That sounds good. And, you know, when I’m working with clients and talking about sort of like the correct hardware for SQL Server, at some point someone is always going to jump up on their desk and talk, we need faster disks, damn it.

Well, technically the fastest disk out there is memory. So let’s focus on that. But most people who I talk to are not using direct attached storage. Most people who I talk to are virtualized in some way and using a SAN in some way.

And so they are not going to get this. They’re not going to get all this goodness here. They are going to get much different speeds.

And it’s not going to be the fault of their disks. When they talk about getting faster and faster disks, that’s great. But the data still has to get to those disks somehow. And it’s usually the getting to those disks that doesn’t work well.

I mean, you can make it work well, but most people don’t. And the point of this all is to say that I have very fast disks. They are undeniably fast disks.

And I want to show you two different things here. So on that 2019 server, I have two copies of the Stack Overflow database. I have a full, let’s call it a full Stack Overflow, a full stack over here.

And the full stack database, I forget, I think the last date is at the end of 2019 or so. Maybe, yeah, the end of 2019. So it’s a pretty recent copy and it’s a pretty big copy.

And then I have this other copy of Stack Overflow that ends in 2013. So the last date in here is Christmas Eve of, or New Year’s Eve. Not Christmas Eve.

New Year’s Eve of 2013. And that’s technically when the world should have ended anyway, if God still listened to me. Which I don’t know why God stopped listening to me. I give such great advice about everything else.

Getting rid of the planet Earth was right in the plan since day one, end in 2013. I don’t know why mine’s got changed. Anyway.

What we have here is a few things. And these are helper views that I use in some of my demonstrations. And I will have links to the GitHub links to these up in the YouTube description and in the blog post, hopefully, if I, as long as I remember. And so what happens here?

What we’re going to do is we’re going to look at how big this index is. We’re going to clear out memory. We’re going to get the execution plan for this count query from the post table. We’re going to look at what’s in memory afterwards.

Right? We know that since we’re clearing out memory that nothing’s going to be in there. And then we’re going to run the query again afterwards, again getting the execution plan. And we’re going to do the same.

So this is in the 2013 database. That’s that context. And then we’re going to do that again in the big Stack Overflow database. And if you’ll notice, I have this hint on the, on this, this query, because apparently the nice people who make the dynamic management views in SQL Server are not terribly good at, at designing them and performance stinks unless you tinker with things a little bit.

So Microsoft, if you would like some consultation on how to make these things faster, I am available for you. I care about your health and wellness and happiness, especially that of Joe Sack. Everyone else?

I’m kidding. I’m kidding. You’re all fine people. So let’s look at what happens here. Let’s look. So at the very beginning, right, we have this. And this tells us how big the, the clustered index on the post table is.

Because I don’t have any nonclustered indexes right now. And by gosh, I’m a terrible DBA for that, huh? So this copy, right, 2013 is about 37 gigs.

And a number that I have a lot of physical agony trying to round between 47 and 40, between 4.7 gigs and 4.8 gigs. I just don’t know where to go with it. It’s just so in the middle.

But you can see there, you decide for yourself. It is 4741.96, yada, yada, yada, megabytes. You can go to gigabytes with that any way you want.

Any way you want, baby. So we run this query. We have the execution plan for it. We look at what’s in memory afterwards, which is basically the entire table, or at least all the pages that we needed to get a count. Which is great.

The count again to make sure we didn’t cheat. We didn’t mess around here. We didn’t count fewer rows. That is the number of rows in the table if you look at the nice matching row count there. Wow, that database sure is consistent.

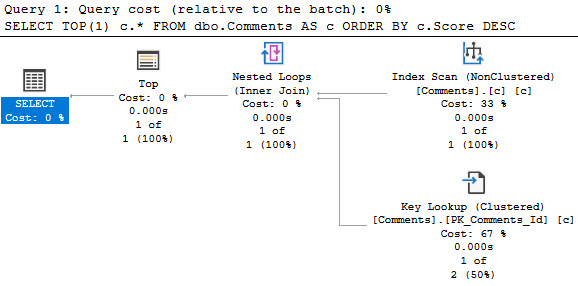

And then let’s look at the query plans. So the first time this runs, we get a query that takes about 14 seconds. That’s reading from clean Bufferville.

We had to get everything from disk. And it took about 14 seconds for us to read about 37 gigs from disk up into memory. You can see all that time spent right in here.

13.916 seconds. Ooh, wee, ooh, ah, ah. Charming, I’m sure.

And if you look at the properties over here, because we are on such a spankin’ new version of SQL Server. SQL Server 2019 probably patched up to the latest. Again, I’m a terrible DBA, so I don’t really know these things off the top of my head.

But if you look at the weight stats over here, so very important thing whenever you’re looking at query plans, especially actual execution plans, actual factual plans, is to be hitting the properties of different operators and looking at the stuff that comes up in this window.

Because all sorts of fun, interesting things show up there that just don’t show up in the tooltips. If you look at that tooltip, there’s hardly any information there. If you look at what’s in the properties pane, boy howdy.

Whoo! Whoo! If you’re data-driven, you could spend days driving around in there. So let’s look at the weight stats of this thing. And way up at the top, way, way, way up at the top, I think the rest of these, honestly, the rest of these weight stats in here are going to be completely useless, but way up at the top, we spend 10 seconds reading pages from disk into memory.

So for about 37-gig table, reading data at 3.2 gigs a second takes 10 seconds. Would you believe that? Would you believe that?

Would you believe that math? Would you believe that math to get 32 gigs of pages into memory? Well, I guess it’s a little bit worth a lot of pages.

I don’t know. Math works out. It’s there. It’s perfectly fine. Don’t worry about it. I’m sure there were other things involved. I’m sure there were other things involved. So that’s what happened there, right?

Cool. 14 seconds. And now let’s look at the execution plan for the second run when everything was already there. Quite a remarkable difference, isn’t it?

Hmm? Quite remarkable. About half a second to run that query. If you go look at the wait stats for this, we will no longer have 10 wait stats here. And on top of that, we will no longer have, well, I mean, crossing my fingers now that I’ve said it, we don’t have any weights on page IO.

I didn’t actually didn’t look at this before I ran it. Again, on top of being a terrible DBA, I’m also a terrible presenter. So just never watch anything I do.

You’ll be horrified. So we look at this and what do we have? No weights on reading pages from disk. We have some internal weights for SQL Server to do things that it has to do, but we don’t have any weights on disk anymore. Wonderful.

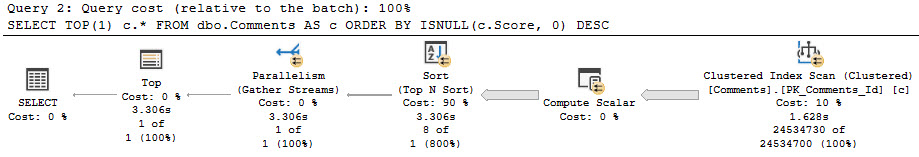

And that solved a 14 second problem for us by about 13 and a half seconds, having that data already in there. This gets worse when we have bigger data, bigger data, big, big data. What we’re going to see here is a slightly different scenario running through the exact same thing as in the other one.

We have slightly different information. This table, rather than being 37 gigs, is about 120 gigs with 22 gigs of lob data. I told you the world should have ended in 2013 and you didn’t listen to me.

And then after we read pages from disk into memory, notice now that we don’t have the entire table cached in memory anymore. We do not have that. We do not have enough space in the 96 gigs of data or 96 gigs of memory that we have assigned to this server.

We’re hobbled a little bit by the max server memory setting. If you go with the properties and we look at memory, you will see that I have about 88 gigs. Again, if you divide 90112 by 1024 because you’re a smart person, you will get back 88 gigs.

So we have 88 gigs of memory assigned to this. We read about 83 gigs of this table up into memory. I’m sure there’s other memory needs on here.

There’s other stuff going on that the TGL server needs some memory for. So we use about 83 gigs of space for the buffer pool and we have that hanging about in there. Wonderful.

Perfect. Glorious. Wonderful. Like the champagne. I’m told that I’m not allowed to drink on camera by my lawyer. I’m just going to smell it.

Mmm. That smells delicious. So let’s look at this when we have a bigger table. All right.

So we have to, when we read this one from disc, that takes 40, well, rounding this isn’t bad. This takes about 43 seconds. I’m willing to round there. I’m willing to go the extra mile for you. And if we, again, because we are very smart performance, we’re terrible DBAs, but we are very smart performance tuners.

If we go look at the properties of this. Now we look at the weight stats here. We have, oh, that’s a tough rounder. Oh, it’s so close. It’s right in the middle.

Oh, I can’t make these decisions. We now have about 32-ish seconds of page IO latch weight. So about 32-ish seconds of our lives were spent reading pages from disc up into memory. And if we go look at that second execution plan, this one’s going to be different.

Isn’t it? This one is going to have run for 34 seconds. Why?

Because we had to read stuff back into memory. We only had some of this stuff in memory. We didn’t have the right stuff in memory. If we go look at weight stats and we look at the top one, we will have spent a little bit less time reading pages from disc into memory, but we still had to read a whole bunch of pages from disc into memory, right?

So less, but still not great. If we crack that first one back open, so we go from, oh, wait, that’s the wrong one. I went back too far. Demo over.

Leave. Leave. All of you. If we go back to the source one, it’s 42 seconds versus 34 seconds. So that didn’t turn out too much better, did it? And again, this is reading data very fast. This is not slow data.

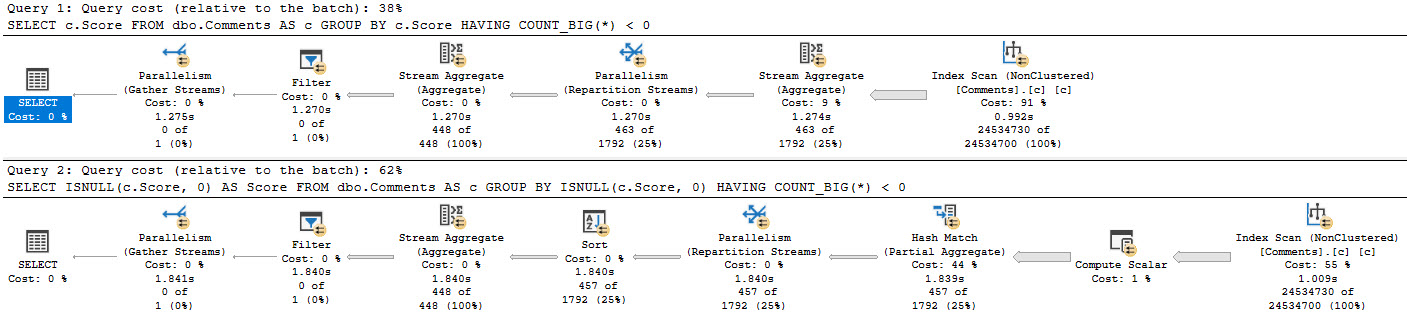

This is fast data. I like my data big. I like my data fast. I like the smell of that champagne. It’s a, it’s the, these are the few of my favorite things. And so the point here is that if you are looking at your server, if you’re looking at the weight stats on your server and you see that you are waiting a lot on page IO latch weight.

So again, we come back to these weight stats over here. If you find yourself waiting on page IO latch underscore S to the H, you most likely have a deficiency. Now, your deficiency could be in one of three areas.

You could have too little memory. That would be an obvious deficiency. You could have inappropriate indexes for your workload, either too many indexes, right? Cause too many things competing for space in the buffer pool that you have to keep reading up and flushing out and bring them back and come on again, off again and missing indexes, right?

So you could have a lack of opportune indexes for your queries. That’s another one. And you could also have queries that are battling your buffer pool for memory, for memory grants, right?

So things like sorts and hashes that require memory that will take memory away from your buffer pool. So those are three places where you could have some room to improve. Often when I look at servers, all three are true.

Often when I look at servers, they are laughably smaller than my production, my production laptop, which is again, this. Oh, wait, I should go back to the CPU graph so you can see all my fake CPUs again. So this and this, all right, that’s my laptop.

Cost me about four grand from Lenovo. It was a good sale, but you know. Put some money into your production SQL Server that runs your business.

I put some money into the production SQL Server that runs mine. It’s just what we do. Got to spend money to make money in here.

So anyway, what we talked about today, a little bit, sort of in a nutshell, is, God, I forget. Well, there was a champagne. That was good.

There was the size of my laptop, the size of the VM, the size of the two stack overflow databases, and the size, two different sizes of the post table. And how having more, and how having the data fully in memory when we needed to read it was very, very helpful. That query went from 14 or seconds down to about half a second.

But when we had a table that didn’t fully fit into memory, even reading from it again with some of the data in memory didn’t save us all that much time. We still ended up in a pretty tough spot. And we also talked about how if you see your servers waiting a lot on page IOLatch waits.

Now it could be more than .underscore sh. There are also page IOLatch underscore ex and underscore up and I think KP and KL and some other ones. But the ones that you’ll see the most often are page IOLatch sh and page IOLatch ex.

That is an exclusive page IOLatch and that is when modification queries need data. The SH is shared latches for select queries for the most part there. So that’s what you would look at.

And if you see a lot of those waits, if you like yours, if the amount of time that queries are waiting on those waits is significant, then you have some work to do. You have to look at how you have sized your server. You have to look at how you have designed your indexes.

And you have to look at how your queries are asking for memory. If you need help with that stuff, I guess that’s where someone like me comes in. But I don’t know, you’re watching this YouTube video for free.

Apparently you like free. So who knows? Anyway, thank you for watching. I hope you learned something. I’m going to take another sniff of my champagne and enjoy my Saturday. Bye.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.