Watch It

Video Summary

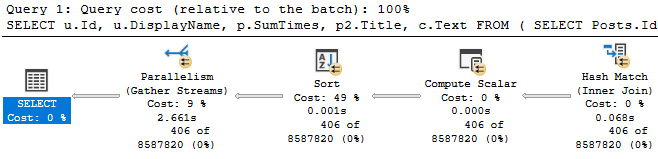

In this video, I delve into the often-overlooked impact of over-indexing on SQL Server’s transaction log. Drawing from my experience and recent blog post videos, I explore how excessive indexing can lead to issues beyond just the buffer pool and locking, specifically focusing on its effects on the transaction log. Through a detailed demonstration using the Stack Overflow database, I illustrate how different lock granularities and additional indexes influence the number of locks taken and logged in the transaction log. This is crucial for understanding the full extent of logging overhead introduced by over-indexing, especially in high-throughput transactional environments where every byte counts.

Full Transcript

Hey, hey, hey, hey, hey, uh, I mean, who knows how much longer databases are going to be a thing anyway? Uh, I wanted to talk, so I’ve done some, um, I don’t know, blog post videos, one of, one of, at least one of those things about how, uh, over-indexing, uh, can cause issues with the buffer pool, with, uh, locking, I mean, goes to say it with, with blocking as well. And, uh, I wanted to go another step in that sort of same direction and look at how, uh, over-indexing can impact the transaction log, which isn’t something that I normally care about all that much because, uh, I don’t know, I, I haven’t taken a transaction log back up since 2014 or so, so I’m not really sweating it, but, uh, for people with, uh, you know, the, the kind of workloads that depend on throughput of transactions, uh, it’s the kind of thing that you might care about, something, something that you might be interested in.

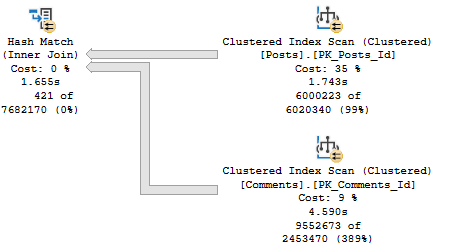

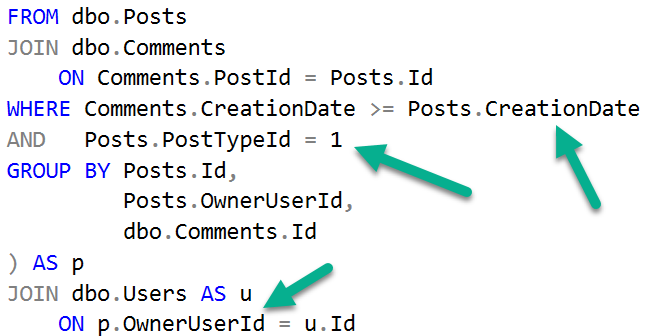

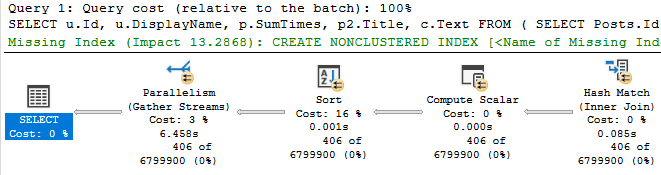

So, I put together, uh, this demo here to, uh, show you, uh, I don’t know, this, a few different things. One is how, uh, lock granularity can, uh, can impact the number of locks taken, which kind of goes without saying, but I wanted to, uh, show it, show, show you how it gets, um, how that gets, uh, uh, translated into the transaction log and also how additional indexes thrown into the mix can also, uh, impact that. So, what I’m gonna do is make sure that all my, any, any unnecessary indexes are currently dropped.

I’m gonna run a checkpoint. My database is in simple recovery, and this will be the same whether you’re in simple or full recovery. There’s a big, big, big, big, big, big, big, big misconception that if you have your database in simple recovery model, that somehow less is logged. While that’s true for some bulk operations, like, uh, minimally logged inserts, some, uh, some index rebuilds, it’s not true for regular user transactions.

Regular user stuff still has to get fully logged in order to be rolled back or forward. The only difference is that SQL Server manages the transaction log for you when things get flushed out. So, taking transaction log backups doesn’t, I mean, you can’t even do it, but it wouldn’t make a difference here.

It wouldn’t, uh, wouldn’t have any impact. But the stuff that happens still has to get fully logged in there. So, stop being goofy about that.

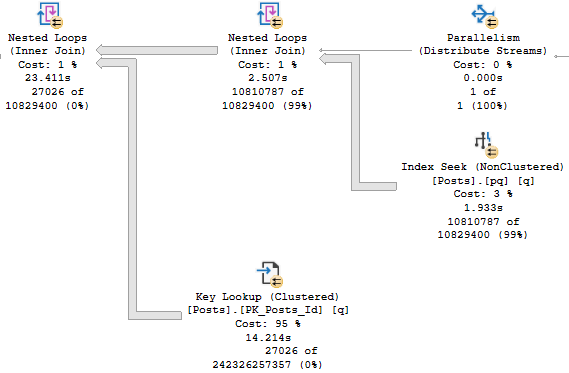

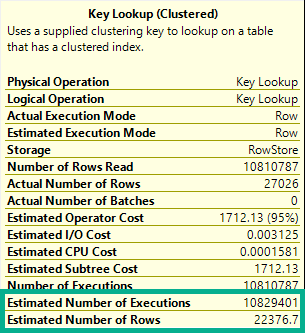

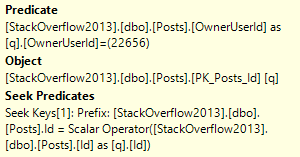

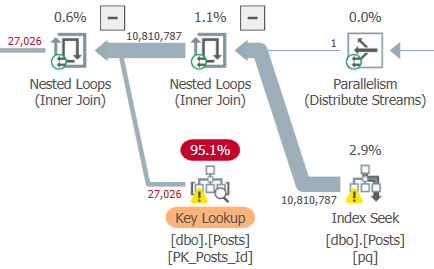

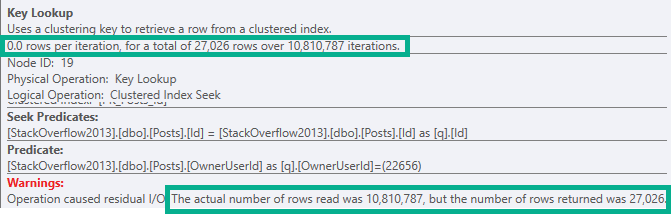

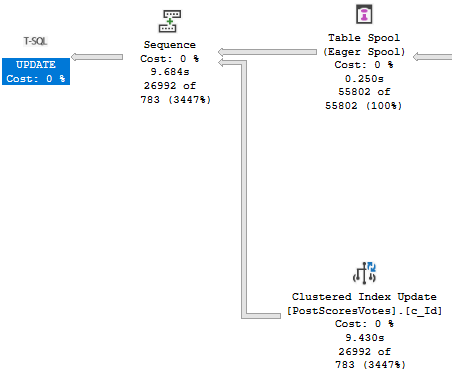

So, this is gonna run a checkpoint to make sure there’s nothing, uh, currently in the transaction log. Uh, open a transaction, um, update. Uh, this table that I have in my copy of the Stack Overflow database called Total Score by User.

Uh, and then after the update runs, which is gonna hit exactly 10,000 rows, uh, I’m going to look at the transaction log. We’re gonna take a full view of all the stuff that happened in there, and then we’re just gonna get a summary of the locking information that went in the transaction log. So, I’m gonna show you a few different things with lock granularity, and then a few, a couple different, uh, well, I guess what happens when we, when we start adding indexes, how those also get logged.

So, let’s run this, and then that runs pretty quickly, and we can see, ooh, goodness me, excuse me. Don’t know where that came from. I apologize.

I, that was, that was rather gross. Um, so, what we’ll see is for every row that ends up in here, all right, we can see all these different things happening. Well, we can see all these different locks ending taken.

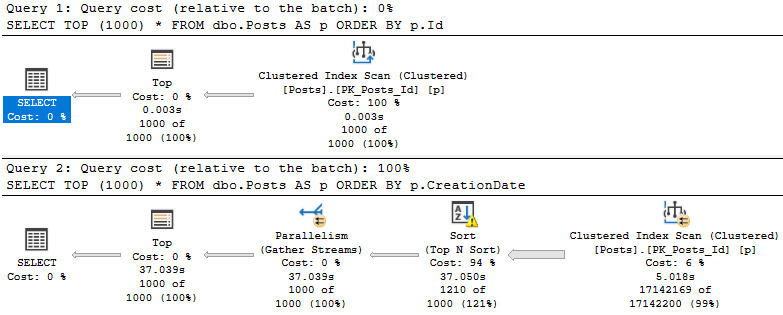

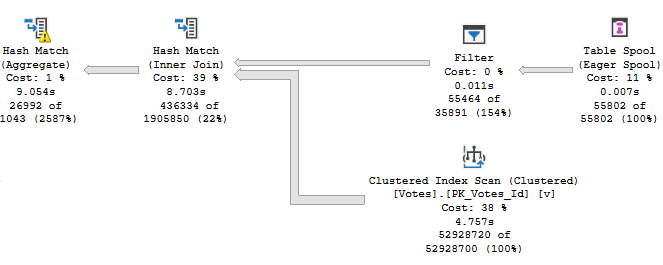

Uh, we get a, uh, three locks, uh, for each row doodaddy thing, and, uh, that’s fun, right? That’s interesting. And then if we switch this to be a page lock end, what happens?

Uh, that goes down to two, and we get sort of a, a more round number of locks. And if we switch this over to be a table lock, uh, we still get 10,000 records. So, notice that there were 10,000 records, whether we took a row, page, or, uh, or, uh, object lock, right?

Every single one gave 10,000 records here, but the number of locks that get, that shows up here will change with each one, right? Because we took, well, like, a lot more, uh, individual locks for row or page level versus the object level, right? Cool.

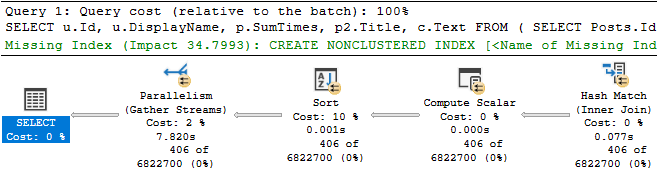

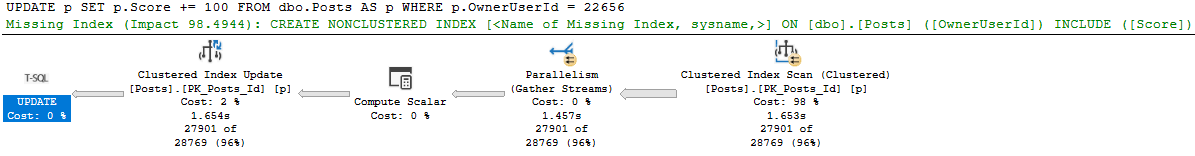

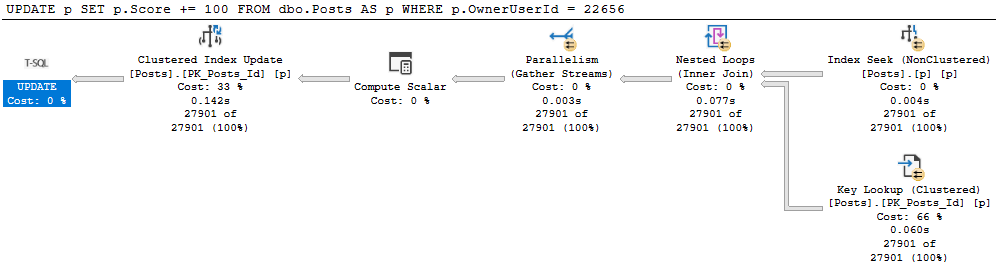

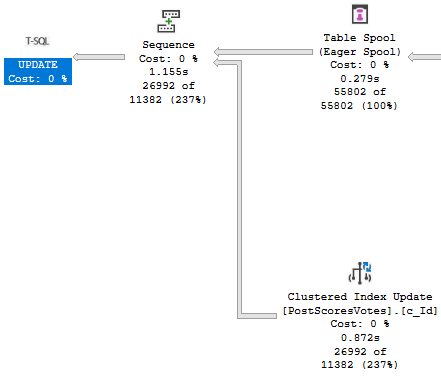

Perfect. So, uh, to keep things a little bit more simple, I’m going to leave the tab lock hint in place here. And then I’m going to add a couple indexes to the table, all right? I’m going to add these.

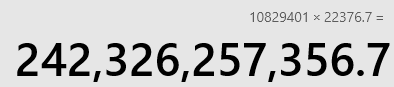

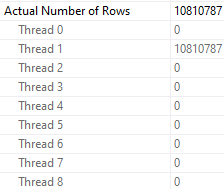

Do, do, do. All right. Those are on there. And now I’m going to rerun this. And it took a little bit longer. And we have a little bit more information in here.

So now we’re going to see, um, each of our indexes ended up in here, right? And we can see the number of locks that got taken on each of them. We can see the primary key was also logged in here.

And we can see the, uh, number of locks and the number of records. Now, if I switch this to be row lock or something, right? We would see more in there because you would be taking more individual locks. Now this runs and this goes, and now we have slightly higher numbers in here.

So that’s fun, I guess. Anyway, uh, a lot of people don’t realize, um, how much, uh, locking stuff gets stuck in the transaction log. And beyond that, a lot of people also don’t realize that all the changes to indexes that, uh, also get logged in there.

Each individual index is, you know, I know I’ve said this before, a separate structure on disk and memory. You have to back it up, check it for corruption, all that stuff. Like everything you have to, like every different index is something that you have to, uh, account for.

So, if you have very, very busy tables with, uh, with like, you know, modifications to data. I mean, I know no one deletes data. I’ve, I’ve seen your tables.

No one has ever deleted data from them. Everything is inserted in and then maybe updated at some other point. But if you have very busy tables, very busy transactional tables, uh, the more indexes you have on them, the more you are logging as data goes into those tables and gets modified. Now, uh, this is, uh, something that, you know, um, also, uh, tends to harm people who design very wide tables, who don’t do a good job of normalizing tables.

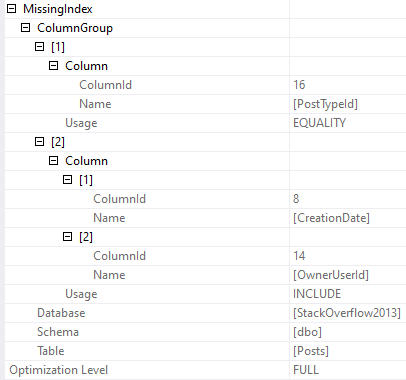

Because, uh, you have tables within tables and all of those tables that people want to, uh, all those, uh, different, like groups of columns that people tend to want to, um, to pull data from in sort of their own little chunks. Uh, you know, the, you’ll have lots of wacky missing index requests. You’ll end up with, um, you know, lots and lots more indexes because there are lots of different, lots more different ways, lots of different columns to filter the table, to, you know, select from the table.

So it just kind of, uh, exacerbates the issue. Anyway, I think that was it. I, I ran out of things to say.

So I’m going to, I’m going to turn this thing off. Thank you for watching.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.