You know, work

Video Summary

In this video, I dive into a fascinating case study where using `NOT EXISTS` instead of a `LEFT JOIN` led to significant performance issues for a client query. I walk through the process of reproducing and optimizing the problematic SQL queries using the Stack Overflow database as an example. The key takeaway is how row goals can mislead the optimizer, leading to inefficient execution plans. I also highlight why a seemingly selective index request was actually counterproductive due to the use of `COALESCE` on a non-nullable column, demonstrating that `ISNULL` would have been a better choice in this scenario. By sharing these insights, I hope to help you avoid similar pitfalls and optimize your queries more effectively.

Full Transcript

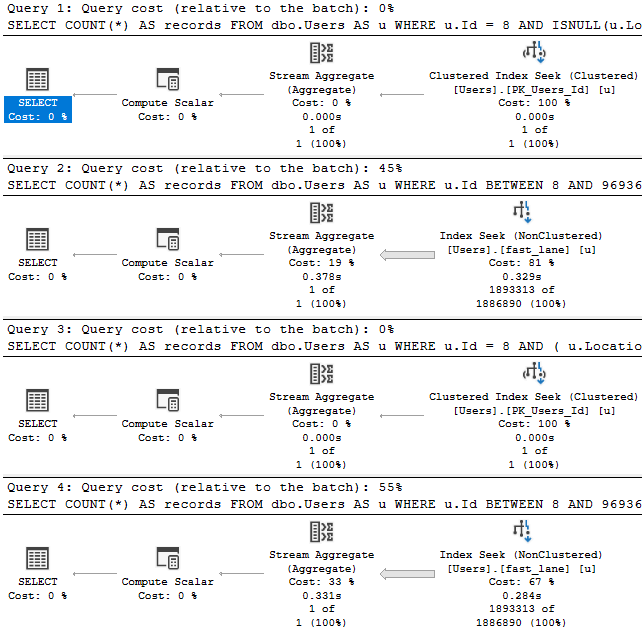

Yep. So, good morning. Good afternoon and good evening. Thank you, thank you, thank you. You’re far too kind. Erik Darling here with Erik Darling Data. Still, I have managed to not fire myself for almost an entire year. The one thing that made me come really close is that I just I just deleted 60 gigs of unusable video recordings. And I know that’s probably hard for you to believe because you may have seen my other videos and thought to yourself, is there anything that he won’t publish? And here we are. Yes. The answer is yes. There was nearly 60 gigs of unusable video footage. So there’s that. But I wanted to record this because I thought it was kind of an interesting process where I had to record this. I had a client and say, we need you to help us with this one query. And normally, that’s not the kind of thing that people want me to do. I’m not like a tune this one query kind of guy. They’re like, we have a lot of problems and we need a lot of things tuned. And that’s kind of more my deal. But these nice people had one big problem. And they said, we need kind of embarrassing. Is that, they read a post on my site. And they tried something. And it actually detuned the query. The query got slower. When they tried the thing that I said they should do. So that was personally a little bit embarrassing. Now, the thing that they tried that didn’t go well was using not exists instead of a left join with the is not know the figure out where rows didn’t already exist in a table. This was data that was part of an ETL process where data was being moved from a staging table into a real table. We don’t need to do an insert or anything to mimic the problem. I just need to run two queries that are written kind of similar. And I was able to reproduce a lot of the behavior and simplified queries using the Stack Overflow database. When we look at these two query plans, you can see that even though they have the same logic and the same, the same, they return the same results over here, same results, right? Same results up and down, 10 rows, 10 rows, everything the same, good. Even though they do that, they get two very different query plans.

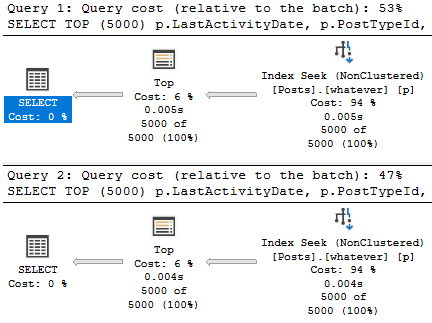

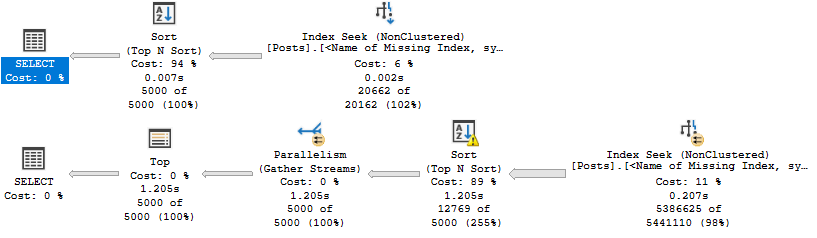

So you can see the top query plan. So you can see the top query plan is just god awful for this scenario. And I’ll talk a little bit about why. So what’s happened here is with the top 10 in the not exists over here. SQL Server has identified an optimization opportunity to use a row goal in the query. And what that row goal does is it significantly cheapens some operations. The optimizer wagers that some things will be very, very cheap to do. It’ll be very, very easy to find a few, like a very small number of roads very quickly.

Now, this is not only a good demo for when a row goal is quite counterproductive, but it’s also a very good demo to show you that operator costs and execution plan costing can be very, very, very wrong. If we look at this execution plan up top and we zoom in a little bit, we can see the costs that the optimizer has assigned to certain things. If we look over here, 34%. And if we look over here, 61%. So we have 95% of the query cost up here. And that’s a pretty good chunk.

But when we look at the times that these things ran for, 179 milliseconds. Now, in row mode execution plans, these operator times are cumulative going from right to left. So the sort ran for 406 milliseconds minus 179 milliseconds, whatever that is. But you can see that these are very high cost operations in the plan. So the second one is, if we look at the execution plan, we spend almost no time in them. If we go look at where we did spend time, we have an operator that cost 4% and an operator that costs 6%.

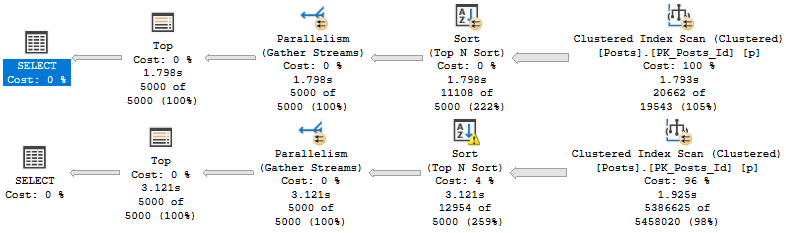

But this is where we spent 45 seconds of execution time that is hanging out in here. Now, this is a, I mean, I don’t want to say common anti-pattern, but it is sort of a known anti-pattern where if you have, you have an anti-semi join up over here, oops, that’s the wrong button. Let’s do control instead. If you have an anti-semi join up over here. And SQL Server has chosen, SQL Server has a top operator in here. And then you have a scan underneath that top.

What I think Paul White once called a useless top and Andy Malin once giggled about. But if you have a nested loops join and a useless top and a clustered index scan under the useless top, you most likely are facing this particular anti-pattern. What’s going on is that the SQL Server has chosen the loop join. So it’s chosen to take some rows from over here and for each row that comes out of there, loop down over here and then execute this.

Because it thinks that it won’t have to do that too much in order to figure out what exists or doesn’t exist in that other table. It thinks that it can do that relatively quickly, easily, and cheaply just by, you know, scanning in and grabbing some rows. But it ends up, that ends up not being the case. That ends up being a pretty significant burden.

That does not happen in the bottom query. In the bottom query, we just do one big scan and one big scan and one big join. And then we filter out rows that we’re not interested in after the join. Now, this particular pattern is the one that I talked about in my blog post that I thought was a generally bad one for these queries.

But in this case, this actually turns out a lot better. It turns out a lot better than that Rogel plan, which this one only runs for about two and a half seconds, which is pretty good, right? Pretty good. Now, if you want to learn a whole lot more about Rogels, if you want to get into the article where Paul White identifies a useless top, that’s in the third part. But it’s a four-part series. It’s an exhaustive, not exhausting, four-part series.

I mean, you might be exhausted when you’re done with it, but that’s your problem. Deal with it, I guess. But if you want to learn more about that, there’s a four-part series over on sqlperformance.com.

I’ll let you figure out how to get to these four things. But there it is for you if you ever need it. Now, we could avoid those problems with one hint or another with our original query.

So we could either tell SQL Server to use a hash join or to disable optimizer Rogels using a newer hint in SQL Server. I forget which version, but I think 2016 maybe. But if we do either one of those things, we tell SQL Server what kind of join to use or we tell SQL Server to not use a rule goal with this plan, we get queries that run relatively quickly, right?

About two and a half seconds, which is just about what that left join with the filter ran in. So not a whole lot of difference there. But there is something, there is some, like, further stuff we could do to get this query to maybe be faster. Now, the first thing I want to figure out is what’s up with that missing index request.

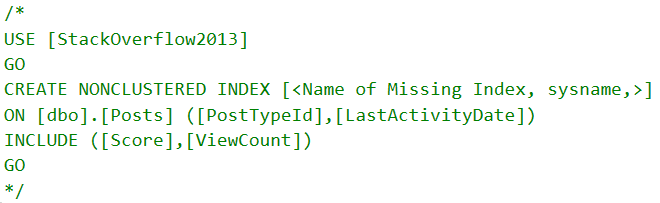

So the missing index request that SQL Server is asking for in both of these plans is terrible. And I’m going to start creating this index because it takes a little bit and then talk a little bit about why that’s happening. Now, in our queries, so long story short is that this coalesce up here.

I don’t need all that space, do I? It’s a bit daft looking. There we go. Just the one’s nice. But see, this coalesce was left over from some defensive mechanism when there was a left join and everything else, where someone was worried that they were going to rule out rows that they should keep in.

So they had the column wrapped in a coalesce. The same thing would have happened with isno, but we’ll get into that in a little bit more detail later. But the missing index request that came up was on vote type ID that include user ID and creation date.

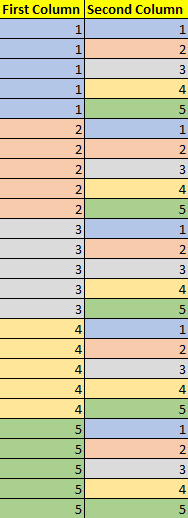

And that is a really goofy index specifically for this query. If we look at the way that data, or if we look at how much data those predicates would rule out, like filtering them in the where clause, that select query that is just for the creation date column, it goes out a lot more data.

In other words, this is a short way of saying that. That filters down to about a million and a half rows, whereas the equality predicate for vote type ID in 123 only gets, I mean, only down to 44 and a half million rows.

That is not terribly selective on its own. But with the creation date predicate in there as well, it is much more selectiver. But yeah, so if we create this index, which I’ve changed this index, so this was not a very helpful index.

But if we have an index on vote type ID and then creation date, you are more than welcome to experiment creating them with the key column in the opposite order. The same thing happens.

I just think that some of the information in the query plan when vote type ID is first is a little more interesting. So let’s go and look and see if that index helped. I’ll just start running this, which is the exact same query as before.

There’s no hints or anything. There’s nothing weird going on. And the short story is that no, it’s not going to help. And it’s not going to help for what I think is a fairly interesting reason.

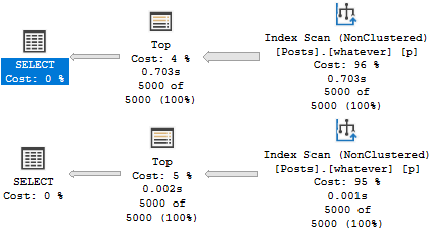

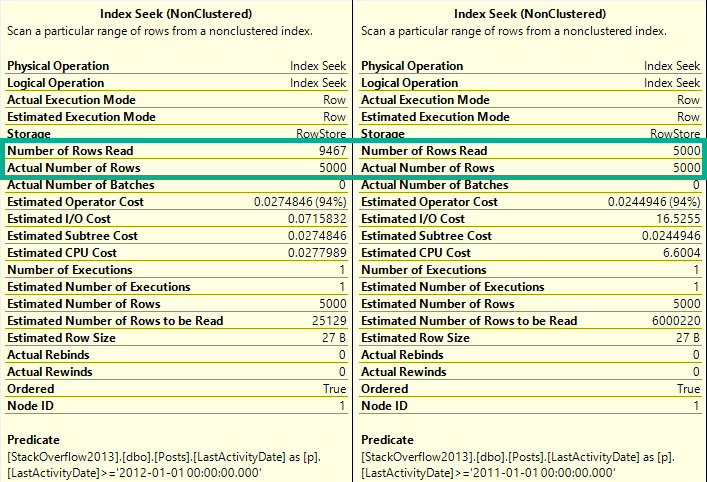

I’m going to get the estimated plan. Right? We’re going to see the exact same pattern here. And we think that we might be safe because now we have a top and then an index seek. So a top with a seek is much more efficient than a top and a scan.

Because that top with the scan is going to read through until it finds a row. And we might have to do that full scan a whole lot of times. A top with a seek is much cheaper.

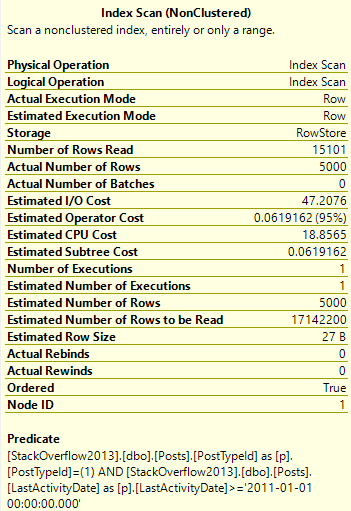

However, it’s not free and it’s not perfect, but it’s a lot cheaper. The problem that we’re running into is behind the seek, well, we have at least seek predicates for vote type ID 123. So we’re able to seek to the 44.5 million rows that we care about.

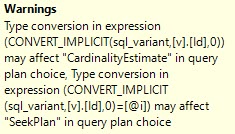

But then we have this big honking thing up here. This is internally what SQL Server does with Coalesce. It turns it into a case expression internally.

And it will do this the more columns and values and variables you throw in, the more it will stack that case expression up. But this is what the optimizer does internally. And this is where we run into trouble.

So even with a much better index, this query is still not out of the woods. And that’s because there’s something weird about Coalesce. And this is something that I blogged about a long time ago over on Brent’s site.

But it’s something that came up in reality with a client. Now, if we look at the creation date column on the votes table, it is defined as not nullable. Right?

We have, we cannot have a null value in there. It was left over, again, like I said, it was a defense mechanism from some developer who was right about nulls and ruling out rows when this was a left join query. So it was still in there from that.

And what’s funny is that if you look inside the plan XML, I’m not going to go digging into plan XML, but you’ll see this case expression in there. It Coalesce does not pick up on the fact that the creation date column isn’t nullable. It does not allow any nulls.

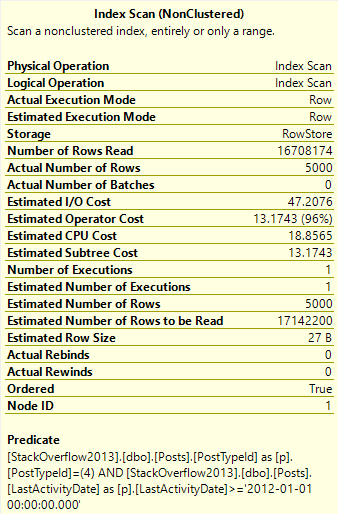

Coalesce will not do that for you. Isnull will. Isnull is a little bit less weird. And if we were to go look in the query plan XML, then we would see this. And just by changing the query to use isnull rather than coalesce.

And I’m not saying this is a good practice, but by changing that part of the query, we get a much, much faster execution plan. And I want to point out here that because isnull is not evaluated, because isnull has some special magic power that looked at the creation date column and said, you’re not nullable, we don’t need isnull anyway.

All right? Because of that, we have full seek predicates here. We don’t have a residual predicate on creation date. We only have it on user ID, user ID, user ID.

So we can see the dates in here. All right? So for each one of those seeks to invoke type ID of one, two, or three, we have an additional seek for the creation dates that we care about. But of course, knowing full well that that column isn’t nullable and that we don’t have to have any sort of null defense mechanisms, we can just write the query without that function on the column, and we can get an improved query.

So this is down to about one and a half seconds from about two and a half seconds. Maybe not the biggest one in the world. In real life, there was a lot more going on.

The reduction in time was a lot greater, but this is just a fairly good demo to show you that with a few small changes, you can have a pretty big impact on things. So what did we learn?

I don’t know. I don’t really. I have no idea. I just thought it was interesting. The row goals can kind of give the optimizer a false sense of confidence. When we had that row goal query up at the top, there was no missing index request.

SQL Server said, I’m fine, I’m fine, I’m fine. The costs were all screwy. And the query ran forever. Wasn’t good.

We had a missing index request when we ran the query a little bit differently, but it was not really an ideal index request because we had that creation date column wrapped in a function. And so SQL Server looked at it and said, I can’t really seek to you anyway.

I’m going to demote you to be an included column. And that really wouldn’t have been terribly helpful for our query because even though, as an included column, we couldn’t really seek efficiently to those 1.4 million rows.

It was a very selective predicate. We also learned that coalesce does not short circuit for non-nullable columns. So if you have a column that can’t be null and you have it in your query and you say, and you wrap it in coalesce, the coalesce will hang out.

Isnull will look at that column and say, you’re not nullable. This won’t make a difference anyway. And it will short circuit.

It won’t help you if the column isn’t nullable. And you really shouldn’t be off writing queries, wrapping columns and functions like that because you set a bad example for other people. There are a million better ways to write a query that don’t involve wrapping columns and functions.

I know that’s kind of an ivory tower way to put it. And I’ve never been ashamed to polish my own ivory. But it’s just, you know, if you can avoid it, you should.

Anyway, I guess that was the point there. One should avoid these things. They should be avoided.

Anyway, I am exhausted after that. It’s the longest 17 minutes of my life. I’m going to go drink coffee and pray for food, beg for food. Something.

I don’t know. Anyway, thank you. Thank you for watching. I hope you enjoyed yourself. I hope you learned something. And gosh darn it, I will see you in the next video. Goodbye.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.