Jerked

Everyone thinks they’ve outsmarted the optimizer. All the time.

Like it’s a bumbling video game security guard that walks in the same circle and can’t see you if you just hold real still.

In reality, the optimizer is more like a dutiful parent playing along with your childish ruses.

One thing I see developers do quite a bit is try to “fix” a parameter in an IF branch.

Maybe it’s to protect against bad search values, but more often it’s to nix NULLs.

I know that the stored procedure I’m showing only has one branch in it where a query is executed, and the series is supposed to be about if branching with multiple queries.

I’m only doing that to simplify the point of this post, which is that “fixing” supplied values does not correct performance and cardinality estimation issues with queries in IF branches.

Sometimes that’s easier to demonstrate without additional noise.

The Thing

Here’s close to what I normally see someone trying:

CREATE OR ALTER PROCEDURE

dbo.counter_if

(

@PostTypeId int = NULL,

@CreationDate datetime = NULL

)

AS

SET NOCOUNT, XACT_ABORT ON;

BEGIN

IF @CreationDate IS NULL

BEGIN

SET @CreationDate = '20080101';

END;

IF @PostTypeId IS NOT NULL

BEGIN

SELECT

c = COUNT_BIG(*)

FROM dbo.Posts AS p

JOIN dbo.Users AS u

ON p.OwnerUserId = u.Id

WHERE p.PostTypeId = @PostTypeId

AND p.CreationDate >= @CreationDate;

END;

END;

GO

The problem here is that by the time we hit the point where @CreationDate gets set to another value, we’ve already got a query plan.

You might get a search for the value you assign there, but the plan gets optimized for NULL.

Puddings

If you execute the procedure like so, and get the query plan for it, here’s what happens:

EXEC dbo.counter_if

@PostTypeId = 2;

We get a real bad cardinality estimate there, and I’ll show you that it’s because of the NULL we passed in, even though we set it to 2008-1-01-01 later.

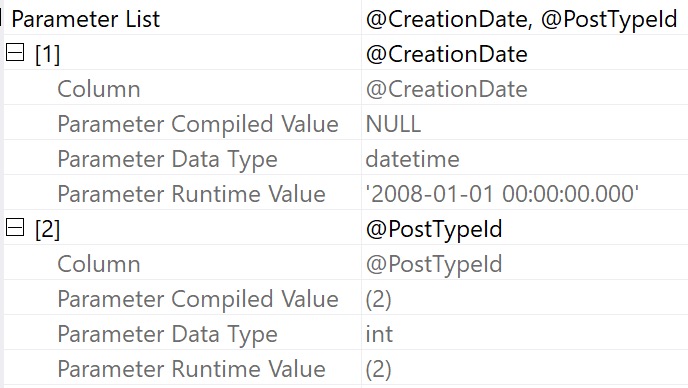

Digging into the operator properties of the select, here’s what the execution plan shows us about the parameters:

- @PostTypeId is compiled and executed with 2 for both

- @CreationDate is compiled with NULL, but executed with 2008-01-01 00:00:00.000

Different World

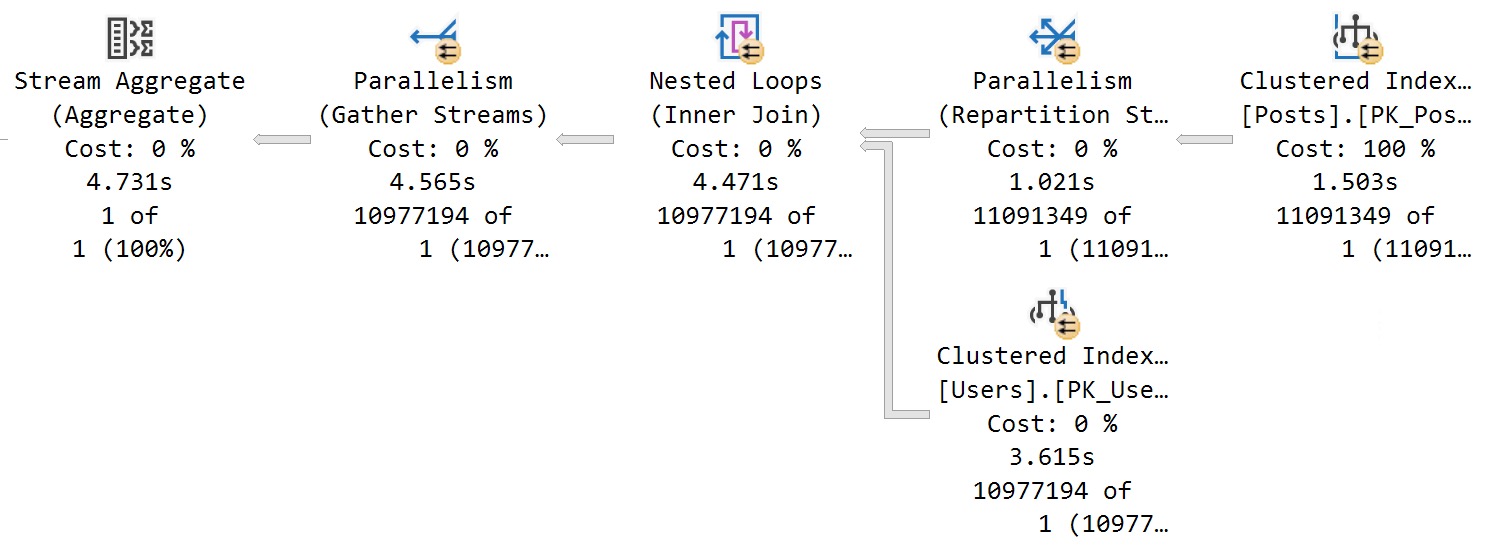

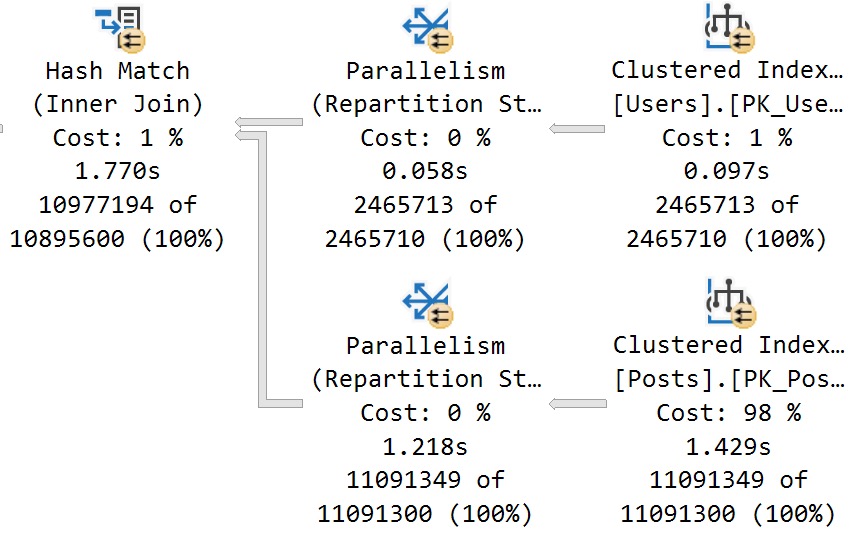

If we clear out the procedure cache — and I’m allowed to do that because I am a doctor (in Minecraft) — and re-run the procedure with 2008-01-01, we get accurate cardinality estimation.

EXEC dbo.counter_if

@PostTypeId = 2,

@CreationDate = '20080101';

We no longer get a one row estimate. Look at us. Look at how smart we are.

I’m starting to understand why so many people hate NULLs.

But Is It Null?

For brevity, I’m going to list out a bunch of similar patterns that also end up poorly:

SELECT

c = COUNT_BIG(*)

FROM dbo.Posts AS p

JOIN dbo.Users AS u

ON p.OwnerUserId = u.Id

WHERE p.PostTypeId = @PostTypeId

AND p.CreationDate >= ISNULL(@CreationDate, '20080101')

AND p.CreationDate >= COALESCE(@CreationDate, '20080101')

AND (p.CreationDate >= @CreationDate OR @CreationDate IS NULL)

AND p.CreationDate >= CASE WHEN @CreationDate IS NULL THEN p.CreationDate ELSE @CreationDate END

None of these patterns or similar permutations yield desirable results in most cases.

You may find an edge case where they’re acceptable, but most folks I end up talking to aren’t calling me because what they’ve done is working out well.

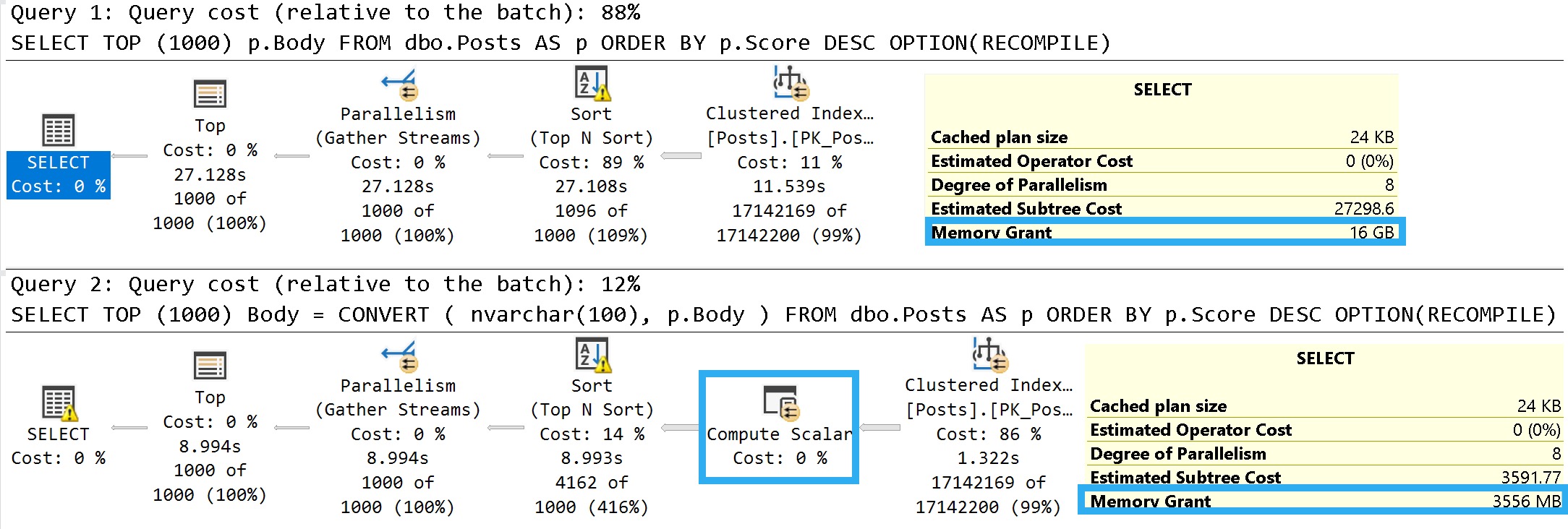

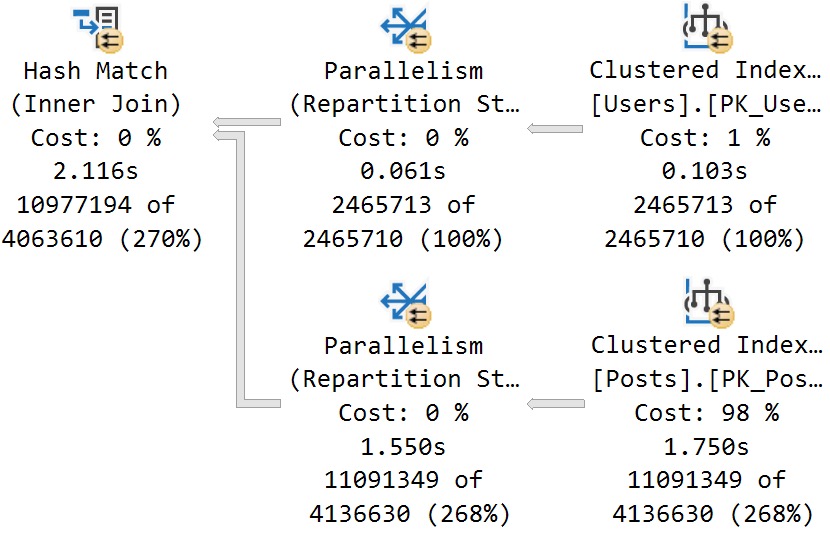

More or less, they all results in this estimate/plan:

See? You’re still not clever, and I still got your nose. Go play outside, slugger.

S Dot

Hopefully by now you can see why this technique doesn’t necessarily give you good results.

In tomorrow’s post, we’ll look at another anti-pattern I see a lot with local variables.

If you’re looking for working solutions, you’re gonna have to hang on until the end of the week.

That’s just how culminations work. They Culm and then they Inate.

Duh.

Thanks for reading!

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.