GETDATE() always uses the UTC time zone on Azure SQL Database which can be a compatibility issue for applications that assume that GETDATE() is using a different time zone. Developers may wish to replace GETDATE() with code that continues to use their expected time zone to avoid UTC time reforms. For example, I’ve seen application code with over 50,000 references to the GETDATE function. Replacing all of those calls with GETUTCDATE() would be a herculean effort.

FAST 1

I don’t know how good Erik’s SEO is, but here’s a simple replacement if you don’t care about performance and just need something that gives you the previous behavior of GETDATE():

CREATE OR ALTER FUNCTION dbo.[GetDateNew]()

RETURNS DATETIME

WITH SCHEMABINDING

AS

BEGIN

RETURN SYSDATETIMEOFFSET() AT TIME ZONE N'Central Standard Time';

END;

GO

Keep reading if you’d like to do better than that.

AT TIME ZONE At Risk

AT TIME ZONE was a great addition to SQL Server 2016 that allowed for the retirement of many well-meaning but poorly implemented attempts to do time zone conversions in T-SQL. However, it was not without its own problems:

- The CPU cost per execution was surprisingly high, but this was addressed in SQL Server 2022 and in Azure SQL Database.

- AT TIME ZONE makes scalar UDFs ineligible for inlining.

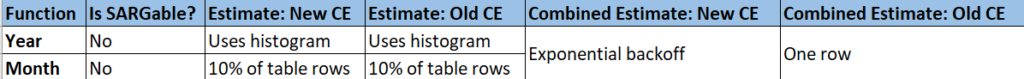

- AT TIME ZONE usage results in an “unknown” cardinality estimate.

You can see the cardinality estimate issue in action by populating a simple table with about 6 million rows.

DROP TABLE IF EXISTS dbo.Level100;

CREATE TABLE dbo.Level100 (

TenantID INT NOT NULL,

InsertTime DATETIME NOT NULL

);

INSERT INTO dbo.Level100 (TenantID, InsertTime)

SELECT q.RN % 4, DATEADD(MINUTE, -1 * RN, GETDATE())

FROM

(

SELECT ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) RN

FROM master..spt_values t1

CROSS JOIN master..spt_values t2

) q;

CREATE INDEX TenantID_InsertTime ON dbo.Level100 (TenantID) INCLUDE (InsertTime);

CREATE INDEX InsertTime_TenantID ON dbo.Level100 (InsertTime) INCLUDE (TenantID);

The first query uses GETDATE():

SELECT COUNT(*) FROM dbo.Level100 l WHERE l.TenantID = 2 AND l.InsertTime > DATEADD(MINUTE, -10, GETDATE()) OPTION (MAXDOP 1);

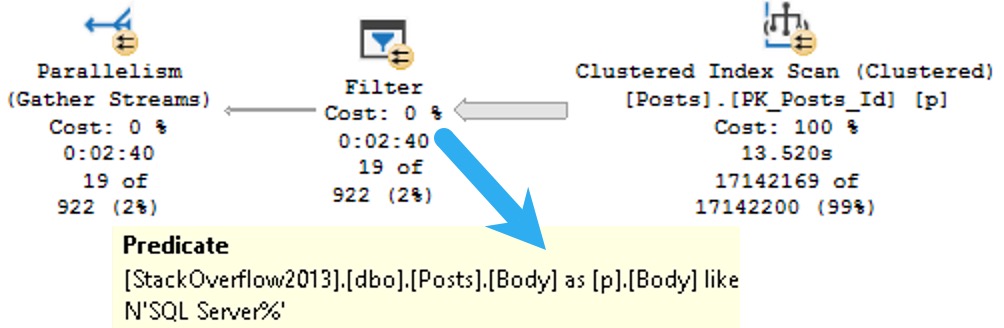

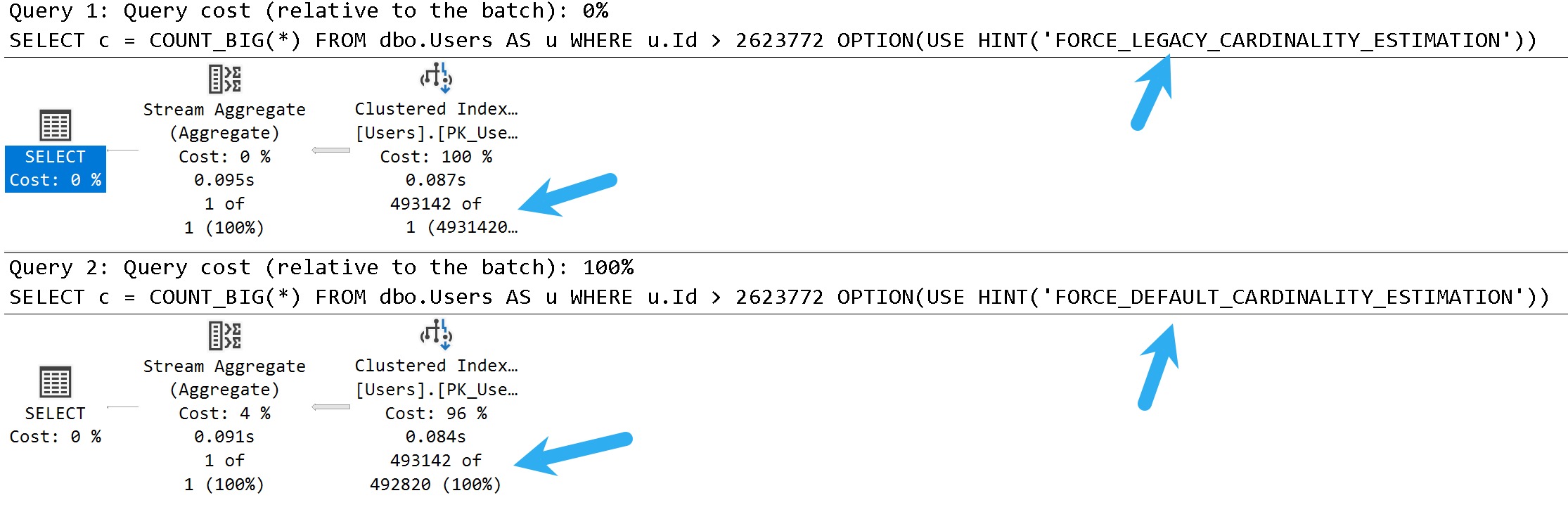

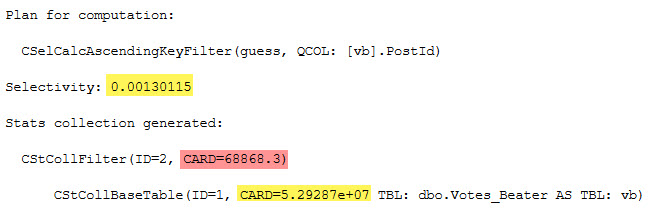

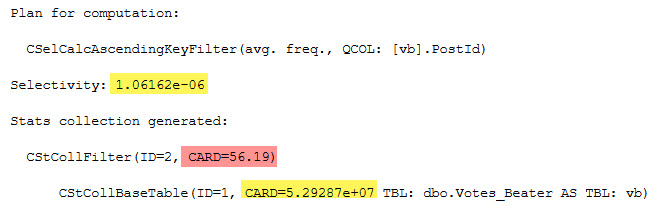

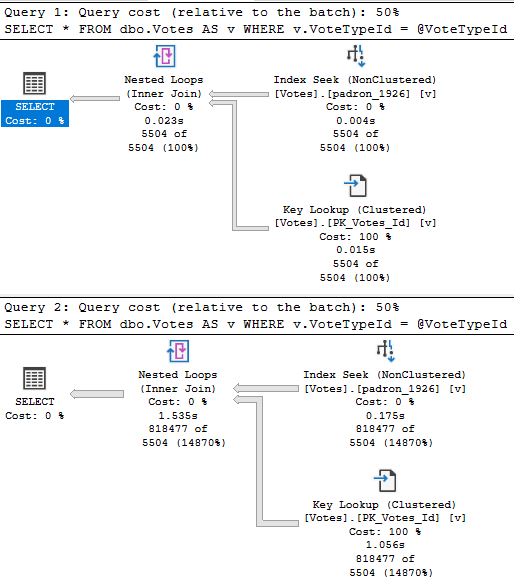

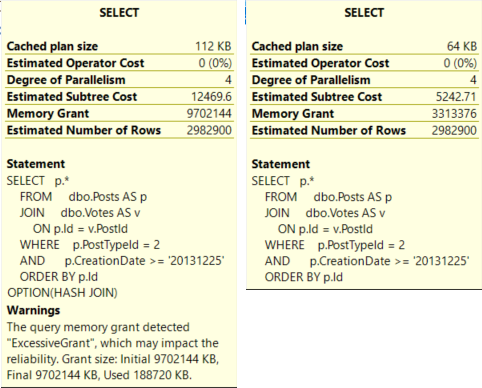

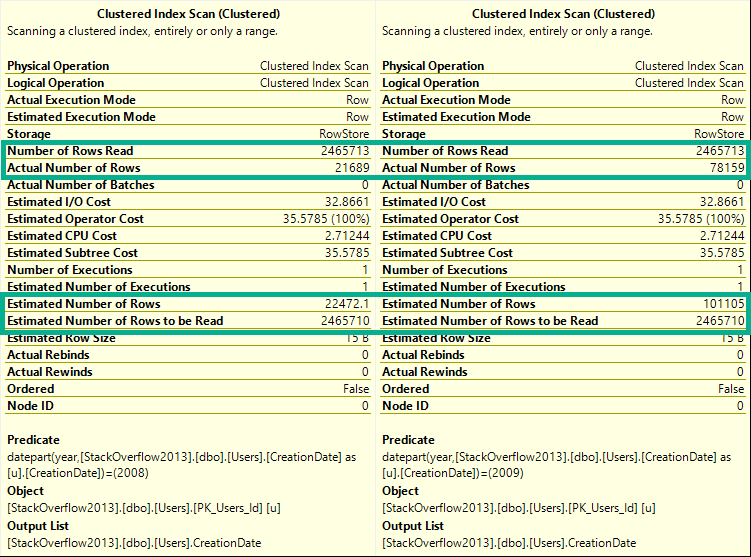

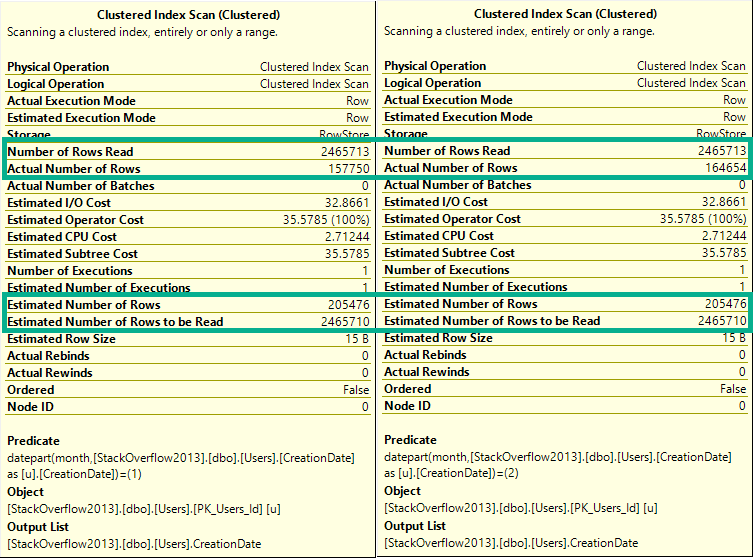

The filter against TenantID is expected to return 25% of the rows in the table and the filter against InsertTime is expected to return less than 0.001% of the rows. As expected, the query optimizer chooses to filter against the InsertTime_TenantID index and the query finishes instantly:

The second query uses AT TIME ZONE:

SELECT COUNT(*) FROM dbo.Level100 l WHERE l.TenantID = 2 AND l.InsertTime > DATEADD(MINUTE, -10, CAST(SYSDATETIMEOFFSET() AT TIME ZONE N'Central Standard Time' AS DATETIME)) OPTION (MAXDOP 1);

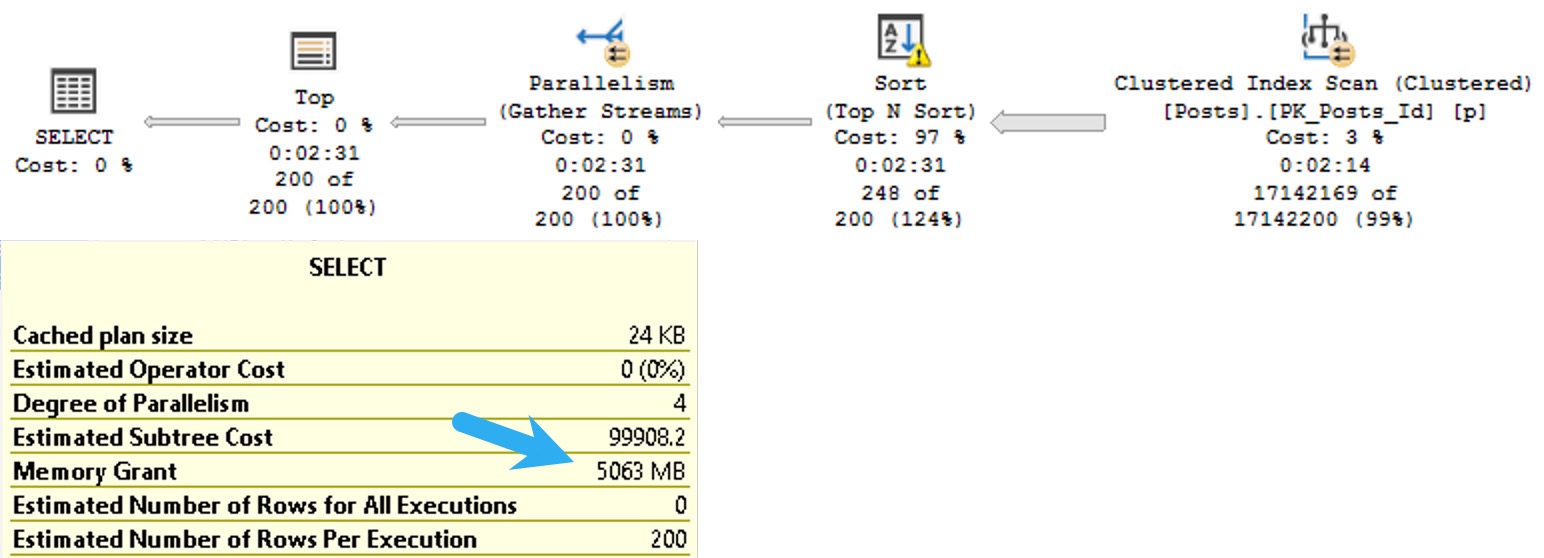

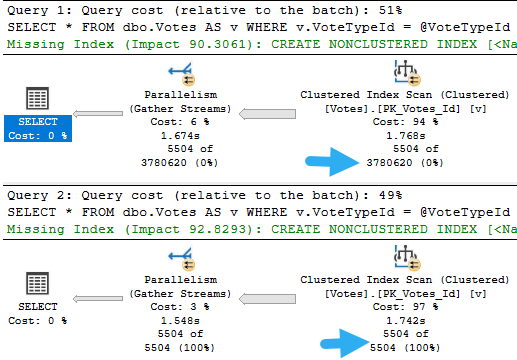

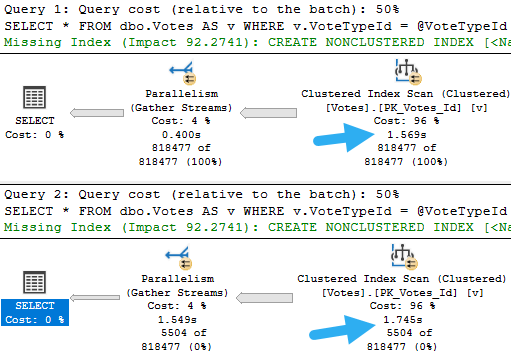

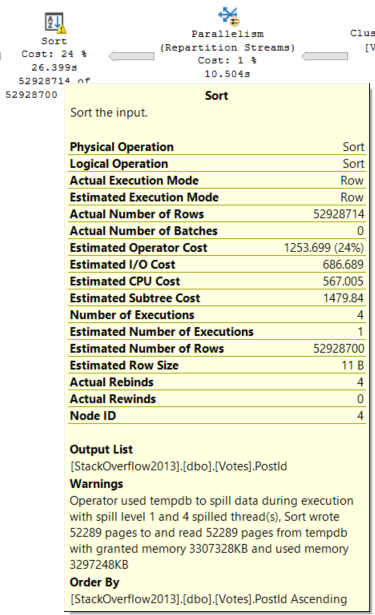

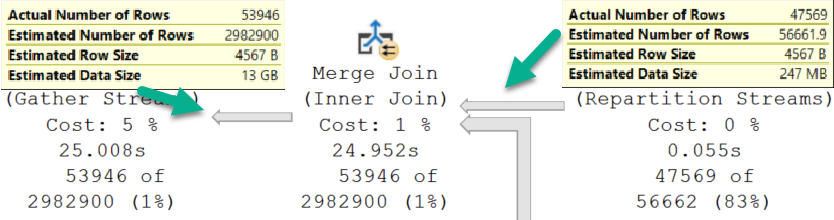

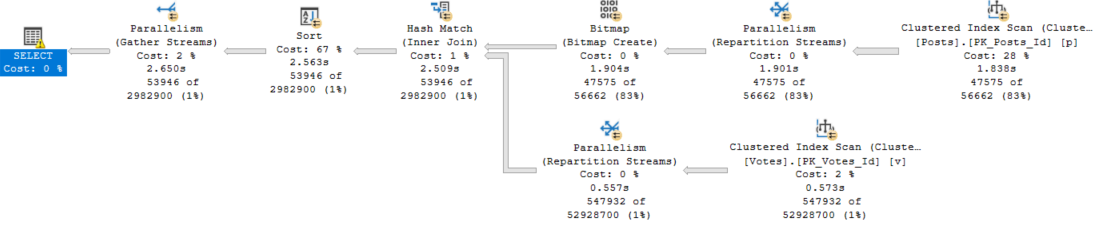

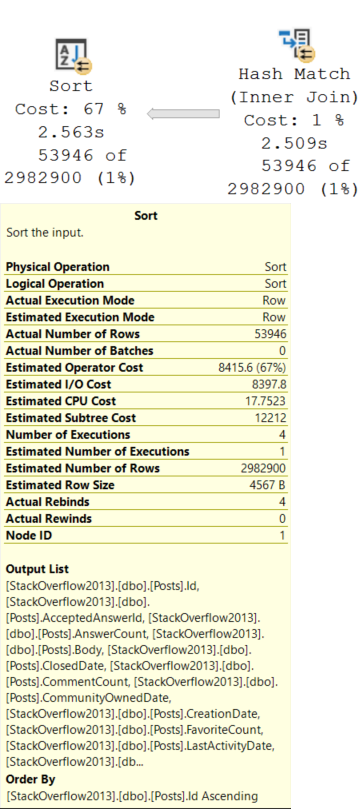

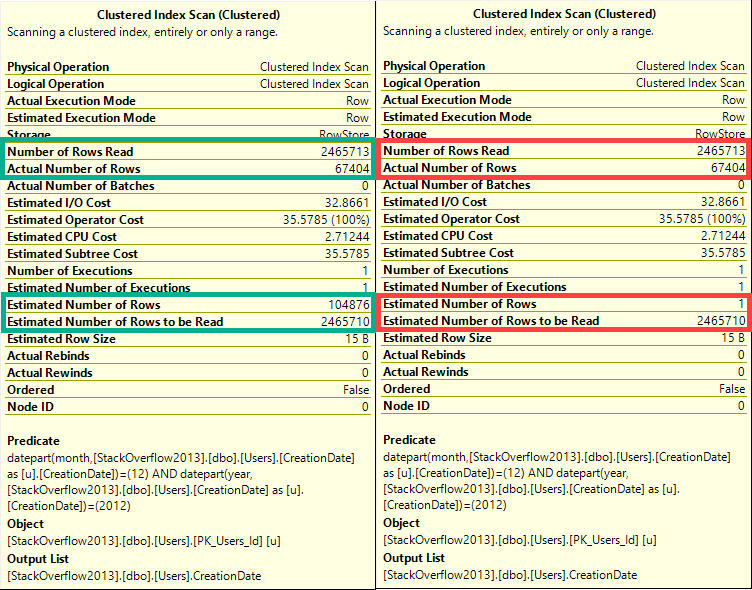

Once again, the filter against TenantID is expected to return 25% of the rows in the table. However, the presence of AT TIME ZONE results in an unknown inequality estimate against InsertTime which is 30%. The query optimizer makes a different choice and goes with the TenantID_InsertTime index because 25% < 30%:

Meme Preparation

It seems that we need to return to the bad old days before we had AT TIME ZONE available in SQL Server. One advantage that we have is that the function only needs to do the time zone conversion for the current moment in time. Central time is six hours behind UTC at the time of publication, so we could construct a function like this:

CREATE OR ALTER FUNCTION dbo.[GetDateCT](@PassInGetDateUTC DATETIME)

RETURNS DATETIME

WITH SCHEMABINDING

AS

BEGIN

RETURN DATEADD(HOUR, -6, @PassInGetDateUTC);

END;

GO

The input parameter is there to make the function eligible for inlining because GETDATE() and other similar functions prevent inlining. Returning to the same query as before:

SELECT COUNT(*) FROM dbo.Level100 l WHERE l.TenantID = 2 AND l.InsertTime > DATEADD(MINUTE, -10, dbo.[GetDateCT](GETUTCDATE())) OPTION (MAXDOP 1);

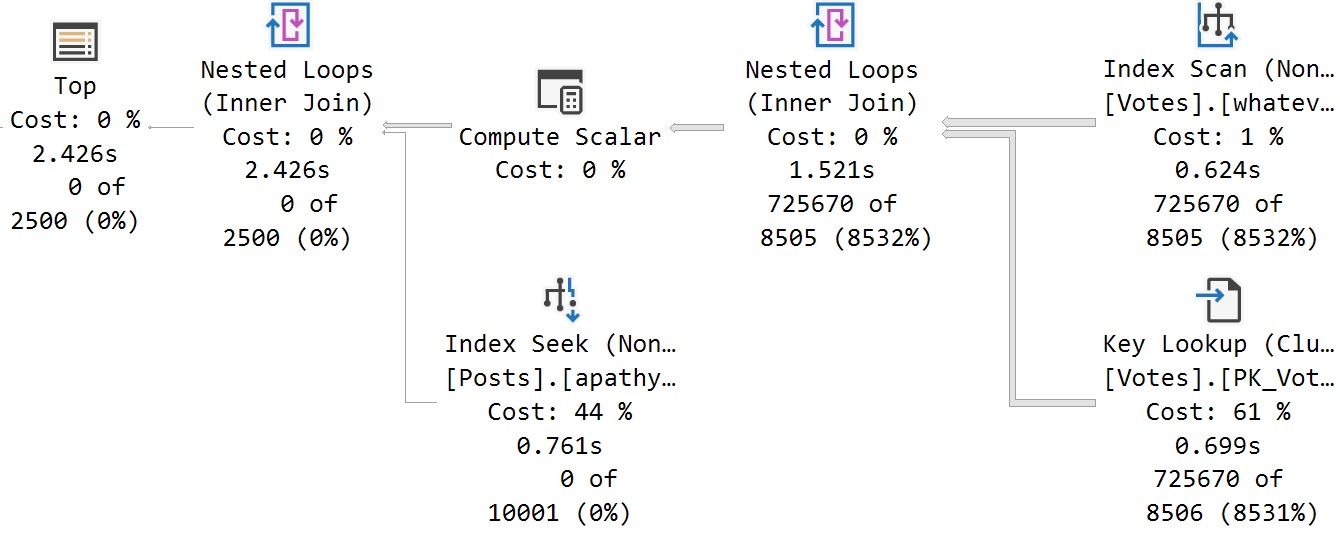

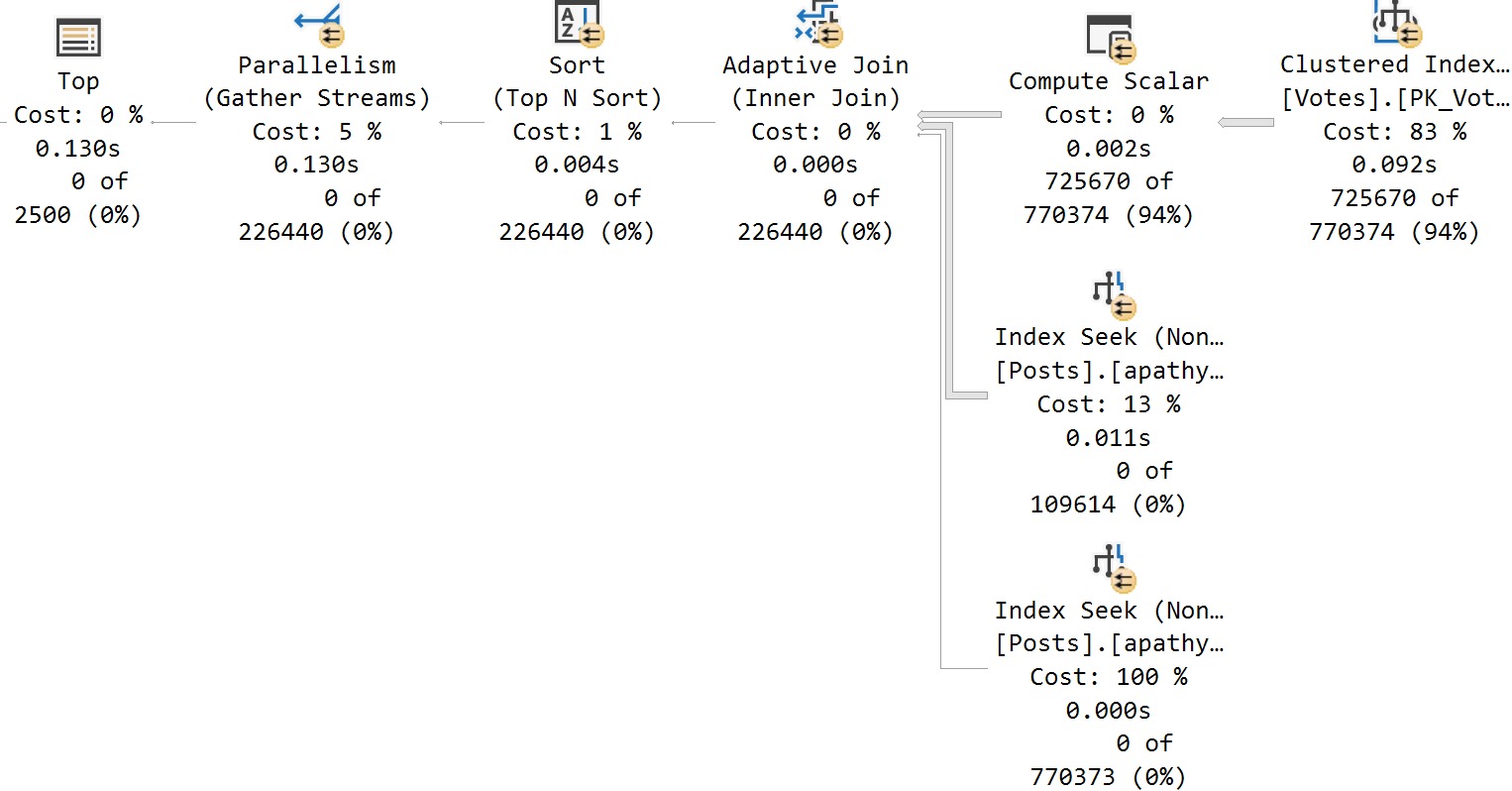

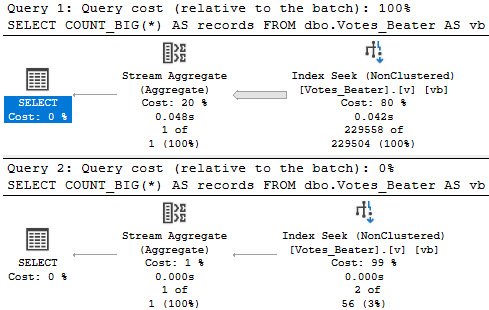

We can see that the new function results in a reasonable cardinality estimate and the query optimizer makes the correct index choice:

Of course, this function definition will need to be updated a few times per year to deal with daylight savings time changes. It isn’t too difficult to design a process to automatically perform these updates, but honestly I expect that the hassle of doing something will exceed the tolerance of most companies. However, this option is available to you if you need to preserve the usually superior cardinality estimation behavior enjoyed with GETDATE().

How to Convert Time Zones in SQL Server?

Parsing and Arsing

Any solution you pick, other than a naked reference to SYSDATETIMEOFFSET() along with AT TIME ZONE, may result in parsing issues in your code. For example, some parts of certain queries do not allow for subqueries, so a subquery replacement against a table-valued function will not work as a direct replacement. Even the simplest scalar UDF reference can result in code that fails to parse, such as the following:

CREATE TABLE #t (

StupidColumn DATETIME dbo.[GetDateNew]()

);

Fortunately, most of these issues are likely rare in practice and should be straightforward to address.

Keep in mind that you also need to fix GETDATE() references in areas such as default column values, constraints, computed columns, default column tables for table types, and so on. I would personally use a naked reference to SYSDATETIMEOFFSET() along with AT TIME ZONE for these areas as I see no benefit in using a scalar UDF.

Summary of Issues for Various Solutions

I was going to put some introduction text here, but whatever, you can figure out the point of this section on your own.

Inline table-valued function approach

- Most T-SQL compatibility issues

- Very verbose

- Cardinality issues with AT TIME ZONE

Naked SYSDATETIMEOFFSET() AT TIME ZONE N’Central Standard Time’ replacement

- Very verbose

- Cardinality issues with AT TIME ZONE

Simple scalar UDF with AT TIME ZONE:

- UDF is not eligible for inlining

- UDF will return different values as the query executes which probably isn’t what you want

Non-static scalar UDF:

- Function definition must occasionally be refreshed

- Somewhat verbose

- Some types of queries, such as those with CTEs, will not allow any UDF to be inlined

Final Thoughts

Messy no-win situations like this highlight the importance of picking the right cloud platform for your application. Azure SQL Managed Instance and Azure VMs avoid this problem entirely. Thanks for reading!