Credits and Debits

This post is inspired by my Humanoid BFFL Joe Sack’s wonderful post: Keep Humans in the Circle.

It’s an attempt to detail my progression using LLMs, up through how I’m using them today to build my free SQL Server Monitoring and Query Plan analysis tools.

While these days I do find LLMs (specifically Claude Code) to be wonderful enablers for my ideas, they still require quite a bit of guidance and QA, and they’re quite capable of (and sometimes seemingly eager to) wreck your day.

Just in the past couple weeks, I’ve had Dear Claude:

- Drop databases

- Git checkout and lose a bunch of work

- Merge to main when it had no business doing so

- Write a bunch of happy-path tests to make bad code pass

- Delete inconvenient data to make a query work

This is along with a bunch of side-annoyances, of course. It’s all in my local environment, so no one’s getting hurt, but it’s still a lot of recovery work for me at times. You should probably not let it go beyond that.

Even Amazon agrees.

Dear Claude has skills and a Claude.md file to give it persistent instructions to do things, but it still has quite a habit of not following them. Like they’re not even there. But they are there, Dear Claude.

Sometimes it can’t figure out how to query DuckDB without being blocked, and other times it will run SQL scripts to manually patch things rather than use the dashboard Installers to make sure those work properly (in my Monitoring tool, there’s an Installer meant to update procedure code and table schema on upgrade).

Manual patches are supposed to be verboten, but…

In other words, it’s a lot like hiring a junior developer who doesn’t really always listen to you, or know better, and then hiring a brand new junior developer every time the conversation compacts.

Hopefully the new one million token context makes this all a bit less painful. Time will tell.

Conversations Gone Wrong

I’ve been working with SQL Server for a long time. Too long, some might say.

Long enough to have quite particular and occasionally profound opinions about it, and long enough to be skeptical of anything that promises to make SQL Server easier at the level I work at.

Even SQL Prompt, which I like quite a bit, leaves me with rather a bit of work to (un)do at times. Perhaps it needs a .md file to learn from. Perhaps if it doesn’t get one, I’ll make something that does. The world is a fun place these days.

When LLMs showed up and everyone started losing their collective minds over them, I did what any reasonable person would do: I tried to lose my mind over them, too.

What followed was a months-long journey from complete, laughable frustration, to settling into a more productive use-case: Them being my little enablers.

The first thing I tried was the most natural thing in the world: having a conversation. About SQL Server. My beloved.

LLMs are supposed to be good at conversation, after all. This should be a meeting of the minds, right? All their years of training data, all my years of training data, together at last. And me with no one to talk to.

Wrong. Wrong, wrong, wrong. So very, horribly, totally, utterly, and terribly wrong. Good golly.

Whenever the monthly model updates roll out and the usual spate of hucksters talk about the most advanced, doctor-level reasoning capabilities, I laugh until it hurts.

I’d ask something like “what causes parameter sniffing problems with local variables?” and get back this enthusiastic, confident, completely wrong answer. The LLM would happily tell me that local variables cause parameter sniffing.

That’s all backwards, ‘natch. Local variables circumvent parameter sniffing, which is its own problem because you end up with the average density estimate guess instead of a sniffed value. This is often rather an unattractive proposition for skewed data distributions.
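Here’s a minimal sketch of the difference, assuming a hypothetical dbo.Orders table with a skewed CustomerID column. The procedure names are made up for illustration, and nothing here comes from a real codebase.

```sql
/* Hypothetical procedures against an assumed dbo.Orders table. */

/* Parameter used directly: the optimizer can sniff the value
   the plan is compiled with and use the histogram for it. */
CREATE OR ALTER PROCEDURE dbo.OrdersByCustomer_Sniffed
    @CustomerID int
AS
BEGIN
    SET NOCOUNT ON;

    SELECT
        o.OrderID,
        o.OrderDate
    FROM dbo.Orders AS o
    WHERE o.CustomerID = @CustomerID;
END;
GO

/* Parameter copied to a local variable: the value is unknown
   at compile time, so the estimate comes from average density
   rather than from the value that was passed in. */
CREATE OR ALTER PROCEDURE dbo.OrdersByCustomer_LocalVariable
    @CustomerID int
AS
BEGIN
    SET NOCOUNT ON;

    DECLARE
        @LocalCustomerID int = @CustomerID;

    SELECT
        o.OrderID,
        o.OrderDate
    FROM dbo.Orders AS o
    WHERE o.CustomerID = @LocalCustomerID;
END;
GO
```

With skewed data, the first procedure’s row estimate depends on whichever value the plan was compiled for, while the second should show the same density-based guess no matter what gets passed in.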

It got worse, too. I’d ask it about topics I know fundamentally quite well, and get back these half-cooked answers that sounded more like someone went to a one hour talk about a subject and came away with a lot of wrong ideas about what was said. Deeply incorrect in important places and unable to explain further.

World’s shortest interview.

Here’s the kind of exchange I’m talking about:

-- Me: Why might a parallel query with a large memory grant

-- still spill to tempdb?

--

-- LLM: A query may spill to tempdb when the memory

-- grant is insufficient for the data volume

-- being processed. This typically happens when

-- statistics are outdated and SQL Server

-- underestimates the number of rows...

--

-- Me: What about when the grant IS large enough

-- based on the estimate, but the distribution

-- is skewed?

--

-- LLM: Great question! When data distribution is

-- skewed, you should update statistics with

-- FULLSCAN to ensure accurate cardinality

-- estimates...

That’s not even wrong in an interesting way. It’s idiot advice like you’d find on LinkedIn. I won’t even dignify it.

It’s wrong in the “I’m going to confidently repeat the first three results from a search engine” way.

The for-realsies answer involves how memory is allocated to individual threads during execution, and how thread memory allocation can cause spills even when total memory is fine.

Remember that all plans start as serial plans, and that’s when the memory grant is assigned. If a parallel plan is chosen, the memory grant gets divided up equally amongst DOP threads. If one thread gets many/all the rows, it’s likely that division of memory will not quite be adequate.
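To see the rough shape of this, the sketch below reads the memory grant DMV from a second session while the query runs. The per-thread column is a deliberate simplification that assumes an even split across DOP threads and ignores how memory is shared between operators, so treat it as a ballpark, not an accounting.

```sql
/* Run from a second session while the query of interest executes. */
SELECT
    deqmg.session_id,
    deqmg.dop,
    deqmg.requested_memory_kb,
    deqmg.granted_memory_kb,
    deqmg.max_used_memory_kb,
    /* Simplification: assumes an even split across DOP threads */
    approx_kb_per_thread =
        deqmg.granted_memory_kb / NULLIF(deqmg.dop, 0)
FROM sys.dm_exec_query_memory_grants AS deqmg
WHERE deqmg.granted_memory_kb IS NOT NULL
ORDER BY
    deqmg.granted_memory_kb DESC;
```

Under that simplification, an 8 GB grant at DOP 8 leaves each thread with roughly 1 GB. If one thread ends up with most of the rows, it spills once it runs out of its share, even though the other seven shares are sitting mostly unused.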

But the LLM had no idea about any of that. It just kept cheerfully, wantonly suggesting I update my statistics, like that’s the answer to everything.

At least it didn’t tell me to do index maintenance, which is a step up from some people.

Complete waste of time for expert-level SQL Server topics.

I’d rather talk to a cat.

I am allergic to cats.

Getting Organized

One thing I’m terrible at is dull admin work.

Things like building course outlines, writing readme files, and keeping various things up to date as they change along the way.

Documenting things is not my idea of a good time.

I’d often try to use LLMs for that, and walk away sorely disappointed. Much like the above exchange, the LLM would build course content that is unusable.

- Page splits, the silent killer

- Logical reads: why your queries can’t have nice things

- MAXDOP 1 or MAXDOP None: Which is worse?

Okay, cool, let me get in a time machine back to 2008 so any of this will be relevant.

I would sooner die, dear reader.

For the documentation bits, it would just hallucinate things that the code had never done, and would never do. For example, it kept insisting that sp_IndexCleanup checks index fragmentation. Dawg is you [bleeping] kidding me?

For the training outlines, I mostly just binned them all. There were some okay fundamental ideas, and progression paths, but the details were a nightmare. How fast is a logical read, anyway?

For the readme files, there was a lot of manual labor fixing them.

Sure it was nice to have something that knew markdown, and could make things pretty for me, but having to give all the text a correctiondectomy was quite the opposite of good.

But hey, it gave me a starting place, and I could work from there. That’s more than I started with.

Writing Queries: Sorta Better, But Still Painful

Okay, so it couldn’t talk about SQL Server competently. But could it write SQL?

Can I finally have something bang out a bunch of queries I don’t feel like writing? Would any of them make good demos, or be logically correct?

I figured the bar was lower here. I’m not asking it to understand optimizer internals. I’m asking it to write a SELECT statement that doesn’t error out.

And it kind of could. In the way that a junior developer who just finished a SQL boot camp kind of can. And the way an ORM kind of can.

Sure, it starts with SELECT, and it has mostly the right table names, and it has all the columns (most of them even existed, too), but…

It still seemed to not understand data very well.

-- Write a query to find the top 10 customers

-- by total order amount for orders placed

-- in the last 90 days

--

-- Tables:

-- dbo.Customers (CustomerID, CustomerName, Email)

-- dbo.Orders (OrderID, CustomerID, OrderDate, TotalAmount)

And I’d get back:

SELECT TOP 10

c.CustomerName,

c.Email,

SUM(o.TotalAmount) AS TotalOrderAmount

FROM Customers c

INNER JOIN Orders o

ON c.CustomerID = o.CustomerID

WHERE DATEDIFF(DAY, o.OrderDate, GETDATE()) < 90

GROUP BY c.CustomerName, c.Email

ORDER BY TotalOrderAmount DESC

Which is… fine. It works. Looks like crap, though.

But look at it. If a person handed that to me, they might lose that hand. And database access.
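For contrast, here’s one way that query might be tidied up. This is a sketch under my own assumptions about what’s objectionable (missing schema prefixes, a function wrapped around the OrderDate column, and grouping on name and email instead of the key), not a definitive rewrite.

```sql
SELECT TOP (10)
    c.CustomerName,
    c.Email,
    TotalOrderAmount =
        SUM(o.TotalAmount)
FROM dbo.Customers AS c
JOIN dbo.Orders AS o
  ON o.CustomerID = c.CustomerID
/* Leaving the column alone keeps the predicate index-friendly */
WHERE o.OrderDate >= DATEADD(DAY, -90, SYSDATETIME())
GROUP BY
    /* Grouping on the key keeps two customers who share
       a name and email from being summed together */
    c.CustomerID,
    c.CustomerName,
    c.Email
ORDER BY
    TotalOrderAmount DESC;
```

Note that this measures 90 days back from the current time, while the DATEDIFF version counts day boundaries crossed, so the two can disagree about rows near the edge. Which one “last 90 days” means is a question for whoever asked.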

For more complex queries, it would break down faster than hostage negotiations for an ex-wife.

I asked it to write a running totals query with a proper window function. I do enjoy a proper window function.

-- What I asked for:

-- Running total of sales by month, reset per fiscal year,

-- with a percentage of the annual total so far

-- What I got back:

SELECT

FiscalYear,

MonthNum,

MonthlySales,

SUM(MonthlySales) OVER (

PARTITION BY FiscalYear

ORDER BY MonthNum

ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW

) AS RunningTotal,

MonthlySales / SUM(MonthlySales) OVER (

PARTITION BY FiscalYear

) * 100 AS PctOfAnnual

FROM MonthlySalesData

ORDER BY FiscalYear, MonthNum;

Spot the problem?

That PctOfAnnual calculation is the percentage of the full year, not the percentage of the annual total so far.

The denominator needs to be the running total, not the total-total.
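A sketch of what the corrected query could look like, assuming the same MonthlySalesData table lives in the dbo schema and that MonthlySales is some numeric type. The decimal(9, 2) conversion is my own choice for illustration.

```sql
SELECT
    x.FiscalYear,
    x.MonthNum,
    x.MonthlySales,
    x.RunningTotal,
    /* Denominator is the running total, not the full-year total.
       The 100.0 avoids integer division if MonthlySales is an int. */
    PctOfAnnualSoFar =
        CONVERT
        (
            decimal(9, 2),
            x.MonthlySales * 100.0 / NULLIF(x.RunningTotal, 0)
        )
FROM
(
    SELECT
        msd.FiscalYear,
        msd.MonthNum,
        msd.MonthlySales,
        RunningTotal =
            SUM(msd.MonthlySales) OVER
            (
                PARTITION BY
                    msd.FiscalYear
                ORDER BY
                    msd.MonthNum
                ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW
            )
    FROM dbo.MonthlySalesData AS msd
) AS x
ORDER BY
    x.FiscalYear,
    x.MonthNum;
```

With this definition, the first month of every fiscal year comes out at 100 percent (as long as its sales aren’t zero), which is a decent sanity check on the results.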

Look, I’m not great at math, and I can see this is a problem. It’s not that me or another human couldn’t or wouldn’t mess this thing up too, but these robots are supposed to be the almighty beings replacing us all in the workforce.

When I pointed this out, it apologized profusely, and gave me back a version where it replaced the denominator with the running total expression, but didn’t take much pride in its efforts generally.

The formatting is an atrocity, and it didn’t really do much for making sure data types were constrained on the expressions. Again, very human laziness on display.

Useful for scaffolding simple queries, but I’d spend almost as long fixing its output as I would writing it myself.

It quite reminded me of this old Dennis the Menace episode:

Uncle Ned asks Helen to plant some bulbs, and she plants all 120 of them all upside down.

In many of these scenarios, I played the part of Uncle Ned. I just gave up and waited until next year.

New Stored Procedures: Guts and Bones and Bloody Knuckles

Writing a stored procedure from scratch is tedious work.

- Error handling

- Parameter validation

- Debug modes

- Version detection

- Lots of dynamic SQL

- Working out #temp table definitions

I thought maybe the LLM could handle that part, and I’d fill in the more detailed knowledge.

Build me a building. I’ll do the decorating. I’ve got quite the fabulous eye.

The template it produced wasn’t that bad. The formatting was still rotten garbage, but that’s what SQL Prompt is for.

CREATE OR ALTER PROCEDURE dbo.ProcessCustomerOrder

@CustomerID int,

@OrderDate datetime2(7) = NULL,

@OrderItems dbo.OrderItemType READONLY,

@NewOrderID int OUTPUT

AS

BEGIN

SET NOCOUNT ON;

SET XACT_ABORT ON;

IF @CustomerID IS NULL

BEGIN

RAISERROR(N'@CustomerID cannot be NULL.', 16, 1);

RETURN;

END;

SET @OrderDate = ISNULL(@OrderDate, SYSDATETIME());

BEGIN TRANSACTION;

BEGIN TRY

INSERT dbo.Orders

(

CustomerID,

OrderDate,

StatusID

)

VALUES

(

@CustomerID,

@OrderDate,

1 -- New

);

SET @NewOrderID = SCOPE_IDENTITY();

INSERT dbo.OrderItems

(

OrderID,

ProductID,

Quantity,

UnitPrice

)

SELECT

@NewOrderID,

oi.ProductID,

oi.Quantity,

oi.UnitPrice

FROM @OrderItems AS oi;

COMMIT TRANSACTION;

END TRY

BEGIN CATCH

IF @@TRANCOUNT > 0

BEGIN

ROLLBACK TRANSACTION;

END;

THROW;

END CATCH;

END;

GO

That skeleton is pretty decent. SET NOCOUNT ON and SET XACT_ABORT ON, proper TRY/CATCH with a transaction check before ROLLBACK, SCOPE_IDENTITY() instead of @@IDENTITY.

It’s clear the training data included some moderate quality SQL Server content. Perhaps it’s the first thing to fully read Erland’s post.

But then I’d ask it to add business logic:

- Inventory checks

- Discount tier calculations

- Shipping cost logic with regional rules

And that’s when the great Pear Shapening would commence. I’m giving some mock examples, because if I just gave examples from my analysis stored procedures, they’d be largely unrelatable to most readers.

For me, there was just too much fixing to make the scaffolding make sense. Reminding it of things, asking it to include or add things, having that break other things.

Again, not saying a human (even me) wouldn’t have these problems, but this is what’s phasing us out?

Ho hum. That’s nice. Close laptop. Go for walk. At least a bartender will still talk to me.

The worst part was when it was almost right. A procedure that works perfectly in testing, but has a little concurrency issue under load, or that produces wrong results when a specific combination of parameters is passed in.

These are the bugs that cost you a lot of confidence in the robots.

What About Existing Code?

This was where things started to shift a bit.

There was still great suffering, and lots of bugs I had to find out about on my own later, but… at least it had a good example to start with, and it kinda figured out my formatting/style preferences. Less running for the Pepto Bismol than before.

Instead of asking the LLM to create something from nothing, I gave it existing code and asked it to make changes.

- Add a parameter (and keep the @help and readme up to date!)

- Refactor a query that would otherwise be a lot of tedious text moving

- Add error handling to a procedure in multiple places

Having existing code as context made a noticeable difference.

The LLM could see the style, the naming conventions, the patterns already in use, and generally try to match them.

-- Me: "Add a @Debug parameter to this procedure that,

-- when set to 1, prints the dynamic SQL instead

-- of executing it"

-- Before (snippet):

SET @sql = N'

SELECT ...'

+ @where_clause;

EXECUTE sys.sp_executesql

@sql,

@params,

@StartDate = @StartDate;

-- What I got back:

-- Added @Debug bit = 0 to the parameter list

IF @Debug = 1

BEGIN

PRINT @sql;

RETURN;

END;

EXECUTE sys.sp_executesql

@sql,

@params,

@StartDate = @StartDate;

That’s reasonable. It understood the intent, put the debug check in the right place, and all the other many right places all at once.

But, like, I didn’t want to RETURN there. Or anywhere. There was so much more stored procedure left to run. These types of unforced errors are quite common.
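Something closer to the request might look like the sketch below: print instead of execute, then keep going. The variable values and the dbo.Orders query are placeholders so the batch stands on its own. In the real thing these would be the procedure’s own parameters and dynamic SQL.

```sql
/* Placeholder values so the sketch runs as a standalone batch */
DECLARE
    @Debug bit = 1,
    @StartDate datetime2(7) = '20240101',
    @sql nvarchar(max) = N'
SELECT
    o.OrderID
FROM dbo.Orders AS o
WHERE o.OrderDate >= @StartDate;',
    @params nvarchar(max) = N'@StartDate datetime2(7)';

IF @Debug = 1
BEGIN
    /* PRINT cuts nvarchar strings off at 4,000 characters,
       so long dynamic SQL needs to be printed in chunks */
    PRINT @sql;
END;
ELSE
BEGIN
    EXECUTE sys.sp_executesql
        @sql,
        @params,
        @StartDate = @StartDate;
END;

/* No RETURN: the rest of the procedure carries on from here */
```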

Try-hard robots.

And the more complex the change, the more it struggled.

I asked it to refactor a 400/500-line procedure to use temp tables instead of table variables, and it made the swap but missed that one of them was a TVP and that kinda wasn’t all that cool to deal with.

Turns out LLMs are about as aware of TVPs as your average SQL developer. At least there’s some consistency in the world, I suppose.
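The wrinkle is that a table-valued parameter has to stay a READONLY parameter of a user-defined table type, so it can’t simply be swapped for a #temp table. One common workaround is copying it into a #temp table inside the procedure. The sketch below uses hypothetical type and procedure names.

```sql
/* Hypothetical table type, for illustration only */
CREATE TYPE dbo.ProductListType_Sketch AS TABLE
(
    ProductID int NOT NULL PRIMARY KEY
);
GO

CREATE OR ALTER PROCEDURE dbo.TvpToTempTable_Sketch
    @ProductList dbo.ProductListType_Sketch READONLY
AS
BEGIN
    SET NOCOUNT ON;

    /* The parameter stays a TVP. The copy is the part
       that gets temp table benefits, like statistics. */
    CREATE TABLE #ProductList
    (
        ProductID int NOT NULL PRIMARY KEY
    );

    INSERT #ProductList
    (
        ProductID
    )
    SELECT
        pl.ProductID
    FROM @ProductList AS pl;

    SELECT
        pl.ProductID
    FROM #ProductList AS pl;
END;
GO
```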

The back-and-forth was still frustrating.

I’d point out a problem, it’d fix that but introduce a new one.

I’d point out the new one, it’d fix that but regress on something else.

Three rounds in, I’m basically re-reviewing the entire procedure from scratch anyway.

Again, playing the part of Uncle Ned. Poor Uncle Ned. All 120 bulbs.

Bug Hunting And Code Reviews

Here’s where things got a bit better.

Instead of asking the LLM to write code, I started asking it to read code and tell me what was wrong with it.

Turns out, LLMs are way better at critique than creation. Which, honestly, tracks.

I am also better at critiques than creation at times. Especially when someone else has to act on the critique.

-- Me: "Review this procedure for performance issues and bugs"

-- The procedure had, among other things:

SELECT

o.*

FROM dbo.Orders AS o

WHERE o.OrderDate BETWEEN @StartDate AND @EndDate

AND EXISTS

(

SELECT

1/0

FROM dbo.OrderItems AS oi

WHERE oi.OrderID = o.OrderID

AND oi.ProductID IN

(

SELECT

ProductID

FROM @ProductList

)

)

OPTION (RECOMPILE);

The LLM flagged several things, and they were actually decent:

-- LLM's review points (paraphrased):

--

-- 1. SELECT * pulls unnecessary columns, can prevent

-- covering index usage

--

-- 2. BETWEEN on datetime: if @EndDate is '2024-01-31',

-- rows at exactly midnight are included but nothing

-- after. Use >= @StartDate AND < @EndDatePlusOne

--

-- 3. The table variable @ProductList won't have stats,

-- so the optimizer will estimate 1 row regardless

-- of actual content. Consider a temp table.

--

-- 4. OPTION (RECOMPILE) on every execution: is this

-- intentional? If this runs frequently, you're

-- paying compilation cost every time.

Not all of it is earth-shattering, but it’s the kind of thing a DBA might flag. The BETWEEN on datetime point is one I see people get wrong constantly.

The table variable statistics point is correct and relevant, though it kinda lost that the recompile hint would get at least table cardinality. It’s nice that it was in the right neighborhood.
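The BETWEEN point is easy to show with a few rows. This sketch uses a throwaway #Orders temp table as a stand-in for dbo.Orders, with made-up data.

```sql
/* Stand-in table with made-up rows around the end date */
CREATE TABLE #Orders
(
    OrderID int NOT NULL,
    OrderDate datetime2(0) NOT NULL
);

INSERT #Orders
(
    OrderID,
    OrderDate
)
VALUES
    (1, '2024-01-30T23:59:59'),
    (2, '2024-01-31T00:00:00'),
    (3, '2024-01-31T13:45:00'),
    (4, '2024-02-01T00:00:00');

DECLARE
    @StartDate date = '20240101',
    @EndDate date = '20240131';

/* BETWEEN stops at midnight on the end date:
   returns OrderID 1 and 2, misses 3 */
SELECT
    o.OrderID
FROM #Orders AS o
WHERE o.OrderDate BETWEEN @StartDate AND @EndDate;

/* Half-open range covers the whole end date:
   returns OrderID 1, 2, and 3 */
SELECT
    o.OrderID
FROM #Orders AS o
WHERE o.OrderDate >= @StartDate
AND   o.OrderDate <  DATEADD(DAY, 1, @EndDate);
```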

It’s sort of like if Helen had planted the bulbs a bit sideways instead of upside down.

I could take each of those points, decide if I agreed, ask to see a proposed fix, and then evaluate the fix. I’m in control. Mostly.

The big difference was that I was the one making decisions. The LLM was providing input, not output. It was a reviewer, not an author.

For actual bugs, it was even better:

-- Me: "This procedure sometimes returns duplicate rows.

-- Can you figure out why?"

-- [insert 200-line procedure here]

--

-- LLM: "The JOIN between #TempOrders and

-- dbo.OrderHistory on OrderID can produce

-- multiple matches when an order has been

-- modified more than once, since OrderHistory

-- keeps all versions. You probably want to

-- filter to only the most recent history record

-- per OrderID, or use a ROW_NUMBER() window

-- function to deduplicate."

That’s exactly the kind of answer I want quickly. It identified the specific join, explained why it produces duplicates, and suggested two reasonable fixes.
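The ROW_NUMBER() option might look something like this sketch. The #OrderHistory temp table stands in for dbo.OrderHistory, and the StatusID and ModifiedDate columns are assumptions, since the real table definition isn’t shown.

```sql
/* Stand-in tables with made-up rows */
CREATE TABLE #TempOrders
(
    OrderID int NOT NULL PRIMARY KEY
);

CREATE TABLE #OrderHistory
(
    OrderID int NOT NULL,
    StatusID int NOT NULL,
    ModifiedDate datetime2(0) NOT NULL
);

INSERT #TempOrders (OrderID)
VALUES (1), (2);

INSERT #OrderHistory (OrderID, StatusID, ModifiedDate)
VALUES
    (1, 1, '2024-01-01T09:00:00'),
    (1, 2, '2024-01-02T09:00:00'), /* order 1 modified twice */
    (2, 1, '2024-01-01T10:00:00');

/* A plain join on OrderID returns order 1 twice.
   Numbering history rows per order, newest first,
   and keeping row 1 leaves one row per order. */
SELECT
    t.OrderID,
    latest.StatusID,
    latest.ModifiedDate
FROM #TempOrders AS t
JOIN
(
    SELECT
        oh.OrderID,
        oh.StatusID,
        oh.ModifiedDate,
        n =
            ROW_NUMBER() OVER
            (
                PARTITION BY
                    oh.OrderID
                ORDER BY
                    oh.ModifiedDate DESC
            )
    FROM #OrderHistory AS oh
) AS latest
  ON latest.OrderID = t.OrderID
WHERE latest.n = 1;
```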

I didn’t have to go through six rounds of “no, that’s not it” with more and more all caps and curses being introduced before getting somewhere useful.

The only time I want to go through six rounds without being useful is at happy hour.

The Lightbulb Cometh

For all of the previous stages, I was the bottleneck.

The LLM would produce something, I’d review it, find problems, describe the problems in English, the LLM would try to fix them based on my description, ad nauseam, alpha and omega, prompt without end, amen.

It was like pair programming where my partner can’t see the screen. There are also a variety of cooking competition show similarities here. Probably something like two people trying to cook the same dish without being able to see what the other is doing, or something.

The question that changed how I work with LLMs was: Why am I the middleman, here?

I gave Claude Code access to sqlcmd, connected it to a development database, and instead of asking it to write a query and hand it to me to test, I told it to write the query, run the query, look at the results, and figure out if they were right.

At least as much as it could figure out if the results were right.

This is where the happy-path code tests tend to happen, but you learn to catch those and tell the robot to act more adversarial pretty quickly.

The difference was pretty good.

-- What I used to do:

--

-- Me: "Write a query to find customers with

-- declining order frequency"

-- LLM: [writes query]

-- Me: [runs it] "This returns 0 rows, that

-- can't be right"

-- LLM: "Oh, let me fix the date logic..."

-- Me: [runs it] "Now it returns every customer"

-- LLM: "My apologies, I had the comparison

-- backwards..."

-- [repeat 4 more times]

-- What I do now:

--

-- Me: "Connect to the dev database. Find customers

-- whose order frequency has declined over the

-- last 3 quarters compared to the prior 3.

-- Run it and verify the results make sense."

--

-- Claude Code: [writes query]

-- Claude Code: [executes via sqlcmd]

-- Claude Code: "Got 847 rows. Let me verify.

-- Checking a sample customer... CustomerID 4521

-- had 12 orders in Q1-Q3 2024 and 4 orders in

-- Q4 2024-Q2 2025. That looks correct.

--

-- But I notice the query doesn't account for

-- customers who are brand new in the recent

-- period. Let me add a filter for minimum

-- account age..."

--

-- Claude Code: [modifies and re-executes]

-- Claude Code: "Refined to 612 rows."

It went from six rounds of me squinting at code to one round of it figuring things out on its own.

And it caught a problem I hadn’t even thought to mention (the new customer issue) because it could actually see the data.

This pattern became my default workflow for anything involving existing procedures:

-- Me: "The procedure dbo.CalculateMonthlyRevenue is

-- returning numbers that are about 15% too high

-- for January 2025. Figure out why."

--

-- Claude Code:

-- 1. Reads the procedure definition

-- 2. Runs it with test parameters

-- 3. Breaks the procedure into pieces, runs each

-- CTE/subquery independently

-- 4. Compares intermediate results

-- 5. Finds that a JOIN is including refunded orders

-- 6. Proposes a fix

-- 7. Runs the fixed version

-- 8. Compares output to a manual calculation

-- 9. Reports back with the fix and verification

All of that happens without me doing anything.

I’m not copying and pasting results.

I’m not describing output in English and hoping the LLM interprets it correctly.

It can just look.

Unit testing became way easier too:

-- Me: "Create a test scenario for dbo.ApplyDiscountTiers.

-- Set up test data covering: no discount, single tier,

-- multi-tier, boundary values, and NULL handling.

-- Run each scenario and verify the results match

-- the business rules in the procedure comments."

--

-- Claude Code creates a temp table with test cases,

-- executes the procedure for each one, compares actual

-- vs expected results, and reports:

--

-- "All 12 test cases passed except case 8: when

-- Quantity is exactly 100 (the tier boundary), the

-- procedure applies the higher discount tier.

--

-- The comment says 'orders of 100+ get Tier 2'

-- which is ambiguous: is 100 included in Tier 2

-- or not? Currently it is.

--

-- If that's wrong, line 47 needs >= changed to >."

That’s the kind of crap that would have taken fourteen rounds of back-and-forth to get to in the old workflow.

The LLM found the ambiguity, tested the actual behavior, cross-referenced it with the comments, and asked me the right questions.
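A table-driven boundary check along those lines could be sketched like this. The tier rules in the CASE expression are invented stand-ins for whatever dbo.ApplyDiscountTiers actually does, and the expected values assume the “more than 100” reading of the comment.

```sql
/* Test cases: expected tiers assume 100 is NOT in Tier 2 */
DECLARE
    @TestCases table
(
    CaseID int NOT NULL PRIMARY KEY,
    Quantity int NULL,
    ExpectedTier int NULL
);

INSERT @TestCases
(
    CaseID,
    Quantity,
    ExpectedTier
)
VALUES
    (1, NULL, NULL), /* NULL handling */
    (2, 0, 0),       /* no discount */
    (3, 99, 1),      /* just under the boundary */
    (4, 100, 1),     /* the boundary itself */
    (5, 101, 2);     /* just over the boundary */

SELECT
    tc.CaseID,
    tc.Quantity,
    tc.ExpectedTier,
    a.ActualTier,
    Result =
        CASE
            WHEN tc.ExpectedTier = a.ActualTier
            OR  (tc.ExpectedTier IS NULL AND a.ActualTier IS NULL)
            THEN 'pass'
            ELSE 'FAIL'
        END
FROM @TestCases AS tc
CROSS APPLY
(
    /* Invented stand-in for the procedure's tier logic,
       using >= at the boundary like the example above */
    SELECT
        ActualTier =
            CASE
                WHEN tc.Quantity >= 100 THEN 2
                WHEN tc.Quantity >= 1 THEN 1
                WHEN tc.Quantity = 0 THEN 0
            END
) AS a
ORDER BY
    tc.CaseID;
```

Only the Quantity = 100 case fails here, which is the point: the test doesn’t decide who’s right, it just forces the question about what “100+” was supposed to mean.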

All Good Things

The progression wasn’t about LLMs getting better (though they have, I believe). It was about me figuring out the right way to use them.

Asking an LLM to be an expert in SQL Server internals is asking it to do something it’s bad at.

It’s pattern matching against training data, and there isn’t enough deeply technical SQL Server content out there for it to pattern match well on niche topics. I’d often ask it loaded questions, get a bunch of absolute gobbledygook nonsense wrong answers, point it to a blog post with the right answer, and reap the apologies.

For every accurate article about parameter sniffing, there are fifty Stack Overflow answers that are somewhere between incomplete and wrong and terribly outdated.

Asking it to write code in isolation is slightly better, but you’re still fighting the fundamental problem: it can’t see what’s happening. It’s writing blind. It doesn’t know your data, your schema, your indexes, your edge cases.

So it makes guesses, and you spend your time correcting those guesses.

The sweet spot turned out to be giving it the ability to close the loop. Write something, test it, see the results, iterate. The same way a human developer works.

The LLM doesn’t need me to be its eyes and hands. It needs a connection string and permission to experiment.

I still do the final QA. I still review what it produces before anything goes out the door. I’m not handing the keys to the castle over.

But the amount of work that gets done before I even look at it is dramatically higher, and the quality of what I’m reviewing is way better, because it’s already been through several rounds of self-correction.

If you’re still arguing with chatbots or manually copy-pasting query results back and forth, try jumping ahead. Give it access to a dev environment. Let it run things. Let it fail and fix its own failures.

Stop being the middleman.

Enablement, and Other Drugs

For years, I’d wanted to build a monitoring tool for SQL Server.

Actually, I’m sort of lying. For years, I’d offered to help every single monitoring tool company make their monitoring tools less crappy.

What’d I get back? Crickets, runarounds, and a whole bunch of frustration.

Well, fine. They don’t care. I do. Perhaps I can take away enough of their money for them to someday care. That’s the goal, anyway. Or maybe they’ll just quit.

Either is fine, really.

So for a while, I started trying to talk developer-friends of mine into helping me build something. They’d all think it was a cool idea until the word “free” came up.

I’m not getting paid for anything, here. I’m probably losing work.

In fact, from what I can tell, the MCP tools I built in are doing a great job for people. I’ve gotten a lot of awesome feedback about them. But they’re also doing my job, so hopefully they’re also doing the jobs of lesser consultants, too. Better get back to the factory.

When I realized that developers were developing things using LLMs, I figured I could build off my SQL Server performance knowledge, and Vision Code myself exactly what I wanted. There was no Vibe Coding here, because I knew exactly what I wanted (pretty much, though that has evolved a bit since the initial process began).

I also, like, know what I’m doing and what I want and what’s right and I’m not just doing a bunch of guesswork to get there.

From the beginning, I knew that I couldn’t skimp on things like security, so I made sure to use Windows Credential Manager from the start. If you don’t trust that, then you should probably uninstall SSMS.

But this introduced quite a new set of pain points with my robot enablers: they can’t run something and look at a GUI.

While they could run a query and validate results, it was me who had to iterate for long periods of time trying to get things visually correct. I’m still working out some things I don’t quite love in that regard, but the list is narrowing, at least. I can take screenshots and show them, but that doesn’t guarantee a good outcome always.

Work Flows

When people open issues, I have Claude review the issue, investigate the code, and come up with reasonable fixes for testing.

- Does it always work? Nope.

- Is Claude always right the first time? Nope.

- Do I often find myself frantically hitting the Escape key to stop it doing something asinine? Yes.

But is this something that I could do on my own? Learning WPF? C#? XAML?

Hell no.

The robots have enabled me to see this through, and continue to work on it at a pace that would otherwise be impossible.

And I gotta tell you, for something that’s only been out a couple months, I think it’s competitive-to-better than stuff that’s been around 10 or more years, and those people are charging you a lot of money for the privilege of being annoyed and unhelped.

I’m going to keep at it until I wear out my Max plan.

But it’s not just this stuff. It has also allowed me to work quickly on:

- Getting a TON of issues closed out in sp_WhoIsActive

- Doing a crazy round of code review in the First Responder Kit

- Working out a bunch of nits and gripes in my own SQL troubleshooting scripts

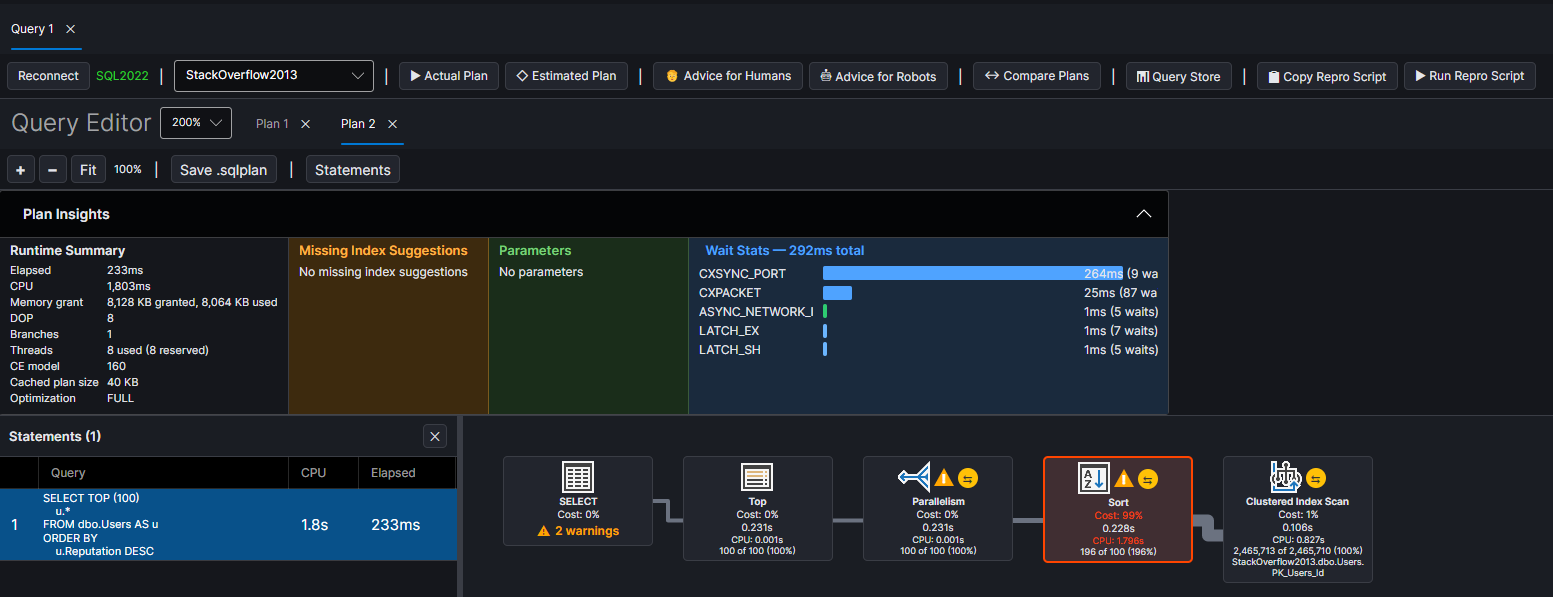

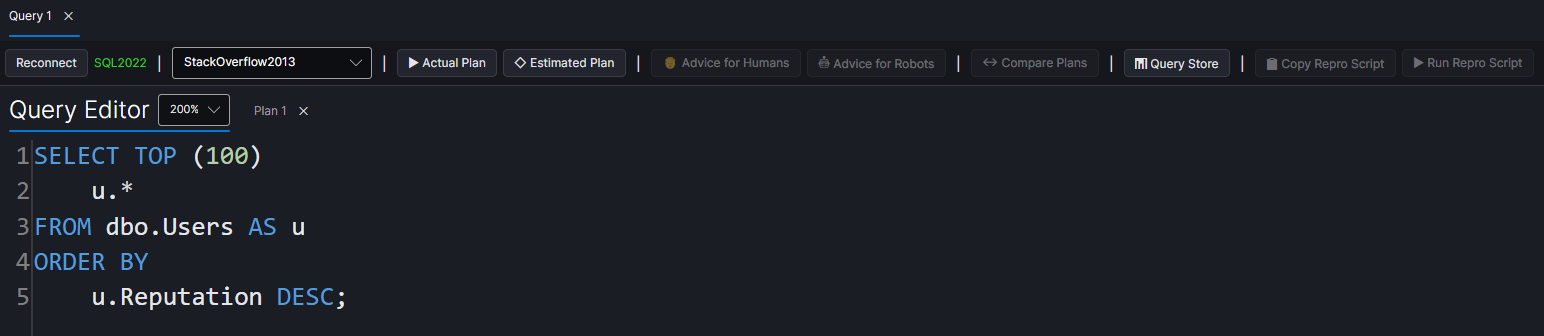

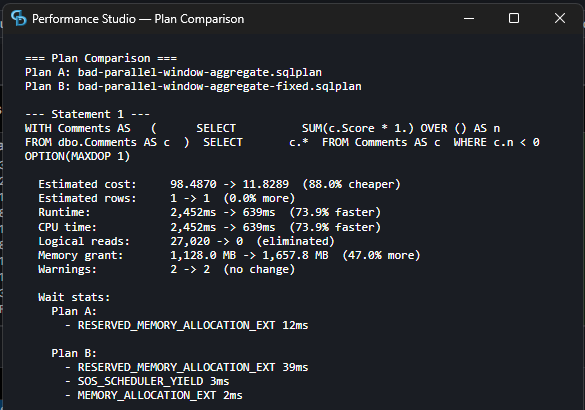

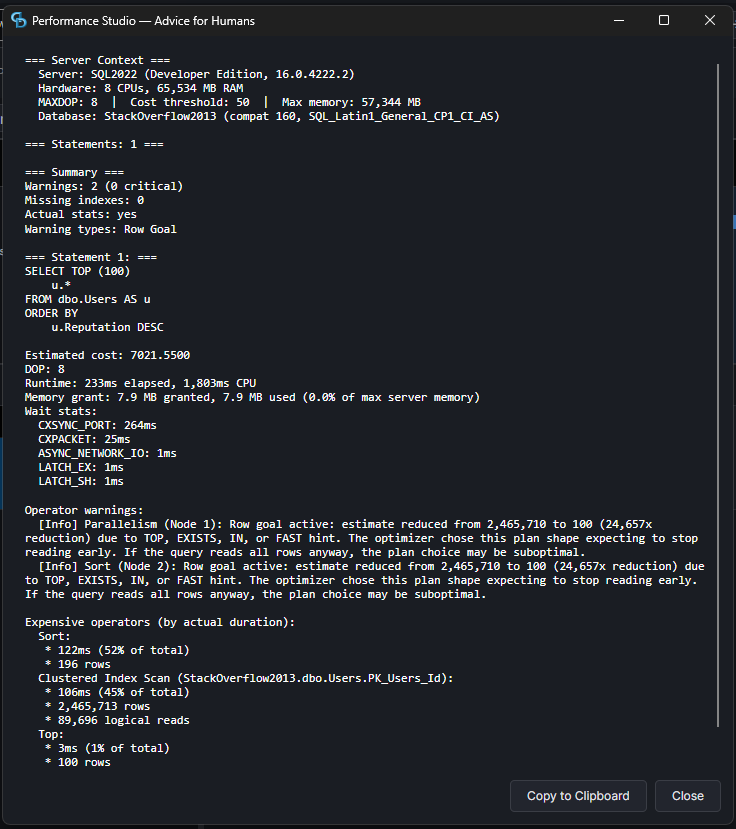

- Port the plan analyzer from the monitoring tool to a standalone product

- Transcribe and summarize my YouTube videos

- A bunch of personal projects

I can get a lot of stuff done and out into the world that I couldn’t before. Going from nothing to an imperfect something is fine with me. The world is full of imperfect software. At least I’m willing to fix mine.

An imperfect something is something that can be improved. Nothing is still nothing, no matter how much you think about it.

If you’re out there reading this, I’d encourage you to give something like Claude a crack at helping you build something that has always seemed too difficult, tedious, or involved for you to personally get started on.

It’s going to take some time and patience, and you’re going to have to supervise the process and output, but you’ll have something that used to be nothing.

Thanks for reading!
