Do That, But Faster

Serial zones in parallel plans can leave a lot of performance on the table. One of the best ways to see that is with insert queries that do a lot of work. A big contrast between @table variables and #temp tables is that the former fully disallows parallelism in modification queries, and the latter do not.

The story gets a little more complicated when inserting into a columnstore index, but this post isn’t really about that.

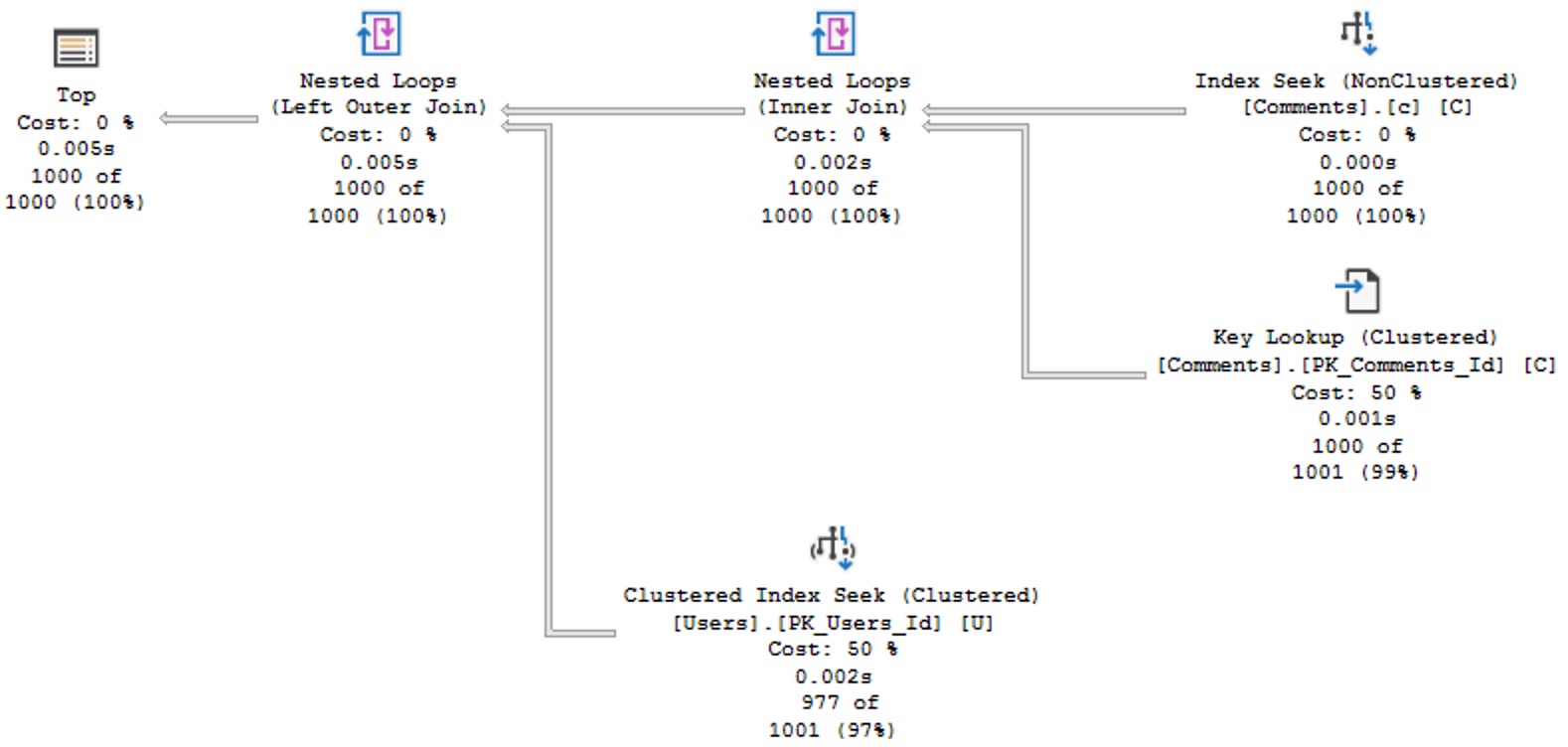

Let’s look at some scenarios where a fully parallel insert is allowed, and then not allowed.

The thing to keep an eye out for is the insert operator being in the serial zone. For the purposes of this thread:

Works: SELECT INTO

As long as you don’t do anything too goofy here, a fully parallel insert will “always” be allowed, here.

Goofy things will be explained later in the post.

--This will "always" work, as long as you don't do

--anything listed below in the "broken" select

SELECT

C.UserId,

SumScore =

SUM(C.Score)

INTO

#AvgComments_SelectInto

FROM

dbo.Comments AS C

GROUP BY

C.UserId

HAVING

SUM(C.Score) > 200;

DROP TABLE #AvgComments_SelectInto;

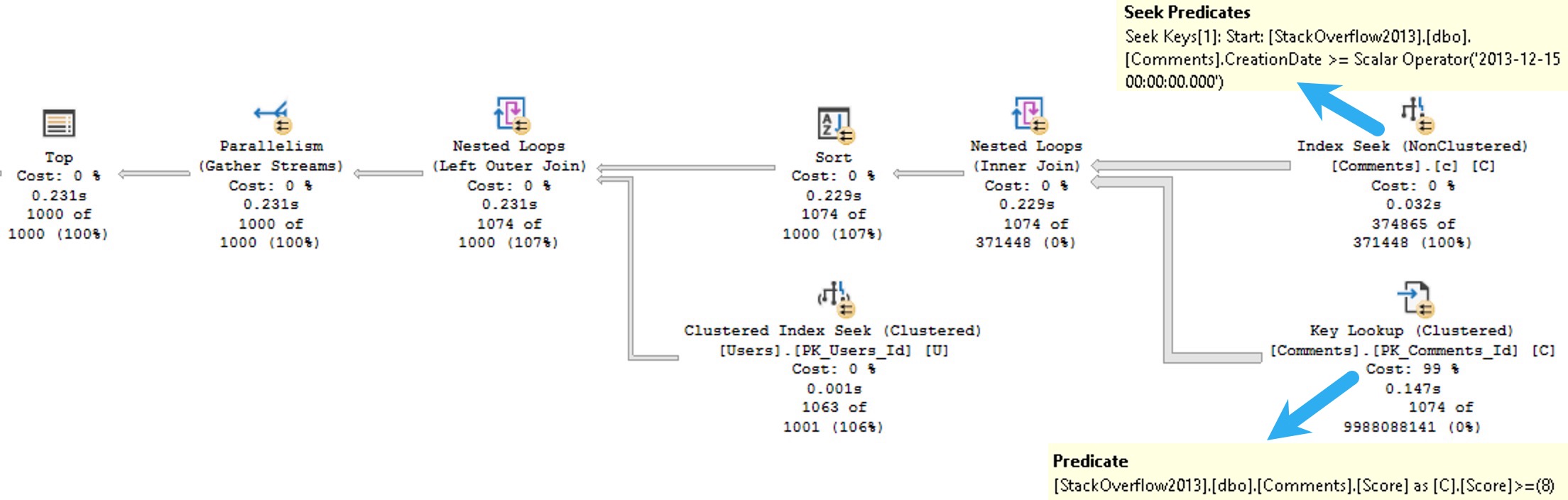

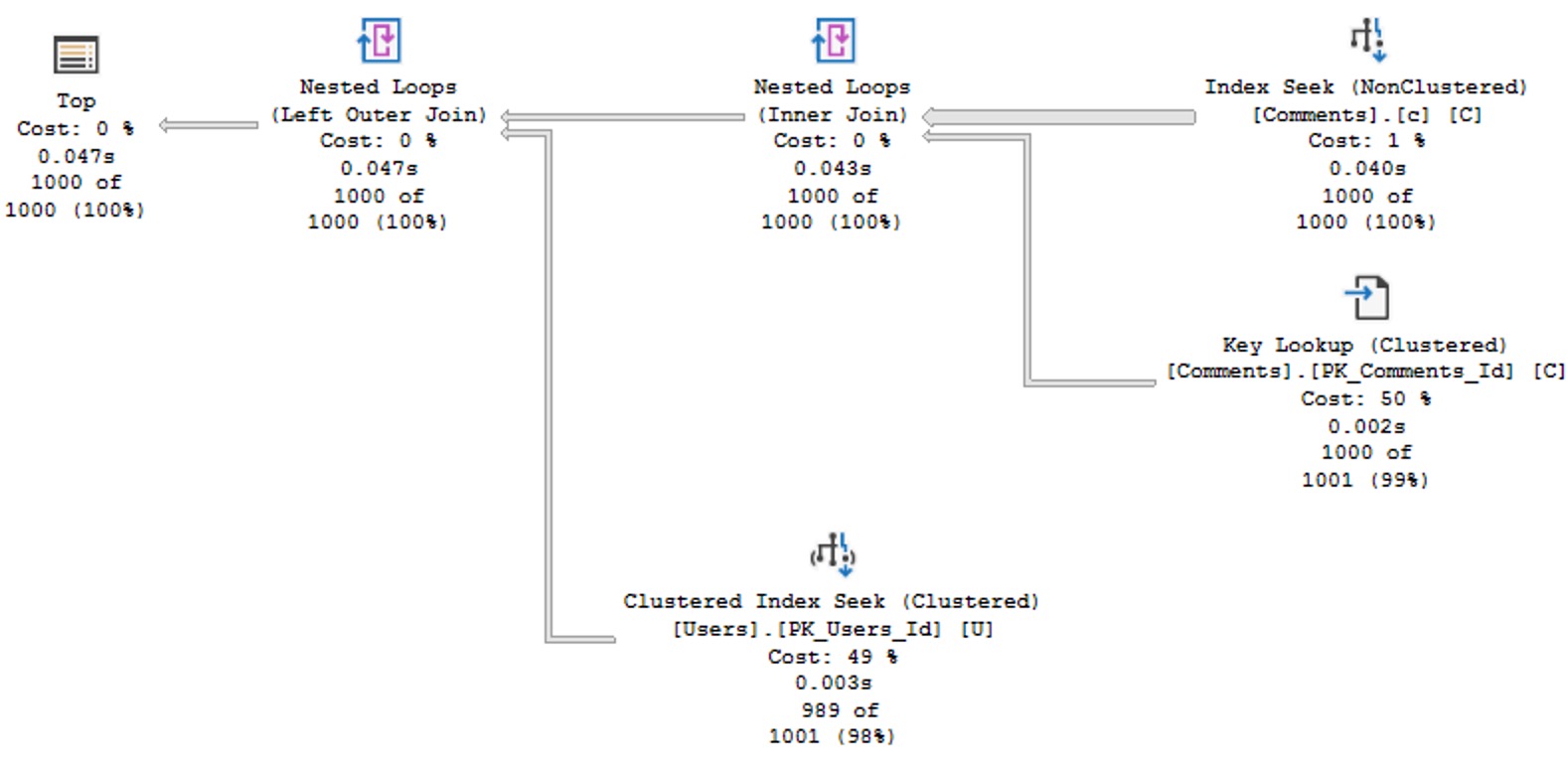

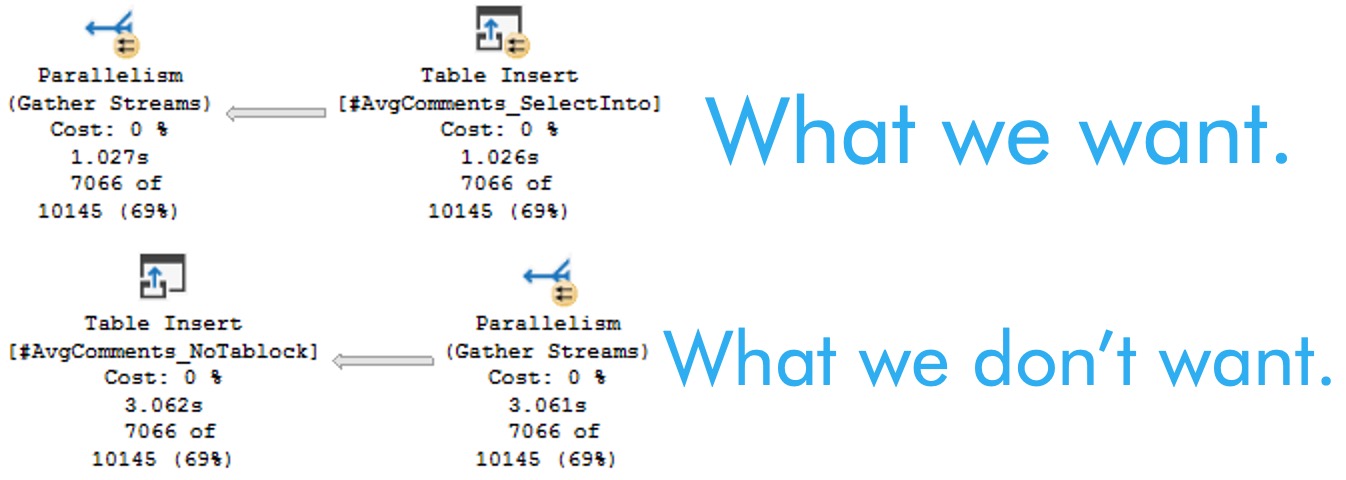

Works: INSERT, with TABLOCK

Rather than selecting directly into a table, here we’re gonna create the table and issue an insert statement with the tablock hint.

--This *will* get you a fully parallel insert, unless goofiness is involved.

CREATE TABLE

#AvgComments_Tablock

(

UserId int,

SumScore int

);

INSERT

#AvgComments_Tablock WITH (TABLOCK)

(

UserId,

SumScore

)

SELECT

C.UserId,

AvgScore =

SUM(C.Score)

FROM

dbo.Comments AS C

GROUP BY

C.UserId

HAVING

SUM(C.Score) > 200

DROP TABLE #AvgComments_Tablock

Doesn’t Work: INSERT, without TABLOCK

Without the tablock hint, this will get you the plan we don’t want, where the insert operator is outside the parallel zone.

--This will not get you a fully parallel insert

CREATE TABLE

#AvgComments_NoTablock

(

UserId int,

SumScore int

);

INSERT

#AvgComments_NoTablock

(

UserId,

SumScore

)

SELECT

C.UserId,

SumScore =

SUM(C.Score)

FROM

dbo.Comments AS C

GROUP BY

C.UserId

HAVING

SUM(C.Score) > 200;

DROP TABLE #AvgComments_NoTablock;

Doesn’t Work: A Whole Laundry List Of Stuff

Basically any one thing quoted out has the ability to deny the parallel insert that we’re after.

If you’re doing any of this stuff, like, bye.

--SET ROWCOUNT Any_Number;

--ALTER DATABASE StackOverflow2013

-- SET COMPATIBILITY_LEVEL = Anything_Less_Than_130;

CREATE TABLE

#AvgComments_BrokenTablock

(

--Id int IDENTITY,

UserId int,

SumScore int,

--INDEX c CLUSTERED(UserId)

--INDEX n NONCLUSTERED(UserId)

);

--Also, if there's a trigger or indexed view on the target table

--But that's not gonna be the case with #temp tables

INSERT

#AvgComments_BrokenTablock WITH (TABLOCK)

(

UserId,

SumScore

)

--The rules here are a little weird, so

--be prepared to see weird things if you use OUTPUT

--OUTPUT Inserted.*

--To the client or

--INTO dbo.some_table

--INTO @table_varible

SELECT

--Id = IDENTITY(bigint, 1, 1),

--dbo.A_Scalar_UDF_Not_Inlined_By_Froid

C.UserId,

SumScore =

SUM(C.Score)

FROM

dbo.Comments AS C

--Any reference to the table you're inserting into

--Not exists is just an easy example of that

--WHERE NOT EXISTS

--(

-- SELECT

-- 1/0

-- FROM #AvgComments_BrokenTablock AS A

-- WHERE A.UserId = C.UserId

--)

GROUP BY

C.UserId

HAVING

SUM(C.Score) > 200;

DROP TABLE #AvgComments_BrokenTablock;

Explainer

There are many good reasons to want a fully parallel insert, but you need to make sure that the bottleneck isn’t earlier in the plan.

If it is, you may not see the full performance gains from getting it.

In general, it’s a good strategy when building larger #temp tables, but at this point I add a tablock hint to every #temp table insert at first to test things out.

Thanks for reading!

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.