Row Goals: Part 3

Video Summary

In this video, I delve into some interesting query optimization techniques using Roll Goals in SQL Server, particularly focusing on how sub-queries can impact execution plans and performance. We explore scenarios where sub-queries lead to inefficient nested loops joins or top operations above scans, which can significantly slow down queries despite having good indexes available. By understanding these patterns, you’ll learn strategies to improve query performance, such as using COUNT() instead of EXISTS in certain conditions and leveraging appropriate join algorithms like hash joins when necessary. The video also covers how to identify and address these anti-patterns through practical examples and real-world optimizations, helping you avoid common pitfalls that can plague your SQL Server queries.

Full Transcript

All right, so welcome back to Roll Goals. We’re in part three now. All right. If the three surprises you, there’s a playlist with all the others. I don’t know how you would stumble upon the third part without having stumbled upon the others, but welcome to the internet. Welcome to YouTube, I guess. Anyway, or wherever you found this. It could be Twitter, LinkedIn, other websites. If you got here from my OnlyFans, sorry. This is going to be much, much more difficult. It’s going to be much more boring. I wish I had OnlyFans money. Anyway, one place that Roll Goals can be very useful for reshaping query execution plans is with sub-queries. Now, I want to say this query isn’t like terrible, terrible, but it is a good example. All right. So let’s look at the query. Let’s look at the execution plan for this query. And what we’re doing is we are selecting some stuff from the user’s table. where reputation is greater than or equal to 1000. And what we’re doing in here is saying, I want to find each user’s max last activity date. And then I want to figure out if that max last activity date is less than or equal to, I guess, Halloween of 2009. Right? And then we’re going to order by some stuff out here, I guess, for whatever reason. Seemed like a good idea at the time, right? How do most queries end up with an order by? Seemed like a good idea.

Seemed like a good idea at the time. So we’re going to run this. And we’ll get the execution plan back eventually. And we get our rows and aren’t we so happy to have gotten our rows, but we are not necessarily happy with the execution plan. So we have the user’s table down here. And we might find it quite odd that SQL Server did not start with the user’s table, maybe find just the, you know, whatever rows it cared about there, and then go find rows in the post table that matched to that because, you know, we like the, on the, on the, on the users table, we have the clustered primary key on ID. And we currently do have an index on the post table on owner user ID, I haven’t, I didn’t show the indexes that I created for these demos. But you’ll see, you’ll, you’ll understand what they are eventually, right, you’ll get it. So it’s a little bit strange that SQL Server chose to take this part of the execution plan, the select max, last activity date, where p dot owner user ID equals u dot ID. And it chose to, like formulate this aggregate first, and then like, you know, like scan the post table, aggregate the data, and then filter out the rows that we don’t care about, right? The filter here is going to be Halloween of 2009. That’s our, that’s our, that’s our filter predicate in the query plan.

So it chose to get all this stuff, like, you know, scan 17 million rows, aggregate them down to 1.4 million rows, I think, 1, 4, 3, 5, 0, 7, 2, yeah, 1.4 million rows, and then filter out the rows we don’t care about down to 7,320, right? So it’s a little weird that SQL Server chose to do this. Because it had alternatives.

Now, what, what’s one thing that you and I know about this, about a query that does this, right? Like even, even just like, if we focus on this part of the query, right? If we selected the max last activity date from posts, it could only ever return one single row.

If we selected the max last activity date for, for posts, for posts, even grouped by owner user ID, owner user ID would only ever produce one row. You can’t have multiple maxes. I mean, you, like, let’s, like, let’s say both max last activity dates were like today, right?

Like you had like two or three or four or five of them. It would still be one max, right? Like they wouldn’t have multiple maxes. Like we have one max value.

So this actually can only ever return one row per owner user ID, right? We have that pretty much guaranteed. So if we rewrite our query a little bit and we stick a top one in here, then all of a sudden SQL Server understands that too. Why it doesn’t understand that to begin with?

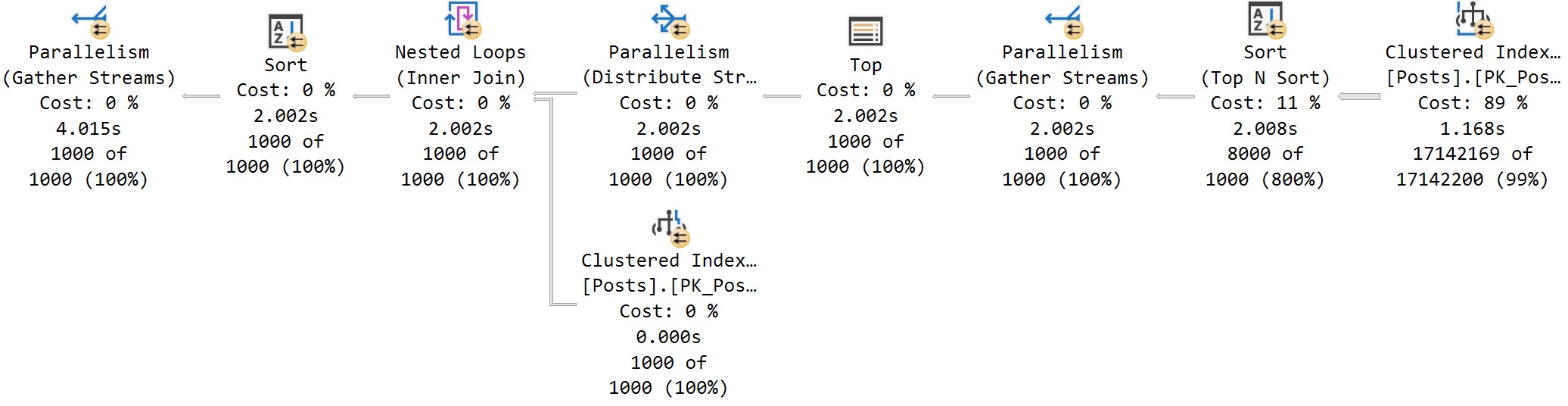

You know what? Maybe it does and the optimizer just said, oh, I don’t like the cost of that. I don’t, I don’t like the, I don’t like how much that costs. It’s too expensive. We’ve got a max last activity date at home, Erik Darling. But if we do this, remember the last query that we ran, that took about 3.7 seconds.

Okay. 3.7. That’s the, that’s the mark to beat. If we put a top one in our little sub query here and we rerun this, well, that was noticeably faster. This is 714 milliseconds, right?

And notice now here we have a top and then we have a stream aggregate and then we have another top. And it’s all rather funny looking, isn’t it? But this ends up being a faster execution plan because, you know, even, even though we, we find, we find more rows in the users table initially than we, than we got when we joined to the post table the first time.

It took three, like extra three seconds the way that we did it last time. Now we’re getting one aggregate per user ID that comes out. And this ends up being a lot faster than getting all the aggregate user IDs at once.

This is a trick that you can use in sub queries in the select list, sub queries in a where clause that have an aggregate like this. You can use this almost anywhere and you can get a completely different execution plan shape that might be more efficient. It’s especially more efficient if you have an index that you can seek into down here.

I have an index that leads with owner user ID. So when this query runs, we can seek to each owner user ID that we care about, right? And that’s a, that’s a very efficient thing to do.

Even though we do the last bit of filtering over here for Halloween of 2009, this query still finishes a full three seconds faster. If you want to see kind of a cute little trick, we can add in a sort of an empty group by, right? We have a group by with these empty parentheses here.

And what this will do, this will actually remove the stream aggregate. It’s kind of a funny little side effect, right? If we run this and we get the, look at the execution plan. I mean, it saves a little bit of time, right?

The last one was 700 milliseconds. This one’s about 550 milliseconds. But now we just have two tops hanging out next to each other, right? What are they looking for? I don’t know.

We’ll get to the bottom of it someday. Anyway, row goals tend to work well when data is relatively easy to find, either because it naturally occurs often or you have a reasonable set of indexes to search with. Sometimes, even if you have a reasonable set of indexes to search with, it’ll be slow, though.

Row goals can be slow. And this is a pattern that I end up troubleshooting quite a bit. So, almost everyone I work with has some store procedure that either has, like, maybe a loop that does stuff, maybe, like, some batch things going on.

Or they’ll have, like, a conditional, like, update or delete, usually. So, what they’ll do is they’ll start a query with something like this. And they’ll say, if this condition exists, go do this thing.

Like, so, in this example right here that we’re looking at, we’re joining post of votes. And we’re looking for where vote type ID equals one and post type ID equals one. The reason why we’re looking for this is because a post type ID of one, which is a question, should never have a vote type ID of one, which is when a user accepts an answer.

Vote type ID of one should only happen for a post type ID of two, because only an answer can be marked as the answer. A question can’t be marked as the answer. So, we’re going to run this query.

And what we’re going to see is that even with good indexes available, we are unable to find this data. Right? We don’t find any matches.

But SQL Server thinks that it’s going to find matches, because SQL Server trusts you. One of the sort of conditions of the optimizer is that if you ask for rows via a query, SQL Server assumes that those rows exist. SQL Server is like, well, you seem like a nice person.

Why would you lie to me? Why would you send me on a wild goose chase looking for data that doesn’t exist? Right? And so, we look at all this stuff, and we see that SQL Server estimated three rows, but we ended up with 3.7 million rows. And so, we went into a nested, so SQL Server set a row goal and said, I think I can find this condition with three rows.

But it didn’t, did it? Nah. Eh, did not.

We went into a nested loops join, because row goals work, like nested loops join, like small navigational queries, like with nested loops and stuff, work very well with row goals. Right? Because you just, oh, I’m going to find this.

I thought, I’ll find it. Yeah, you get it. I’ll get it on the next row. I promise. Right? So, we have this nested loops join here, because SQL Server was like, oh, well, I can find this really easily. Right?

I’m just going to seek to the three rows that I care about here, and I’m sure I’ll find something down here. But we don’t. Right? No rows come out of the post table that meet that. So, if you have a store procedure or something, or some other, you know, code that runs, that looks for data conditions that shouldn’t exist, and seeks to correct them, right? Or like delete them, or update them so that they’re the right thing, or just like flag them so you’re like, wait a minute, this is wrong.

We need to figure out what happened. Then you can run into this problem quite easily. So, if we look at the properties of the index seek, we will see something that we saw before, the estimate rows without row goal. Right?

So, SQL Server was like, if I don’t set this row goal, I’m going to have to go through 3.7 million rows. That’s no good. I think I can do this in three rows. Right?

Which is like, I guess, 2.8 something. Right? It’s like, I can do this and it gets rounded up to three. Okay. But guess what? None of that happens. And then at the end of all this, so it looks a little funny that we get a lot of rows here, and then zero rows here, and then zero rows here. But then we end up with one row here, but that is coming from this constant scan.

So, SQL Server is like, ah, well, I got to figure out if this exists or not. And I have to return something to say, did this exist or not exist? So, we get that.

One way that I fix this quite frequently is I make SQL Server count. Oh, oh, that’s tooltip hell. Ah, all right.

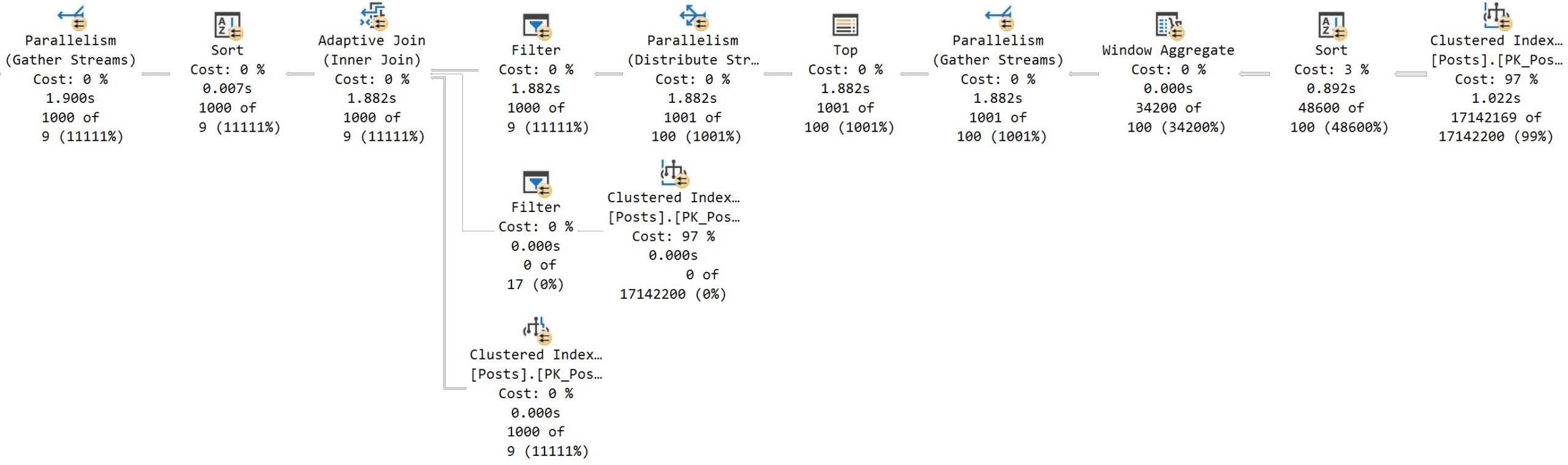

So, we can actually run this same if exist query, and we can just say, if this exists and it has a count greater than zero, now all of a sudden, SQL Server has to do something differently. SQL Server has to count everything and then filter out on that count. All right.

So, we went from, how long was that one? 6.464 seconds. All right. Now, let’s run this one where we make SQL Server count things. All right.

We make SQL Server do some extra work. That was noticeably faster, right? So, this is 1.2 seconds. And we get a much more sort of appropriate execution plan for this. So, this filter is where the halving on the count comes in. But, we notice that we get all 3.7 million rows here, and the whole thing just takes about 1.2 seconds total.

We get a nice parallel plan. It’s still nested loops, which, you know, you can think what you want about that choice. Maybe this would have been faster with a hash join.

I don’t know. There’s a lot of scanning around for stuff when you do that. But, you know, SQL Server is like, I’m going to get 3.7 million rows here, and then I’m going to probably get 3.7 million rows here, but I got none. All right.

So, maybe the nested loops join, not the best choice, but still a lot faster. All right. Not that crazy single serial nested loop row goal plan. All right. Avoid that.

Another big anti-pattern that I see quite a bit is a top above a scan. All right. So, top above scan, almost always a bad sign. When people talk about query plans, they often say, there’s no such thing as a bad plan.

There’s no such thing as a bad operator. Like, they’ll make all sorts of, you know, like, I know a lot about stuff, things. But there are certainly query plan patterns that I dislike seeing quite a bit.

And when I see these query plan patterns, I almost always say, hmm, I think you’re having a bad day because of this. So, this is an example of that. So, what I’m going to show you first is the estimated plan.

All right. And if you were, say, looking at this plan in query store or the plan cache or maybe some monitoring tool that correlates plans and stuff, you might say to yourself, look, how could this possibly take a long time? How could this, look at those tiny little lines.

What could, what could go wrong here? Are you insane? This was slow. This had to get blocked. The server must have been, like, shutting down when this query ran. No way this query took however long, right?

So, it looks harmless, but it is not harmless. All right. If we run this and we get the actual execution plan, we might be shocked. We might be very shocked.

We might be very dismayed. We might take an extra long look at our phone. We might start doom scrolling some social media or another. We might, I don’t know, we might call about our car’s extended warranty.

We might find all sorts of things to do in the time that these queries take to run. We might find all sorts of things to do in the time that it takes these things to finish, right? We can get a lot of things.

Now, one thing that I joke about quite a bit in my stuff is part of query tuning is a rows to seconds ratio. All right. How many rows per second should you be able to get back?

Well, we get back one row, but it takes us almost 24 seconds, right? That’s not a good time. And I mean, a lot of this query does still have rather thin lines to it, right? These are all tiny, thin lines.

That’s still a tiny, thin line. This one, you can barely, it’s barely a smidge. But look what happens, right? This is all 24 seconds. I mean, all 24 seconds is really like here. But we have this top above a scan.

And this top is just asking for one row over and over and over again until it finds something, right? So SQL Server said, I can do this in one row. Just one row.

I can knock this out. No problem. Clearly, that didn’t work out. It took 58 rows to knock this out. Now, what the top is doing is every time it gets a row, right? Because we didn’t find what we wanted, right?

We didn’t find that on the first row. It took on the 58th row we finally found this. So every time this ran, we did a scan of the votes table. How long that scan takes depends on where in the data what we’re looking for is, right?

So this one, for some of them, it probably would scan the whole table. For other ones, it would only scan part of the table. If we look at the properties over here, like, you know, we’ll see the actual number of rows read is, let’s see, 244689052.

That’s a nine-digit number of rows. Nine is a very hard one to do. I guess I’ll just put a thumb down. So nine-digit number of rows.

So the votes table itself is 52 million rows. But, you know, it’s, but 52 million times 58 is not that number. I don’t think it is.

Maybe it is. I don’t know. But I don’t think this, this, the votes table gets read in full for every one of those. Maybe, maybe, maybe not. Because sometimes, because we’re looking for not exists. So if we find that a row exists, we can exit out early, right? Because it’s a semi-join.

It either is there or it is not there. So sometimes it might find everything. Sometimes it might not. But, so we talked about this. So, of course, this would be fairly trivial to fix on our own.

We could just add an index on post ID. And then we would have a top above a seek. It would be much easier to do, let’s say, 57 seeks into an index on post ID. And figure out if a row is there or not.

Mostly not. And then it would, what do you call it? On the 58th row, we would get, we would figure out, we would find what we wanted. So, this pattern is fairly easy.

Like I said, fairly easy to spot in an, even in an estimated execution plan. Either from query store, the plan cache, your favorite monitoring tool. You may need to get an actual execution plan to know that there’s a real problem with it.

But, getting one just like that was a fun and exciting time. We had 24 seconds to just relax, stretch, yawn. I don’t know, scratch ourselves if we wanted to do that.

Anyway, enough about, enough about those kind of row goals. Let’s talk about some good kind of row goals. There are two different types of row goals, to my mind. There are top level row goals, which is like when you say select top, whatever.

And then there are sort of interquery row goals, right? Where you might select a top within a query like this. So, if we, let’s, let’s actually quote this out first, right?

So, we’re going to take this and we’re going to run this query. And we’re going to look at the execution plan. And we will see that we just have one top out here. And that SQL Server scans the users table.

And then it does a nested loops join. And then it hits the post table. And then it does another nested loops join. And it hits the comments table. This query is not disastrously slow. It’s about 1.3 seconds.

But look how the query plan shape changes when we put a top in here. We’re going to put that top there. And we’re going to run this.

And it takes, I mean, it’s still about 1.3 seconds. Like I said, this isn’t an example of fixing something with a row goal. This is just an example of top level versus inter-query row goals.

But now we have one top over here. And we have one top over here. And the shape of the query plan has changed a bit. So, now we start, we hit the users table out here.

But within this branch, we join posts to comments, right? So, things have changed in the shape of the query a little bit. So, in the next video, and I think the final video in this talk, we’re going to look at how we can use this sort of knowledge about how we can sort of get things to happen together in a query with row goals to fix some bad performance issues.

All right. Thank you for watching. I hope you enjoyed yourselves. I hope you learned something. And I will see you over in the next video.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.