I am a video

Thanks for watching!

Video Summary

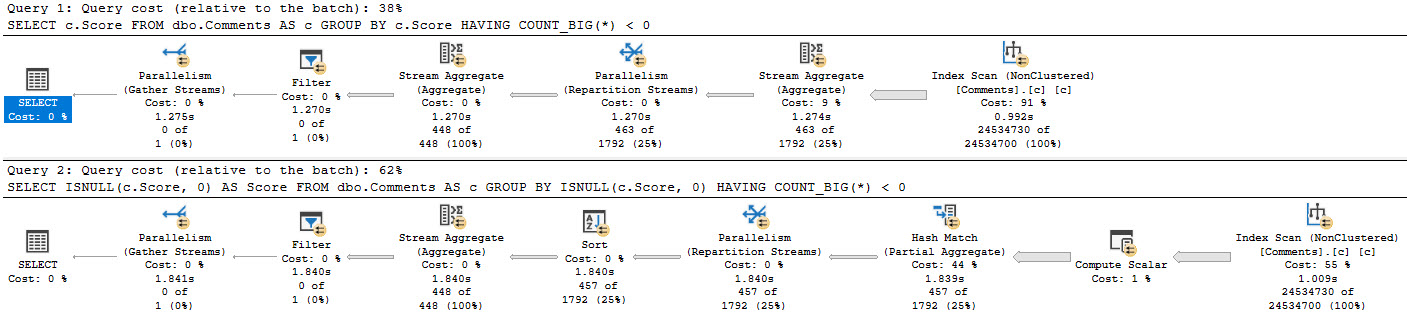

In this video, I dive into a real-world scenario where I used my SSMS tuning setup to optimize a query for one of my help reviews. The query in question was particularly slow and caused significant delays during live demos due to its execution time. After analyzing the execution plan, which took over 2 minutes to run, I decided to tackle the issue by rewriting the query to eliminate unnecessary join conditions and reduce redundant operations. This approach not only sped up the query significantly but also provided a clearer, more efficient execution path for SQL Server to follow. By sharing this experience, I hope to offer some practical insights into optimizing complex queries for smoother performance in real-world scenarios.

Full Transcript

Oh boy, oh my goodness. This is, this is quite a day, quite a Friday. Uh, Erik Darling here with Erik Darling Data. It would be Darling Data, but someone else, someone else got there first. I mean, for the domain. I mean, I still have the company. Whatever. I mean, no one else got here first. That would be awkward. Like, hostile takeover of my own company. Anyway. Uh, today we are drinking something red that my wife walked in and handed me, so I have no details. Uh, it smells nice though. And, uh, this video is, uh, going to build, oh geez, we’re building things, we’re building on things. There’s a pattern forming here. Uh, where we’re going to, uh, build a little bit on a video that I recorded, I think, last week. I don’t remember.

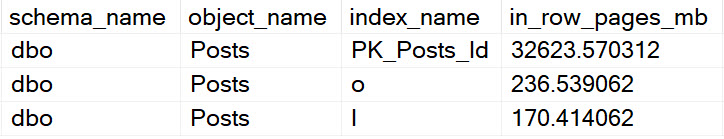

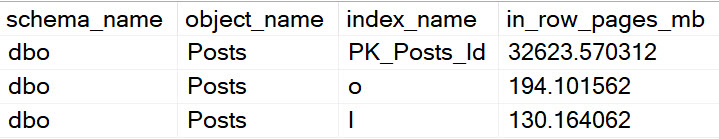

The weeks are still, time is still very strange to me. Uh, talking about, you know, how I set up SSMS to tune queries. And here’s an example of actually when I used it to do that same, to do that very thing. Same very thing. Uh, recently. To, uh, one of my help reviews. Uh, what’s up memory? And, uh, I don’t know why I named it that. I was, I guess because who is memory sounded stupid. Uh, so, yeah. Uh, so this, this, this help review will tell you, uh, what’s in, what’s in your buffer pool currently. And that’s helpful, I guess, because everyone wants to know what’s in their buffer pool constantly all the time.

Uh, I use it for demos that I do for things that, uh, I find helpful. So, I don’t know. Maybe you will too. I don’t know. If you don’t, go do something else with your life. I don’t care that much. Um, but, what I found is every time I wanted to use this thing on a, uh, on an instance with a good chunk of memory, it would be, it was slow. Terribly, dreadfully, painfully slow. If we look at the execution plan, uh, we see that it took about 2 minutes and 44 seconds to run.

And, uh, who could wait that long for anything? Uh, and it made, uh, certain demos very difficult to do live or even to, like, sort of do off-the-cuff recording. I would have to, like, pre-stage everything so that it would be set up here. So, we were just, like, like, sitting around waiting for this and awkwardly staring at the camera.

Or, like, doing a cut scene or a montage, lifting weights in my short shorts, running on a beach, karate-kicking seagulls, whatever. Uh, but, I mean, just, you know, kind of, like, digging through the execution plan. Again, very helpfully looking at the operator times under these things, right?

So, we no longer have to care about percentages because, I mean, not that they meant anything anyway. Shut up, car. But, it was helpful, it’s much more helpful to sort of, like, follow the yellow brick road of operator times to when things sort of drop off and pick up.

So, kind of framing this a little bit, we have this nested loops join that hits 2.15. And we have this concatenation that is at 0.001. And down here, we have this table spool, which takes a minute and 36 seconds.

Prior to that table spool, we do some nonsense where we hit this view, sysalloc units. And, uh, I’m mispronouncing that would have been deadly. Uh, and this one down here, sys.buffer descriptors.

And we sort of join those together. And then we spend a bunch of time in the spool and a bunch of time in this nested loops join. And this, and the spool, of course, is trying to save us some trouble with the nested loops join.

It’s like, oh, I don’t know how repetitive this stuff is going to be. I don’t know if I want to do everything down here over and over again. I’m going to use a spool to cache some information and reuse it if I can.

Uh, you know, I don’t know how helpful this spool actually was. We look at the properties of the spool. And we look at the, uh, the rebinds and the rewinds.

Well, you know, I guess, I guess it’s okay. Deal with it. But, uh, I don’t like this spool. And if you look at the nested loops join, and kind of zoom in on the tool tip, it’s all happening because of this very, very difficult predicate.

Uh, there’s a lot of and and or logic in this join condition. Let’s go zoom in on what the join condition looked like beforehand. Uh, and this is, you know, sort of many times looking at, uh, queries and looking at, uh, you know, things that people wrote up in the real world.

This is like the enemy of performance. This is not a good thing to do if you want queries to run quickly. Uh, especially, you know, bigger queries, more rows, things you have to worry about, performance. Ah, terrifying.

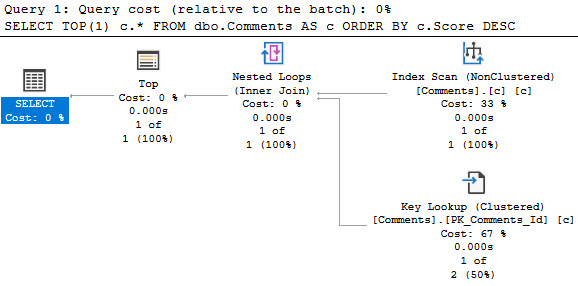

So, uh, you know, my initial reaction. And I guess we can just come over here. My initial reaction was just to try a query hint on here for no performance spools. Zero performance spools.

Which would get rid of, uh, actually gets rid of a few different kinds of spools in query plans. And get rid of row count spools. Uh, lazy table spools. And, uh, lazy index spools. It does not get rid of eager index spools.

Uh, the only thing that does that is a, uh, uh, turning off certain query rules via other things that we’re not talking about here. But, um, no, with the no performance spool hit, if we look at the query plan, we get a faster one. Still faster.

56 seconds. So we did better. It still takes a full minute. It’s still a lot of time to kill. We don’t feel like talking, really. It’s, uh, you know, kind of dragging along the query plan. You know, it’s kind of the same, same set of yuckiness.

Like, why does it take five seconds to seek into an index? Like, what, what are you doing? You know, who designs these things? Add an index.

Add a good index. Why is this? This is silly. Uh, and then this one, or three seconds. And, you know, like, like focusing in on the operator times. There’s a thing that’s like, we have big jumps, right? Not like, we don’t want like incremental ones. Incremental ones are boring.

What if that big jump to where we got up there? And, you know, I guess like a six second jump is pretty big, right? 5.7 seconds up to there. Okay. Well, you know, something to think about. Uh, but, you know, kind of, again, following this road, right?

So now we have that same join, I think. Uh, but we have, uh, like a bunch of stuff down here that just sort of takes a lot of, without the, without the spool, we have to do a lot of, the optimizer chooses a much different plan.

Whereas like a bunch of different stuff happens. Right. And, uh, I don’t know. I’m kind of got lost with what I was going to say about this one, but you know, it’s Friday and you’re not paying attention anyway.

So it doesn’t matter all that much. Does this do? No. Outer references. So this time we get a part, we get an apply nested loops where, uh, rather than the regular nested loops where everything happened at the join, where it was with the spool. Now we have, we get an apply nested loops where it takes, uh, the type and the, uh, owner ID and it pushes things down.

If I’m, if I’m looking at the right join, even at this point, I’m, I forget. Anyway, uh, that’s how I tune queries. I forget things. Uh, but yeah. So, uh, what I, what I, what I thought looking at it was, geez, this is a performance problem that I’ve solved for other people.

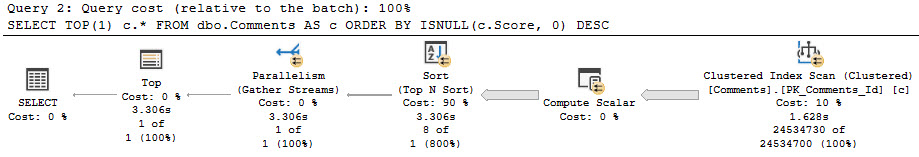

A lot of them, a whole bunch of times. Why don’t I solve it for myself for once? And so I rewrote the query and I’ll show you what I did in a minute. But now the execution plan, I, this finishes in about seven seconds.

So now everything that’s slow in here is purely Microsoft’s fault. There’s no like really, really big jumps in the query plan. Uh, you know, we still get, have no buffer descriptors was kind of annoying, but not the biggest deal in the world.

But anyway, let’s get onto the rewrite. Uh, all the stuff up here is superfluous. I mean, that’s just like display level stuff. Uh, what really ended up making a difference was, so the first time I was thinking about rewriting this, I thought that I would just do this thing in here and separate out each of the join conditions.

So in this one, I have one explicit join condition with no, uh, this space there. Ugh, I stink. Uh, and then another one with this explicit join condition. So no like and or in crap in here.

And, you know, looking there could cool. And at first I thought that I would do this and I would, uh, just preserve the original join logic, which up here is, uh, from buffer descriptors joined over to allocation units.

But, uh, I found that hitting that buffer descriptors view three times was painful. So I ended up pulling the buffer descriptors part out and just doing one join to it at the end once I had everything else, uh, done out here.

So now the query is just grabbing all this stuff, right? Having fun doing things, pulling out information. And then, uh, when we get outside of that sort of inner query where we, after we just, I just called that X because, uh, I don’t know, Mr. X.

And then, uh, we do our grouping and our ordering out here. And we do all the summing in whatnot up here with our case expressions here where they’re far less dangerous.

And of course, uh, repeating this query with three explicit joins is a lot faster. Now it’s a lot faster for reasons that I’ve talked about in other posts where, you know, if we’re writing a single purpose queries, it’s a lot easier for the optimizer to go, uh, to deal with that and to make an efficient query plan.

Um, I’ll, I’ll probably blog more about that in the future, but for now, that’s what you get because we’re about at the 10 minute mark and I’m about to get my Friday evening started. And, uh, I hope, well, I mean, by the time you see this, it’ll be Tuesday, but that’s your fault.

Anyway, thank you for watching.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.