Yep Nope

Video Summary

In this video, I delve into the intricacies of how thread pool weights do not appear in QueryStore, despite generating significant thread pool waits during execution. I walk through a detailed experiment using my custom `ThreadPooler` stored procedure and StackOverflow 2013 database on SQL Server 2019, running 150 concurrent queries to simulate high thread demand. Despite hitting the thread pool limits and experiencing contention, QueryStore fails to capture any thread pool weights, leading me to question the current implementation and documentation of the query store waitstats view. This video is a deep dive for my dear friend William at Microsoft, aimed at clarifying these details and potentially improving the user experience in the query store GUI.

Full Transcript

Erik Darling here with Erik Darling Data. Recently voted the consultant most likely to die in front of their computer by BearGut Magazine. So I’d like to thank the editors, the writers, and all the voters out there, all the BearGut heads out there who voted for me. I think what really pushed me over the edge was when I shared my Windows Update notifications where it said, we notice that you use your computer mostly between 5am and 9pm, so we’re not going to restart your computer. I think that’s what really sealed the deal for me, but, you know, just want to make sure, make sure they show everyone at BearGut Magazine my appreciation there. Love you guys and gals, all you fine people at BearGut Magazine. So, this video is for my dear friend William at Microsoft, and it is to hopefully clarify some details around how I think that thread pool weights do not actually end up in QueryStore. So, to kind of step through a couple things up front, this is QueryStore. I have recently purged all QueryStore data from here because I don’t, I want to start fresh. I had a little bit of a difficult time clearing that out. There were some really interesting things that I had created in there. That’s okay.

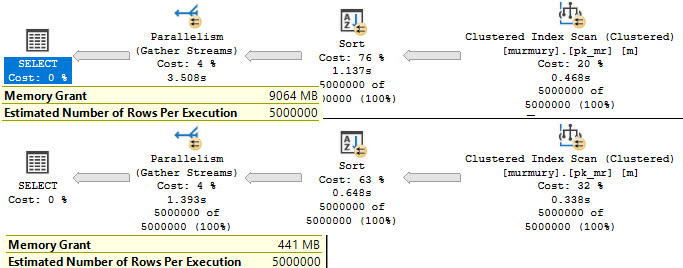

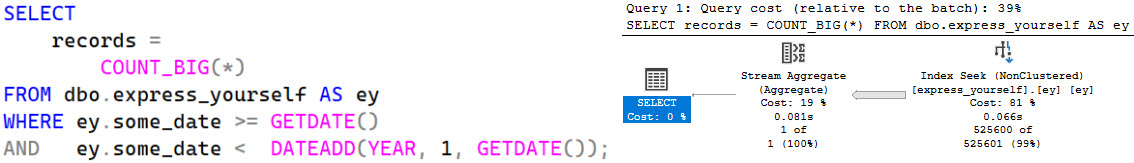

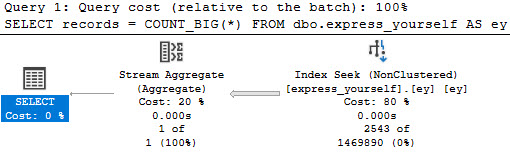

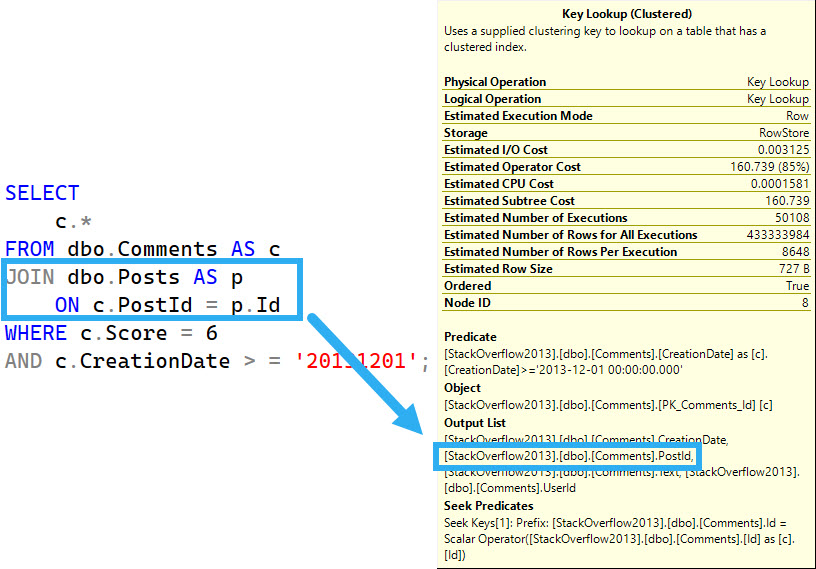

What was I going to do? Take a backup? Okay. So, that’s that. So, this is QueryStore. It’s on. The capture mode is on. The wait stats capture mode is on. Everything is good to go here. That’s StackOverflow 2013. The store procedure that I’m using to generate thread pool is a store procedure called ThreadPooler right here, and the query in it is written in kind of a funny, silly way in order to do a very specific thing. And that very specific thing is run at, come on, buddy, run at DOP8 and reserve eight threads per concurrent parallel branch, which brings us to 24 threads, because the best way to generate thread pool is to generate parallel queries and run a whole bunch of them at the same time.

In order to run a whole bunch of them at the same time, we’re going to be using Osterest, but we’ll talk about that in a minute. So, this is a freshly restarted SQL Server. I just restarted it before recording this because I want wait stats to be buck naked.

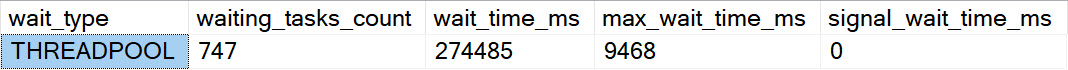

We have nine thread pool waits on the server. We have nine thread pool waits because the thread pool is an artifact of both of creating threads, which happens during SQL Server’s lifetime. It will create and trim the family of threads that run queries on here. So, when you create threads, you hit thread pool waits.

Not for a very long time. You can see the max wait time is one millisecond. And, you know, that’s pretty good. That’s pretty fast. Fast threads. It must be using lightweight pooling or something. It must have priority boost turned on to get threads created that quickly.

But we have these thread pool waits on the servers. We have a few of them. Not a lot, though. So, fine. In sys.queryStoreWeightStats, this should be completely empty because I haven’t run anything. WeightCategory2 is, of course, the one that would be responsible for tracking thread pool waits.

So, I have SPPressureDetector, my shameless plug for my store procedure here, which is going to tell us some information about CPU pressure on the server. I’m using the remote DAC so that I don’t get held up by the test that causes thread pool waits because there’s nothing more useless than sitting there waiting for DMV queries to finish.

So, my server is allowed… Oops, I didn’t do that very well. I didn’t frame that up very well. My server is allowed to use up to 744 threads. We have currently used 39 threads, and we have 665 threads available.

Nearly 666 threads available, which would be devil worship, which is the only way that consultants actually get business. So, I don’t know.

I don’t know what the correlation there is. Maybe I should worry about business if I only have 665 threads. But, yeah. So, this is the current setup for this. The way I’m going to be testing things is running my thread pooler procedure against the StackOverflow 2013 database.

Again, making sure that context is ultra clear here. This is the server we’re hitting, SQL Server 2019. And I’m going to be running 150 threads over 20 rounds of executions. So, 150 concurrent queries of thread pooler.

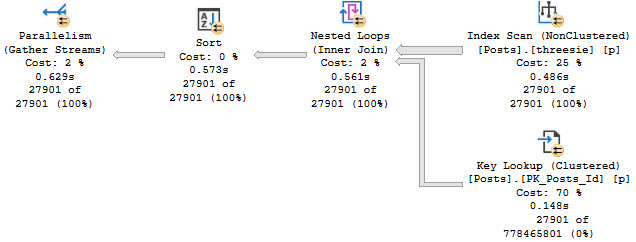

I was running this up higher before, but I was getting a lot of timeouts from Ostrust. And I don’t want timeouts. I want queries that hit thread pool, but complete.

I don’t want there to be failed executions getting into the mix and mucking things up. Because, even though I know Query Store tracks failed executions, this is a failure before anything even happens. So, there’s no way the Query Store would be able to say, oh, you were going to run that query, but you couldn’t get a thread.

So, there’s no way to put that together. So, I don’t want failures. I want contention, but not like timeout, disaster, the usual kind of thread pool stuff that I demo. So, let’s kick that off and get that working.

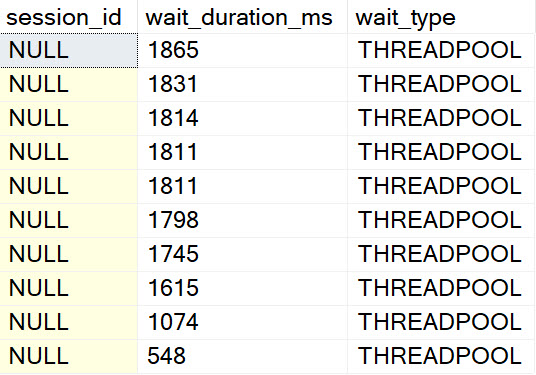

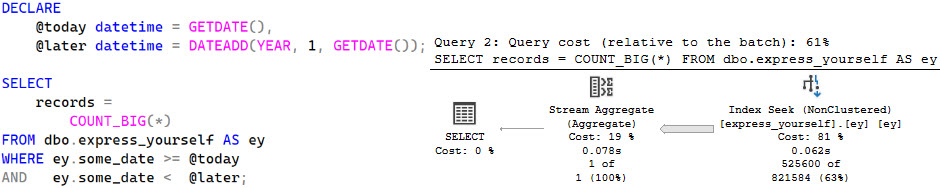

And we’re immediately going to see SP Pressure Detector telling us about thread pool weights. All right. So, you can see that we are the 704 threads that we are allowed to use. We have used 755 now.

We’re at negative 59. So, that’s a bad time. And we have 17 requests waiting for threads. And that’s going to be just about 17 down. Oh, wow. More than that. So, in the span of time between this query running and this query running, 10 extra threads got on a line waiting for CPU.

That’s fun. Or 10 extra tasks started waiting for the thread, rather. If we run this a couple few times during the course of things going, we’re going to see more thread pool weights here and there.

So, we’re going to see some. We’re going to see them come and go. And we’re going to see, if we look at Ostrass first, we’re not going to see anything timing out. When Ostrass has queries time out, it gets very, very loud about it.

There’s all sorts of messages and flooding and going on in there. But if we run this, you know, and we find some stuff with this thread pool, come on, baby, give me something. Anything.

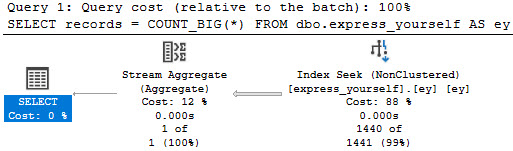

One row, one row. Screw you. Make me look bad in front of my friend William. What we’re going to see over here is something kind of interesting. You know, there’s a bunch of queries executing at DOP8 that have 24 worker threads. But then as we get down a little bit lower, we’ll see SQL Server starting to force some queries to use lower DOPs because we’re hitting these thread pool weights.

Right? So, we’ll see DOP7, DOP3, DOP1, and then, you know, whatever. So, we can tell that we’re hitting thread pool. But we can also tell that queries are at least finishing.

Right? Like, queries are getting through and eventually completing. So, there’s that. Great. Wonderful everything. We’re going to see as this thing starts executing that weights are going to, or thread pool weights are going to go up on the server.

Not constantly because we’re not constantly hitting thread pool anymore. You know, it was sort of inconsistent in the pressure detector stuff where, like, some queries were and some queries weren’t. But, you know, we see that from the, I think, nine waiting tasks that we had before, we’re up to 616 now.

So, we added a whole bunch of them in. You know, if we run this, we might see some more. I don’t know. I forget. So, whatever.

This thing has been dragging on long enough. Oh, there’s a whole bunch more. Woo-hoo! We hit more thread pool. We did our job. Let’s come back over here and look. Okay. Maybe it hasn’t quite made it to that DMV yet. Consistency is an eventual thing, I suppose.

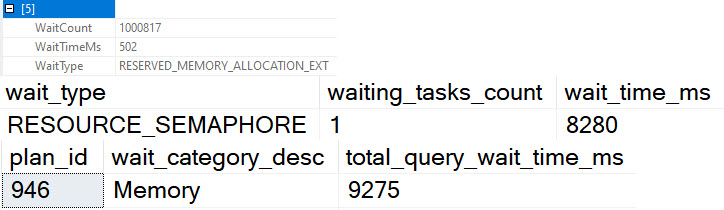

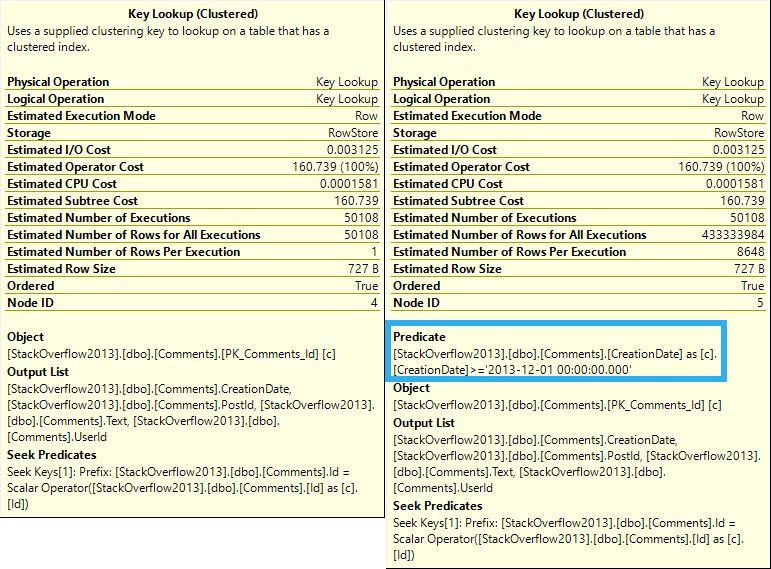

So, let’s kill that. And let’s poke around a little bit. We’ll see thread pool weights down here. We don’t see any weights in here.

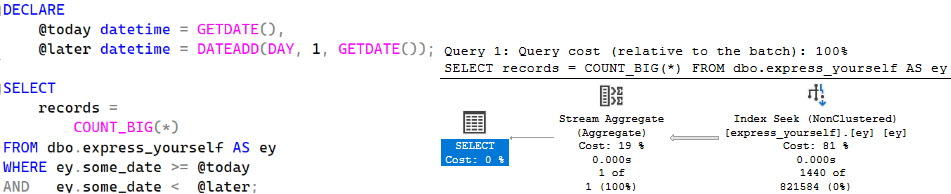

We don’t see any thread pool weights in query store. And if we run here, we’re going to see, you know, queries and stuff running. We see all sorts of other weights get populated, but nothing for that weight category of thread pool. So, that’s a bummer.

And, you know, we can probably see if we poke through query store. Maybe. I mean, I hope we do anyway. If we go look at query store. And we go look at top resource consuming queries.

And let’s view those. And let’s see here. There’s our top resource consuming query. And that’s from our stored procedure thread pooler, which had 293 executions. All right.

So, it did a lot of work. Did a lot of stuff. Executed a whole bunch of times. We did a bunch of thread pool. But we have no thread pool weights in here. All right. If you look at wait time, query ID 37, you know, there’s no wait time. So, I don’t know.

Whatever. I’m exhausted. Give me a break. If the query store GUI worked better, that would be probably more illustrative that we didn’t have any of that in there. So, I don’t know.

Maybe that’s a separate issue that I’ll open up. Fix the query store GUI. Get some UX in the query store GUI. Something like that. User experience points. Tell a user story.

Great user story with the GUI. Anyway. William. I hope you enjoyed this video. I hope it helps you in your quest to fix the documentation in the query store waitstats view. Anyone else watching this?

You shouldn’t have watched it. This video was only for my best friend William at Microsoft. So, you’re a disgusting individual. And I’ve lost all respect for you. All the non-Williams out there. How dare you intrude on this special time that we had together.

Anyway. It’s Friday. So are you. I’m going to leave now.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.