Annals

The longer your application has been around, the more developers and queries it has seen. There are a lot of ways the indexes could look, depending on how you approach performance issues with customers.

If you’re the type of shop that:

- Creates specific indexes for clients experiencing problems or

- Packages indexes into patches that everyone gets or

- Allows clients to manage indexes on their own

You could be dealing with a lot of stray indexes depending on which path you’ve chosen. If no one is going back and looking at how all those indexes get used, you could be missing a lot of performance optimizations.

Three Ways

Let’s talk about the three ways I most often see neglected index cleanup hurt performance while working with clients:

Buffer Pool Pollution

SQL Server doesn’t work with pages while they’re stored on disk. It’s architected to work with pages in memory, in an area called the buffer pool, and that’s that. The more data you have by way of rows stored in tables, and indexes that make copies of that data (except the clustered index, which is the base copy of your table), the more objects you have contending for space in the buffer pool.

There are other things that need space in memory too, like query memory grants we talked about earlier in the series. Between the buffer pool and query memory, there are three main types of memory contention you can see. In this post, though, what I want to get across is that all those index objects vie for space in the buffer pool when queries need to access them.

It doesn’t matter if an index hasn’t helped a query go faster in 10 years. If you need to load or modify data in the base table, the relevant index pages need to be read into memory for that to happen. If your data is larger than memory, or if you’re on an edition of SQL Server with a cap on the buffer pool, you could be hitting serious performance problems going out to disk all the time to fetch data into memory.
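If you want a rough look at which indexes are taking up buffer pool space right now, here’s a minimal sketch using sys.dm_os_buffer_descriptors. It assumes you run it in the database you care about and have VIEW SERVER STATE permission. It only counts in-row and row-overflow pages, so LOB data is left out, and the column aliases are just my own naming.

```sql
/* Sketch: buffer pool pages per index in the current database.
   Counts IN_ROW_DATA (1) and ROW_OVERFLOW_DATA (3) allocation units only. */
SELECT
    OBJECT_SCHEMA_NAME(p.object_id) AS schema_name,
    OBJECT_NAME(p.object_id) AS table_name,
    i.name AS index_name,           -- NULL for heaps
    COUNT_BIG(*) AS cached_pages,
    COUNT_BIG(*) * 8 / 1024 AS cached_mb  -- pages are 8 KB
FROM sys.dm_os_buffer_descriptors AS bd
JOIN sys.allocation_units AS au
    ON au.allocation_unit_id = bd.allocation_unit_id
JOIN sys.partitions AS p
    ON p.hobt_id = au.container_id
   AND au.type IN (1, 3)
JOIN sys.indexes AS i
    ON i.object_id = p.object_id
   AND i.index_id = p.index_id
WHERE bd.database_id = DB_ID()
  AND OBJECTPROPERTY(p.object_id, 'IsMSShipped') = 0
GROUP BY p.object_id, i.index_id, i.name
ORDER BY cached_pages DESC;
```

On servers with a lot of memory this DMV can take a while to scan, so it’s probably best run off-hours. The results are a point-in-time snapshot, not a history.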

How to tell if this is a problem you’re having: Lots of waiting on PAGEIOLATCH_XX

Transaction Logging

The transaction log is SQL Server’s primitive blockchain. It keeps track of all the changes that happen in your database so they can be rolled back or committed during a transaction. It doesn’t keep track of things like who did it, or other things that Change Tracking, Change Data Capture, or Auditing get for you.

It also doesn’t matter (for the most part) which recovery model you’re in. Aside from a narrow scope of minimally logged activities, like some bulk inserts and index rebuilds, everything gets fully logged. The big difference is who clears out the log. Under FULL and BULK_LOGGED, it’s you, by taking log backups. Under SIMPLE, it’s SQL Server, at checkpoints.

Just like with the buffer pool needing to read objects in from disk to make changes, the changes to those various objects need to be written to the transaction log, too. The larger those changes are, and the more objects get involved in those changes, the more you have to write to the log file.

There’s a whole layer of complication here that is way more than I can cover in this post — entire books are written about it — but the idea I want you to understand is that SQL Server is a good dog, and it’ll keep all your indexes up to date, whether queries use them to go faster or not.
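To get a feel for how much modification work each index is absorbing, one option is sys.dm_db_index_operational_stats. This is a sketch, not a measurement of log bytes: leaf-level change counts are only a rough proxy for how much logging an index generates. The counters are cumulative and can reset when the server restarts or the object’s metadata leaves the cache. The aliases are my own.

```sql
/* Sketch: leaf-level modifications per index in the current database.
   Higher numbers mean more changes SQL Server had to make (and log)
   to keep that index up to date. */
SELECT
    OBJECT_SCHEMA_NAME(ios.object_id) AS schema_name,
    OBJECT_NAME(ios.object_id) AS table_name,
    i.name AS index_name,
    SUM(ios.leaf_insert_count) AS leaf_inserts,
    SUM(ios.leaf_update_count) AS leaf_updates,
    -- deleted rows usually show up as ghost records first
    SUM(ios.leaf_delete_count + ios.leaf_ghost_count) AS leaf_deletes
FROM sys.dm_db_index_operational_stats(DB_ID(), NULL, NULL, NULL) AS ios
JOIN sys.indexes AS i
    ON i.object_id = ios.object_id
   AND i.index_id = ios.index_id
WHERE OBJECTPROPERTY(ios.object_id, 'IsMSShipped') = 0
GROUP BY ios.object_id, i.index_id, i.name
ORDER BY SUM(ios.leaf_insert_count
           + ios.leaf_update_count
           + ios.leaf_delete_count
           + ios.leaf_ghost_count) DESC;
```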

How to tell if this is a problem you’re having: Lots of waiting on WRITELOG

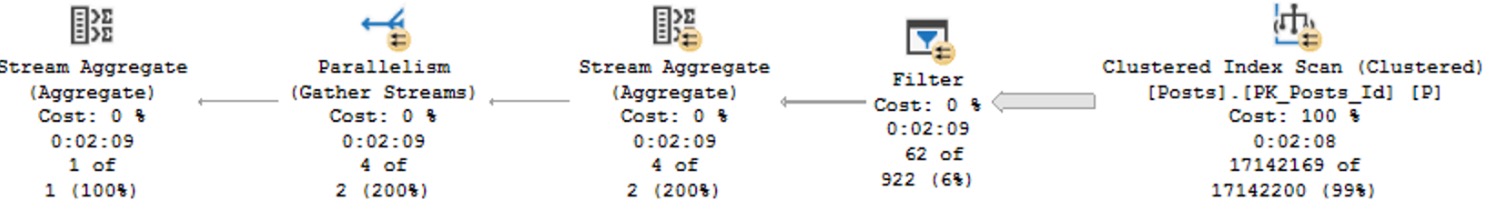

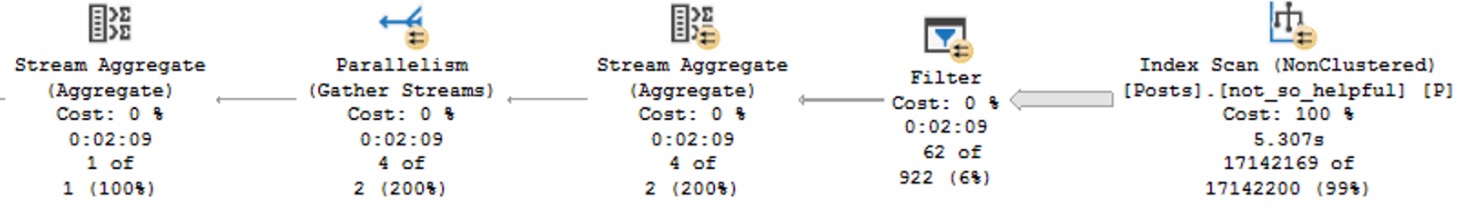

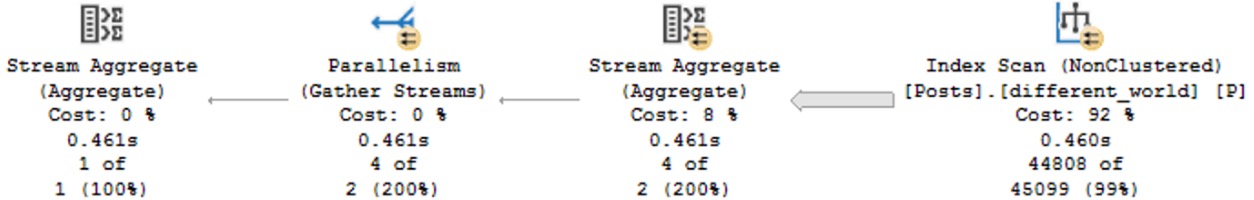

Lock Escalation

The more indexes you have, the more locking you’ll likely have to do in order to complete a write. For inserts and deletes, you’ll have to hit every index (unless they’re filtered to not include the data you’re modifying). For updates, you’ll only have to lock indexes that have columns being changed in them. The story gets a little more complicated under other circumstances where things like foreign keys, indexed views, and key lookups get involved, but for now let’s get the basics under control.

When you start making changes to a table, SQL Server has a few different strategies:

- Row locks, with a Seek plan

- Page locks, with a Scan plan

- Object locks, with a Scan plan

Because SQL Server has a limited amount of memory for managing locks, it’ll attempt to make the most of it by taking a bunch of row or page locks and converting them to object locks. The threshold for that conversion is around the 5,000 lock mark. The number of indexes you have, and whether the plan is parallel, will contribute to hitting that threshold.
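If you’re curious which indexes have been involved in lock escalation, sys.dm_db_index_operational_stats tracks attempts and successes. Here’s a minimal sketch, assuming you run it in the database in question. The counters are cumulative since the metadata was last cached, and the aliases are mine.

```sql
/* Sketch: lock escalation attempts and successes per index,
   along with time spent waiting on row and page locks. */
SELECT
    OBJECT_SCHEMA_NAME(ios.object_id) AS schema_name,
    OBJECT_NAME(ios.object_id) AS table_name,
    i.name AS index_name,
    SUM(ios.index_lock_promotion_attempt_count) AS escalation_attempts,
    SUM(ios.index_lock_promotion_count) AS escalations,
    SUM(ios.row_lock_wait_in_ms) AS row_lock_wait_ms,
    SUM(ios.page_lock_wait_in_ms) AS page_lock_wait_ms
FROM sys.dm_db_index_operational_stats(DB_ID(), NULL, NULL, NULL) AS ios
JOIN sys.indexes AS i
    ON i.object_id = ios.object_id
   AND i.index_id = ios.index_id
WHERE OBJECTPROPERTY(ios.object_id, 'IsMSShipped') = 0
GROUP BY ios.object_id, i.index_id, i.name
HAVING SUM(ios.index_lock_promotion_attempt_count) > 0
ORDER BY escalations DESC;
```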

How to tell if this is a problem you’re having: Lots of waiting on LCK_M_XX
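All three of the wait types called out in this post can be checked in one pass against sys.dm_os_wait_stats. This is only a sketch: the numbers are cumulative since the last restart (or since the stats were cleared), so they need to be weighed against uptime and against your other waits before drawing conclusions.

```sql
/* Sketch: server-level totals for the waits tied to
   buffer pool reads, log writes, and locking. */
SELECT
    ws.wait_type,
    ws.waiting_tasks_count,
    ws.wait_time_ms,
    ws.signal_wait_time_ms
FROM sys.dm_os_wait_stats AS ws
WHERE ws.wait_type LIKE N'PAGEIOLATCH[_]%'  -- reading pages from disk
   OR ws.wait_type = N'WRITELOG'            -- flushing log records
   OR ws.wait_type LIKE N'LCK[_]%'          -- waiting on locks
ORDER BY ws.wait_time_ms DESC;
```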

Sprung Cleaner

To find indexes that can be removed because they’re not used, a good, free tool is sp_BlitzIndex. It’s part of an open source project that I’ve spent a lot of time on. It’ll warn you about indexes that are unused by read queries, and even ones that have a really lopsided ratio of writes to reads.
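A basic call looks something like the sketch below. It assumes sp_BlitzIndex is already installed (commonly in master), and `YourDatabase` is a placeholder name, not something from this post.

```sql
/* Sketch: run sp_BlitzIndex against one database.
   N'YourDatabase' is a placeholder; swap in your own database name. */
EXEC dbo.sp_BlitzIndex
    @DatabaseName = N'YourDatabase',
    @Mode = 0;  -- 0 is the default diagnostic mode
```

Check the project’s documentation for the other modes and parameters available in the version you have installed.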

Those are a great place to start your cleanup efforts, because they’re relatively low-risk changes. If you have indexes that are sitting around taking hits from modification queries and not helping read queries go faster, they’re part of the problem, not part of the solution.
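If you’d rather eyeball the raw numbers yourself, here’s a minimal sketch against sys.dm_db_index_usage_stats that lists nonclustered indexes with writes but no reads. It assumes the server has been up long enough to cover a full business cycle, since usage stats reset on restart. It skips unique indexes, because those may be enforcing constraints even if nothing reads from them.

```sql
/* Sketch: nonclustered, non-unique indexes in the current database
   with no recorded reads since usage stats were last reset. */
SELECT
    OBJECT_SCHEMA_NAME(i.object_id) AS schema_name,
    OBJECT_NAME(i.object_id) AS table_name,
    i.name AS index_name,
    ISNULL(us.user_seeks + us.user_scans + us.user_lookups, 0) AS user_reads,
    ISNULL(us.user_updates, 0) AS user_writes
FROM sys.indexes AS i
LEFT JOIN sys.dm_db_index_usage_stats AS us
    ON us.object_id = i.object_id
   AND us.index_id = i.index_id
   AND us.database_id = DB_ID()
WHERE OBJECTPROPERTY(i.object_id, 'IsUserTable') = 1
  AND i.type = 2        -- nonclustered rowstore
  AND i.is_unique = 0   -- leave constraint-enforcing indexes alone
  AND ISNULL(us.user_seeks + us.user_scans + us.user_lookups, 0) = 0
ORDER BY user_writes DESC;
```

Treat the output as a list of candidates to investigate, not a drop list.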

Thanks for reading!
