Compiles! Recompiles! Stored Procedures! Temp Tables! Extended Events! In SQL Server

Thanks for watching!

Video Summary

In this video, I delve into the intricacies of stored procedures, temp tables, compiles, recompiles, and query hints—essentially packing as many fascinating topics as a human hand can hold. Using `spHumanEvents`, an extended events wrapper I developed to simplify tracking for SQL Server users, we explore how to monitor and manage these processes effectively. By running a series of queries with different hints in a loop, we uncover the nuances between recompiles and compiles, highlighting the potential benefits of using the `KEEP PLAN` hint to stabilize query plans across multiple executions. This exploration not only sheds light on common issues but also offers practical insights for optimizing stored procedures that rely heavily on temp tables.

Full Transcript

Alright, Erik Darling here with Darling Data and this happy, friendly, amazingly kind video, this community spirited video, we’re going to talk about a cacophony of fascinating things. Namely, stored procedures, temp tables, compiles, recompiles, and query hints. It’s as many things as I can fit on a hand. If I were blessed by any of the gods with additional fingers, we would talk about more things today, but I’m all out of fingers, so you get what you get. In order to track compiles and recompiles, we’re actually going to do, we’re going to look at this three different ways. One of those ways is not going to be query store because query store is somewhat uninteresting in this way. We’re going to look at, we’re going to use spHumanEvents in two different ways. We have one event up here to track compiles. We have one event up here to track recompiles. Alright, and then we have this query plan clash, plan cache query that we’re going to use to track the same thing in the plan cache. Cool, great, wonderful. What are we tracking exactly? Well, it’s really better if I start this loop off and then we talk through exactly which specific things we’re dealing with here.

So what I need to do is in very quick succession. Not too quick. I gave myself a little room to wiggle. I do like having room to wiggle. I’m going to start this off here. spHumanEvents, that’s my store procedure that I use to make extended events a little bit easier on the average SQL Server user because Lord knows Microsoft didn’t make it easy. And as you all know, I love and care about you far more than Microsoft ever will. They’re a nameless, faceless, conglomerate bunch of people who say no. Me, I say yes. Darling Data, we say yes to you.

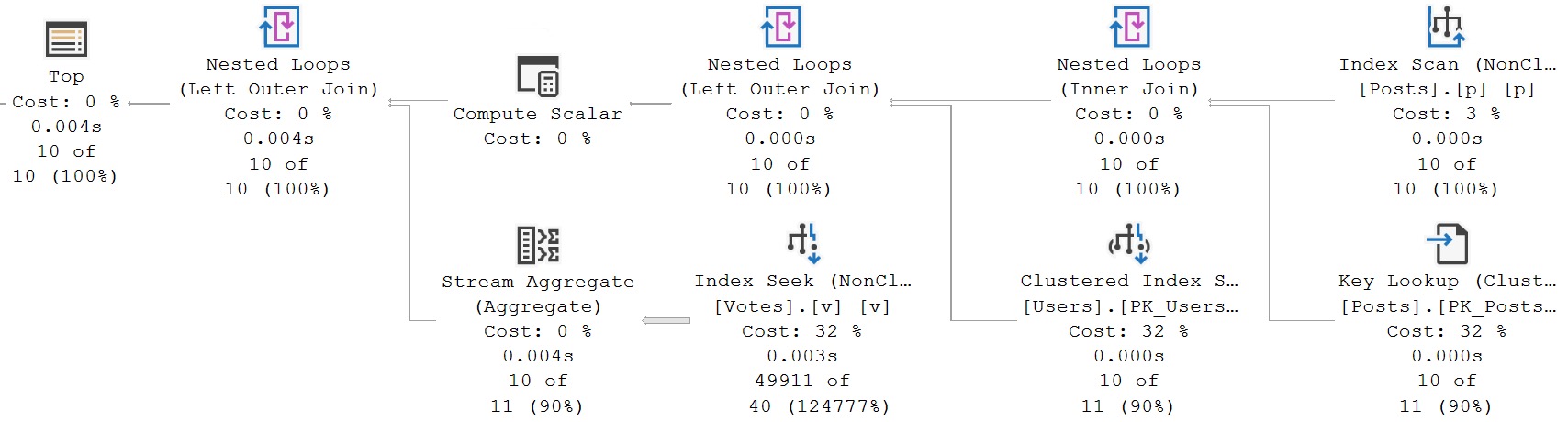

So I’ve got a store procedure and this is the very store procedure that’s running in the loop that’s executing right now, if you would believe that. And what this store procedure does is it takes some parameters, obviously. That’s what store procedures typically do. They take a parameter. It’s like we here at Darling Data will take a drink. Store procedures will take a parameter. We create a table called filtered posts. We insert into that table the results of this query where our wonderful parameters are used, where SQL Server drinks greedily from our parameters. And then we run the same parameters. And then we run the same query, essentially, in four different ways. So the first way that we run this query is with no hints whatsoever, just looking for where the score column in our temp table is greater than zero.

And notice that I’m doing the greater than select zero thing here. The reason that I’m doing that is to avoid simple parameterization, not because it actually interferes with this demo, but just because a lot of people see it and say, oh, that looks weird. What is that? And then everything gets derailed, hopelessly derailed. We have another iteration of the same query, except this one has a recompile hint on it. So this one we’re explicitly saying, SQL Server, please recompile this plan. Tear down that old plan. And then we have two more queries that we run. One of them uses the keep plan hint right here. And I wanted to use this to see if keep plan would get us anything in this situation.

Not to jump too far ahead, but keep plan really seems to work better for like actual tables and like an actual schema, not like 10 tables. And then we have keep fixed plan down here. All right. Wondrous, wonderful, amazing. You believe that I do this for free for your benefit. You believe that the things that I do for you, all the typing that I do for you, brothers and sisters. And so, well, I mean, there’s our loop, right? Our loop is finished. And if we go get the results from this thing back and we look at what happened, we can see this loop ran 21 times with post type ID one.

And then another 21 times with post type ID two for a total of 42 trips to that store procedure, right? What this loop does is what as long as post type is less than three, and that’s not a weird heart. I don’t play those games. I’m married to the data. I can’t go making hearts at every single thing in SSMS.

And then while end date is less than this, we do this and we increment our dates by three months at a go. And then when we finally reach our limit here, then we reset our dates and we set post type ID one higher. And only on that trip around do we raise our loop counter.

So 21 times for post type ID one, 21 times for post type ID two, which is a total of 42 trips to that store procedure. I did not have 42 drinks. You are watching me. There’s no way I could have had 42 drinks during the execution of that store procedure.

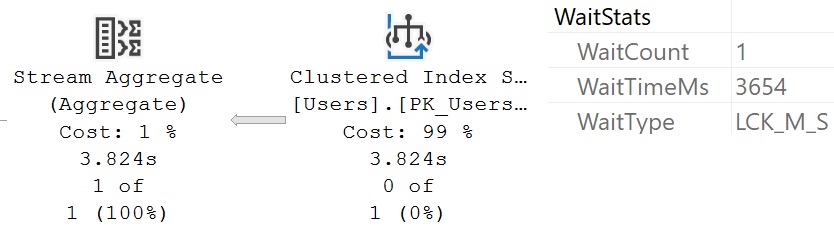

Though Lord knows sometimes I wish I could. If we come over here and we look at the compiles, we are going to see a whole bunch of statistics gathering in tempDB. And if we expand this a bit, we’ll see that this is all coming from the filtered post table in our store procedure.

All right. This is all compiling filtered post statistics. And we have 35 rows of that, I guess. And we have most of these just have one compile, but this one at the top has eight compiles.

I’m not sure why this one thinks it’s so special that it can go and compile eight times in total, but it went and did it anyway. And if we look at the results of the recompile extended event that we had in here, look at what we’re going to see. This is actually interesting to me.

I found this part fascinating. Utterly fascinating. You might be wondering, Eric, why did you find this utterly fascinating? Well, let’s look at what we have some recompile causes in here, right?

For two of them, we have statistics changed. For one of them, you can probably guess which one this is. We have option recompile requested, right?

Which is great. Love the capitalization there. Capital O option, all lowercase recompile. And for the two down here, we have deferred compile. All right.

All right. Cool. Those all happened in Stack Overflow 2013, which is exactly where we executed our store procedure. They were all from the store procedure that we’re talking about. But if we come over here just a wee little bit, and I have no idea why one of them has that little extra less space in it.

A little strange. And we’ve got to do a little bit of work to get this expanded out to where we want it. Because what we want to see are which compile, which query text with which hints or no lack of hints, ended up with all these compiles and all this stuff going on.

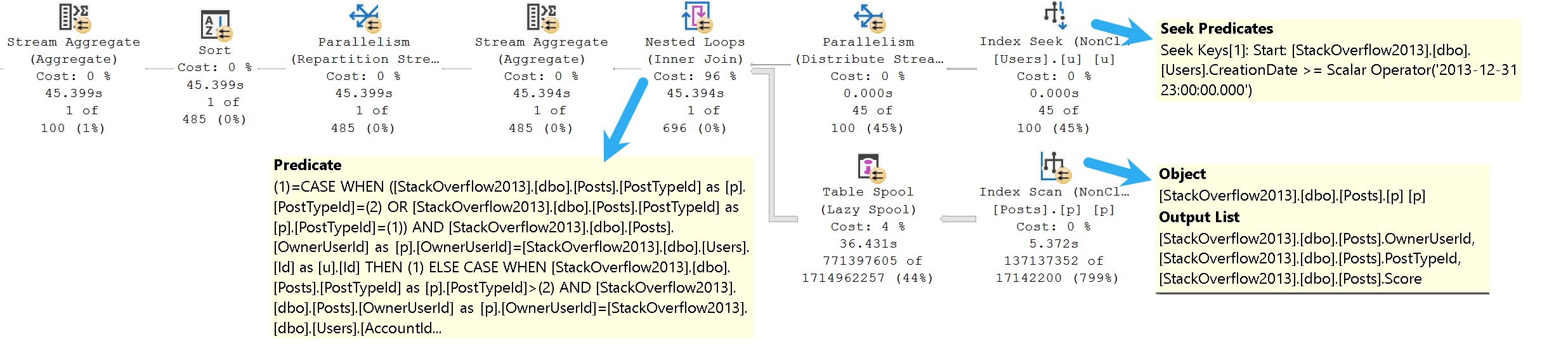

And now let’s slide over this way. Since we’ve got this all framed up nicely here, we have our first query, which is no hints, which had 42 total recompiles and almost 10 seconds of recompile CPU, on average taking 232 milliseconds to recompile this query.

All right. That’s a fairly crazy thing, right? And remember, the recompile cause, and this is statistics changed.

Down here, where we have our keep plan hint, we still ended up with 42 total recompiles, but we just used far less recompile CPU on that. Almost nothing there.

This is pretty wild that just like a regular query requested that required, not requested, required. Didn’t just say, can I have like 10 seconds of recompile CPU? And SQL Server was like, yeah, I guess.

It was just like, no, I need to do this. If I don’t get this, I’m going to go crazy. And down here at the very bottom, we have our two other queries, which only recompiled once, which probably means they just kind of compiled once or something, I guess.

I’m not entirely clear on all of the inner workings of how Extended Events tracks these things. Nor do I have the moxie to care too much about all of that. So, you know, that’s fun.

Sorry if you don’t like it. But there’s only so much time in a day, and there’s only so much free I can give. So these two here, the keep fixed plan did like no work, and the insert select into the temp table did absolutely no work. Now, if we come over here and we run our plan cache query, we’re going to see nearly the same thing, except it’s going to be a little misleading.

I mean, sure, these three up here have crazy high plan generation numbers on them, probably much higher than they should. Well, I’m not going to say much higher than they should be. Just like 129.

We only executed the thing 42 times. I don’t know. I don’t know. Pretty wacky stuff.

Pretty wacky stuff. But what’s misleading is that these queries all look like they only executed once. So that’s because of all the compile, recompile, stats change, go get stats, go make a new plan, kick out the old plan, we don’t need it anymore, get the new plan in here. And all that stuff, which is, I don’t know, can be aggravating.

To be perfectly honest with you, that stuff can be quite aggravating. And the two queries down at the bottom, this is just kind of to show you that the plan cache does agree mostly with the extended event recompile stuff that we saw, where these queries both have 42 executions, right?

And they did very little on the recompile tip. So the moral of the story here kind of is, if you have stored procedures, and those stored procedures feed data into temp tables, and you’re having trouble with, like, high recompilations, and you want to, and you, like, you’re, like, confident and comfortable in the query plan that you’re getting across all those executions, which can be, granted, tough to figure out if you’re constantly recompiling.

But if you’re getting the same plan over and over again, right, and you want to reduce all that compile overhead, right? You have stored procedures that execute a ton, they use temp tables, you put, like, a relatively stable amount of data in those temp tables, and you’re just, like, SQL Server, just use a decent plan, just reuse it, I don’t care, then the keep fixed plan hint can be pretty useful to tamp down the recompiles and potentially take a lot of the stress and strain out of all the many, many executions of that stored procedure.

Will that be perfect in every case? Of course not. But we live in a complex, data-driven database, data face, data world, and it’s up to us to decide when it’s appropriate to do these things. It’s up to us to figure out when these things are causing problems and come up with a reasonable fix for them.

In this case, I would recommend for the query in that stored procedure that we stick with the keep fixed plan hint because that would tamp down on the recompilations, and it would give us a nice stable execution plan that not only would we be able to reuse, right, but we would be able to track exactly how that plan is performing over time.

If we find that that plan is not performing so well, we could, you know, recompile the stored procedure and let SQL Server come up with a new plan the next time, and maybe that one will be better. We always, we pray that SQL Server will make good choices, don’t we?

Lord knows we don’t. It’s up to SQL Server to make these good choices. So, this is just kind of a fun little demo that I put up with while investigating something else entirely, but I thought it was worth sharing.

If you’re not familiar with SP Human Events, the link to my GitHub repo where it lives will be in the show notes, and you’ll be able to go and install it in all of your servers and run it in all its glory and be able to track down these interesting problems the same way I do, this professional stored procedure-y way.

So, anyway, that’s good for today, I think. I hope you enjoyed learning about compiles, recompiles, temp tables, stored procedures, and extended events. I don’t know if that was the same five from before, but again, we’ve hit the magic number, and this is also how I wave goodbye.

So, if you enjoyed this video, I do like thumbs-ups and… Family-friendly thumbs-ups. Do not stick our thumbs where they don’t belong.

I like comments, and I like subscribers. So, if you like this and you like me, then subscribe to the channel and you get more of me and more of this. And it’s kind of a win-win for everybody, because then I get what I like, you get what you like, and we’re all the happier for it.

We’re all better off, aren’t we? So, as usual, thank you for watching. I hope you learned something. I hope you enjoyed yourselves. And I will see you in another video, I guess probably tomorrow, when I’ve thought of something else to talk about, which amazingly I always do.

So, thank you for watching.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.