How To Evaluate Index Effectiveness While Tuning SQL Server Queries

Video Summary

In this video, I discuss a practical approach to evaluating the effectiveness of your indexes when tuning queries, using specific examples from the Stack Overflow database. I walk through running several queries that progressively narrow down the result set based on score and date filters, demonstrating how index seeks can sometimes be less efficient than expected. By leveraging trace flag 9130, we explore how residual predicates are applied later in the query execution plan, highlighting where most of the filtering actually occurs. This insight is crucial for making informed decisions about indexing strategies, especially when dealing with range predicates and varying data selectivity.

Full Transcript

Erik Darling here with Darling Data. One thing that I’ve noticed recording these videos, I don’t know if it’s the professional grade studio lighting that I use here, but I’m getting a lot more gray in the mustache, particularly right here, but over here too. This I was used to a lot of gray in, but the other stuff is becoming new to me. I don’t think that I’m quite ready to start investing in various dyes, other ablutions, but there is a sign, isn’t there? Anyway, today’s video, long awaited, much anticipated video, I want to talk about how you, the professional query tuner that you are, can you can sort of start to figure out how effective your indexes are for the query that you’re tuning.

Now, there are all sorts of things, other things, all sorts of other query inhabitants that might inform your indexing decisions. You know, like, quality predicates, joins, presentation, order by, top order by, offset fetch, windowing functions, partition by, order by, we’ve talked a bunch about those sort of recently. All sorts of things that you might consider when creating indexes.

But one thing that a lot of folks don’t consider when creating indexes is just how effective those indexes are for helping them, for helping their queries find the data that they’re looking for. So, what we’re going to do here is run a few queries.

And now, these queries are sort of, these are not slow queries, first off. These queries are not slow. This is just a simple exercise to help you determine how effective the filtering your queries are doing in your indexes are.

Now, a couple of things about the predicates in here. And these queries are very specifically engineered to show you sort of like a worst case scenario for indexing choices. So, this last activity date column is a little funny.

So, when the Stack Overflow databases originally got chopped down to different years, like the Stack Overflow 2010 database, the Stack Overflow 2013 databases. I don’t know how many other versions of the database exist out there in the world.

I have a columnstore version of the database. I don’t think anyone has ever used, but it’s out there. I pay like 25 bucks a month to host a torrent for it.

So, that’s fun. But, so, when the databases got chopped down, we really just cut off the creation dates at a certain point. There are other date columns that live in the 2010, 2013, and probably other versions of the database, that have a much wider range of dates in them.

So, like the last activity date on some of the posts in the 2013 database is way beyond 2013, which is kind of a funny data anomaly. But, you know, what can you do?

I suppose you could run some date math update to flip stuff back by like five years or something, but I don’t really see a lot of value in that. But, this predicate here is designed to be very wide open.

So, this is just going to grab everything in the table. And, this second predicate on score, this, I’m going to keep increasing this value to return fewer and fewer rows. All right.

So, if we run these three queries, that one’s looking for a score greater than or equal to 5,000. This one’s looking for a score greater than or equal to 15,000. And, this one’s looking for a score greater than or equal to 25,000. If you run all these, what you’ll probably notice in the results is that, just like I promised, fewer and fewer rows come back as we increase that date filter.

Now, if we look at the three queries that ran, let’s just tighten this up a little bit. Nice, tight screenshot there. We’ll see that, well, sort of weird that even as we return fewer and fewer rows, the index seek takes longer and longer.

Right? So, this one is 234 milliseconds. Actually, this one went down a little bit.

Hmm, 226 milliseconds. This one’s 235 milliseconds. Well, actually, you know what’s funny is, like, prior runs of this demo, like, the seek time would actually go up by about 10 milliseconds each time. I don’t know what’s different this time.

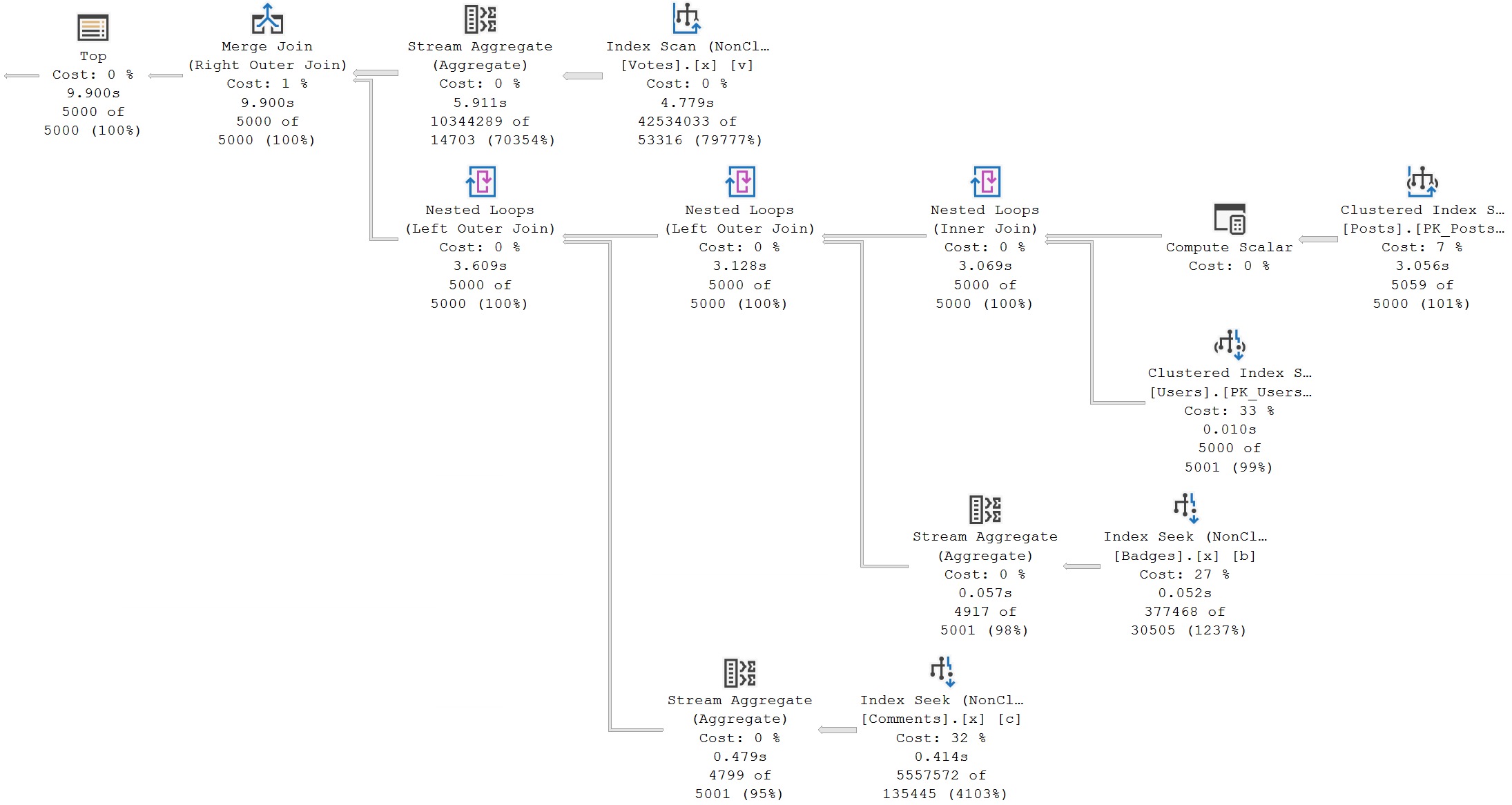

I guess I’m just lucky because I’m on camera. Apparently, the camera does not add 10 milliseconds to your query plans. Just makes your cheeks look extra rosy. But anyway, what the seeks look like for all of these is this.

So, we have seek predicates down here that find some of the data, and then we have these residual predicates up here. Right? So, we’re able to find the start and end date.

We’re able to find at least the start of, or rather, we’re able to filter down to some of the 5,000. But we do the residual filtering later. We can tell we do the residual filtering later.

If we use this very special trace flag called, well, I mean called 9130, numbered 9130, I guess. So, if we run these three queries, what the trace flag will do is take that residual predicate on score and apply it later in a filter. So, if we look at these and look at the index seek now, we don’t see any references to score down here, and we don’t have that other residual predicate up here.

What we have in the query plan is a filter, a secondary filter. That’s a good word, good term. And that is where the score predicate gets applied.

Now, what I want you to notice is that for these three of, like, let’s tighten this up. Let’s kind of tighten these way up, way tight on these. I don’t know why my mouse isn’t cooperating so much today.

A bit frustrating. But if we look at all three of these seeks, we can see that these seeks don’t really narrow down the rows that we’re hitting at all. We basically seek through the index to every value in the table.

Where the filtering actually happens, where we actually reduce the result set, is when we apply that score predicate outside of that range predicate on the dates. So, bringing it down to 5,000 gets us to 73 rows. Bringing it to 15,000 gets us to five rows.

I don’t know why I didn’t get my dot there. Give me a dot. Come on, baby. There we go. And bringing it to 25,000 gets us down to one single row. So, what you can, so, using that, using that information, we might decide that the query that we’ve designed, or rather the index that we’ve designed for this query, it’s not doing, not holding up its end of the bargain.

We’re not getting good filtering from that, from that index with this query. So, let’s give this index a shot. So, I created two indexes at the start of this thing.

One of them called P, and that’s the one you’re looking at now. And I probably should have mentioned this earlier. This one leads with last activity date, and has score as the second key column. Important information.

For you now, for you later, for your life in general. And now, if we start using this index on score, and then last activity date, we’re going to see these queries change a whole bunch. Very meaningful, profound ways.

Now, we are, when we do our filtering, we are able to very quickly and efficiently locate those score rows, and then apply the predicate on the date. So, we narrow these things down very, very early on, right? And if we look at the way these predicates are set up, we are able to find where the score column is greater than or equal to 5,000.

And we are able to find the start range of the dates that we’re looking for here, 2007-01-01. And then up in the residual predicates, this is where we apply the full range of filtering for the dates, right? So, all three of these, well, I mean, they went from, like, kind of like, what was it, 200 and something milliseconds to zero milliseconds.

This is a much, much better indexing proposition for this query. Now, if we go back and we look at the same type of query again, using trace flag 9130, we’ll see some still rather agreeable results. But it is helpful to use this sort of thing to figure out where you’re doing most of your filtering.

Where is most of your filtering actually getting applied? Is it in, like, the actual seed predicate or is it in residual predicates? So, if we look at these three, let’s tighten this up.

Now it’s easy, I think because I yelled at it, scared it. I scared it into submission. Now it’s back to acting up. So, if we run these three queries, when we look at what happens here, we can still see that the majority of the filtering is happening way early on, right? And that the later filter operator doesn’t actually get rid of anything.

73 in, 73 out, 5 in, 5 out, 1 in, 1 out. So, that secondary filter on the date range was not really all that helpful to us, at least from an indexing perspective, at least from a data filtering perspective. Now, you might run into situations like this in real life when you are tuning queries, and you might find that, especially if you have store procedures that allow for these sorts of range predicates.

So, sort of like, if you have, like, where some amount is greater than or equal to something, and some date range, or some data, or some column is between some date range, you might find that as you expand and contract certain ranges, you make more filters more selective, and you make other filters less selective, not fewer selective, less selective. That you might find that the indexes you have become less efficient for those wider and shorter ranges. Figuring out how to handle that is, of course, can be rather complicated.

Sometimes a recompile hint is good enough, especially if you have multiple indexes available for the query to use. Like, if we had both of these indexes available, the second one that leads on score and has last activity date as the second column, and the first one that leads on last activity date and has score as the second column. If these were both available, and we had a recompile hint on there, then SQL Server would be able to sort of figure out via cardinality estimation which index might be better to use in certain circumstances.

It might also make mistakes there, but who knows, right? All you can do is set SQL Server up for success, give it the car keys, and see what happens. If that doesn’t work, then you might have to take it a step further.

You might use dynamic SQL, and you might start evaluating what values get passed in, and you might start hinting different indexes to use depending on the selectivity of the ranges that you have. That people are passing in for their queries. So, like, if you have a very, very wide date range, and a very, very selective score filter, you might say, oh, use the index that starts with score.

If you have a very unselective score predicate, like if you said, like, where score is greater than zero or something, or a score is greater than, like, negative a million, and you had, like, a date range for, like, the last month of the whatever you’re searching, you might want to lead with the narrow date range predicate and have the secondary residual predicate on score that’s not going to screen a whole lot out. So, you might want to use that second column in the index. So, there are all sorts of ways to deal with it.

It really depends on how deep you need to get into fixing these kinds of queries. A lot of the time, it is fairly easy to just allow SQL Server to sort of make choices based on, you know, what it thinks is best at the time. But, you know, plan reuse, parameter sniffing can also be, make that very difficult proposition.

As usual, I do not recommend optimize for unknown. I do not, I do not recommend taking your stored procedure parameters and setting them equal to local variable values. I do not recommend any of these things because they are unwise decisions, most of it.

So, anyway, that about wraps it up for this video. I have one other video I think I am going to record today. I am feeling ambitious.

But, if you like this sort of SQL Server performance tuning training content, feel free to give my video the little thumbaroonie. If you want to get notified when I record more of these, especially when I record multiple videos in a day and you are just like, screw it, long lunch. I am drinking for this one.

Then, please subscribe to my channel. We are nearing ever, ever closer to the 3000 subscriber mark. And I do not know what I am going to do when I hit 3000 subscribers. Maybe, I do not know, maybe I will throw a party and live stream and watch me get drunk alone and write demos.

The majority of my evenings, anyway. So, yeah. Thank you for watching.

I hope you enjoyed yourselves. I hope you learned something. I hope that you will watch more of this prolific in-depth SQL Server training content. Anyway, thank you for watching.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.