A Little About Key Lookups In SQL Server

Video Summary

In this video, I delve into key lookups and indexing in SQL Server, explaining why sometimes SQL Server chooses a clustered index scan over an index seek with a key lookup. I walk through creating an index on the `last access date` column of the `users` table from Stack Overflow’s database and demonstrate how estimated costs can help us understand why SQL Server makes certain plan choices. By tweaking parameters in our queries, we see firsthand how key lookups can impact performance, especially when dealing with a large number of rows. I also touch on the limitations and potential issues associated with key lookups, such as their impact on read committed isolation level operations and the importance of covering indexes for filtering elements. If you’re curious about SQL Server indexing and query optimization, this video is packed with valuable insights that can help you make informed decisions when designing your database schema.

Full Transcript

Erik Darling here with Darling Data, Limited Liability Corporation. And if everything goes well here, you can all thank the wonderful, lovely, talented Drew Ferjuel, Ferjueli, for helping me correct both my audio and my video settings. If you need an audio, video health check, I highly recommend Drew’s services. Part of the health check was putting the microwave, microphone further away from my mouth and also adjusting some of the Streamlab settings that he was really smart about. If you like smart, funny people who tweet occasionally, I suggest you follow Drew at Pitferg on Twitter. I think he has another social media presence, but that’s the limit of my social media presence, so I’m going to leave it there. And Drew has a podcast with his friend Paul Baylor, who I think left Twitter. But you can see more details about their podcasting at doingtheirbest.com. So if everything goes well, thank Drew. Everything goes like crap. We’ll throw eggs at him. So this video is a little bit about key lookups, a little bit about indexing, stuff like that. Again, you know, sort of training wheel stuff, stuff that a lot of folks have questions about when I work with clients. And when I go to the bar and people are like, Oh, it’s Erik Darling, that SQL Server guy, let’s ask him questions about databases. So they get just get recognized in public so much.

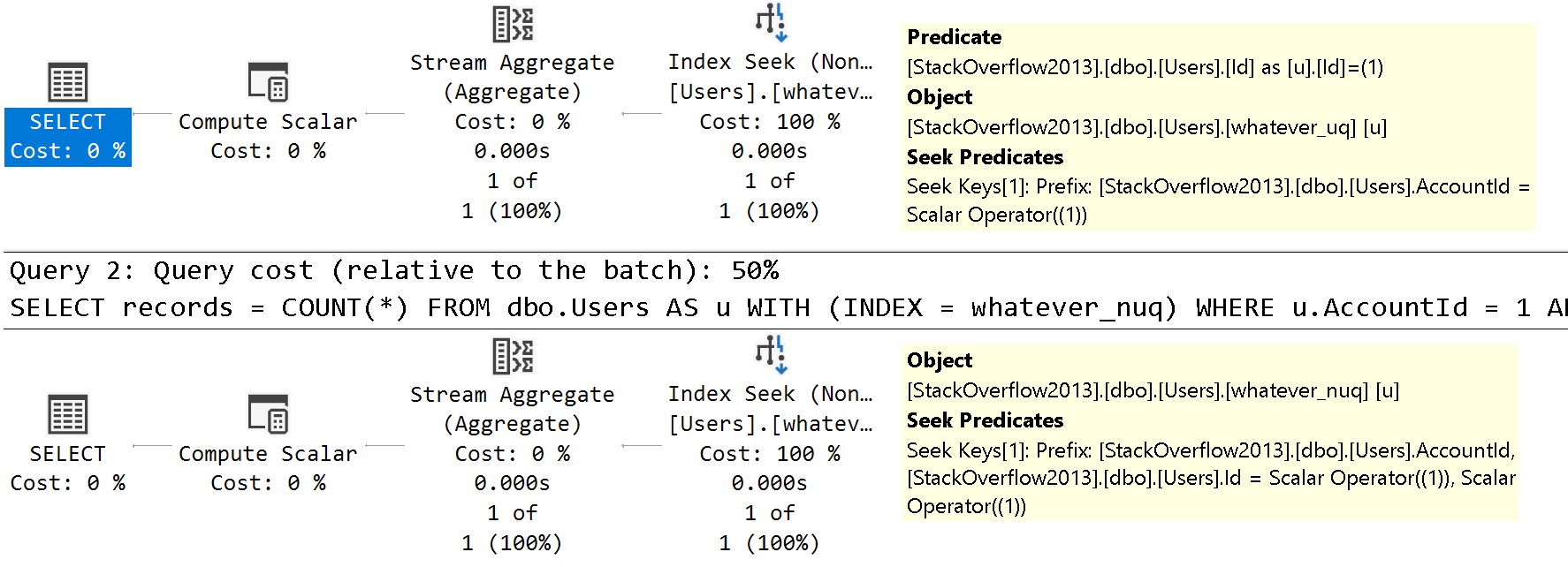

I’m starting to get Eminem syndrome. Anyway, exhausting. So I’ve got this index probably already created. I turn off IntelliSense because I get annoyed with it more often than not. So there’s that. But I’ve got this index on the users table in our stack overflow database on the column last access date. Now SQL Server, a lot of the times, you know, a big question is like, why didn’t SQL Server choose my index? Well, a lot of it has to do with costing. And I’m going to show you what I mean here. So if we let’s get estimated plans for these two queries. The first one performs a clustered index scan. The scan is hidden. For some reason, we are left in suspense with some ellipses. SSMS doesn’t have a way to show you the full object name, apparently.

And the second query does an index seek into our nonclustered index and then a key lookup back to the clustered index to retrieve all the columns that were in the star part of the query that were not included in the nonclustered index. So the clustered index scan way up yonder and the index seek way over yonder. So all very interesting stuff.

Now, let’s look at a couple things here. One, the clustered index scan is going to have the predicate that we supplied to the query in it as a scan, right? There’s no seeking here because last access date is not the leading key column in the clustered index.

The column called ID is, and we’re not searching on ID, so SQL Server has nothing there. Other columns in a table that aren’t part of the clustered index key are, of course, sort of like included columns. They’re only on those leaf-level data pages, depending on which way you draw your binary trees.

It’s either top or bottom or side or whatever. So we can’t seek to that, but we can scan the entire table and get all of the rows there that we care about. So this query has an estimated cost, and I’m going to depart from the point of the video a little bit to talk about costs.

Costs are estimated metrics. They are always estimates. There is no actual equivalent to a cost unit in SQL Server.

You’ll never see an actual execution plan that says the estimated cost was 5, but the actual cost was 50. There’s no way to translate those unitless metrics into actual factual numbers. This is why actual execution plans, when you run them, have operator times.

We’ll look at those in a little bit. So this thing has a cost of 36.2, let’s just call it 22 query bucks. I don’t need to keep all those extra decimal places in there.

And the query down the bottom has an estimated subtree cost of 12.18 query bucks, plus some, you know, query bitcoins or something, whatever they’re worth these days. Now, the reason why SQL Server didn’t use the nonclustered index for this first query, and what I’m going to do is actually change this a little bit, just so we can see things back to back.

Let’s add an index hint in here. Index equals whatever. And let’s look at the estimated plans for both of these.

So the cost of this query is, again, going to be 36, or estimated costs would be 36.22 query bucks. And the estimated cost of this query is 250 query bucks. So this is why SQL Server didn’t choose this plan.

When SQL Server was evaluating plans, making estimates about how much effort in CPU and I.O. it might cost to execute each of these queries, SQL Server just chooses the cheapest one. It has no insight into whether it’s fast or slow.

You can have a very slow, cheap query. You can have a very fast, expensive query. Personally, I don’t think we should refer to long-running or arduous queries as expensive anymore, because cost is just kind of useless when you think about things in that way.

Now, breaking down the percentages a little bit, this clustered index scan costs 100%. And down in the second query, this key lookup equates to 98% of the total plan cost. SQL Server says, when we scan the clustered index, this is where we do all the work.

And the key lookup plan seeking to this data will be 1% of the plan cost, and the key lookup will be 98% of the plan cost. If we look at the details, look at the tooltip. You can see that 246.438 query bucks is about 98% of the 250 point whatever the entire query was set to cost.

So this is why SQL Server didn’t choose the key lookup here. SQL Server has that choice. It’s basically a little bit of a comparison to the case.

It’s basically a little bit of a lookup. It’s basically a long list of ways to do that. It’s basically a little bit of a lookup. Part of the reason why lookups have such a high estimated cost to SQL Server is because the optimizer doesn’t really have a good understanding of modern hardware. SQL Server has this weird assumption that you are on crappy rotational storage or you’re on Azure storage.

I don’t know. Maybe that’s why Azure storage is just that bad. So maybe you’re on old spinning rust.

Maybe you’re on Azure Storage. I don’t know. I don’t know where you are. But the optimizer says, doing all that random IO, doing that lookup over and over again, that nested loops join is going to be expensive.

It’s going to be hard to do. You might have to move the disk head around. You might have to talk to an HTTP request in Azure. Who knows?

Who knows what will happen? It will be a wild time. So that is why SQL Server may sometimes choose a clustered index and why SQL Server may sometimes choose a lookup, as long as the plan for the lookup is less costly than the plan for the clustered index scan.

Good. We’ve got all that figured out now. So let’s create or alter.

Let’s do something with this stored procedure. Let’s do one of those things with it. Completed successfully. Wonderful. We did it.

We made it. Ma’s going to be so proud. And now that we’ve got this stored procedure, we’re going to talk about, I think, a more interesting lookup scenario than, you know, you’re just selecting a bunch of columns.

All these queries select a bunch of columns from the user’s table. Actually, select all the columns from the user’s table. Every single last one. That’s what star means. Give me all of them.

I don’t know if that’s true in regex. Actually, I could be wrong there. Anyway, let’s look at a sort of more interesting scenario with key lookups. We’re going to run our stored procedure with this set of parameters first.

So we’re going to be looking for a last access date of 2017-1122. Now, this is actually a good time to point something out about the data in the Stack Overflow 2013 database. All of the tables were deleted where the creation date of something was after 2013-1231 at the stroke of midnight.

There are a whole bunch of tables. I actually think there’s maybe just two tables that have a last access date or a last edit date column in them. A lot of those have dates way after.

Because the Stack Overflow data dump had data from way after 2000, well, the first of the year in 2014. But that’s not where the cutoff, that wasn’t the column used to decide the cutoff date. So fun things there, right?

So if we run our stored procedure, which is going to look for just a start date greater than 2017-1122. It’s going to add one day to that start date because we are looking for one day. And we just want anyone with a reputation that’s greater than one, which is going to be everybody.

Because you can’t have a reputation under one in the Stack Overflow database. There is a rule against that. And, well, I don’t know.

I mean, I guess you can try if you’re feeling particularly wily. So let’s go ahead and execute this and look at the query plan. Because the query plan is going to return data rather quickly.

So quickly, in fact, I’m so impressed with it. We finished this in three milliseconds. Good for us.

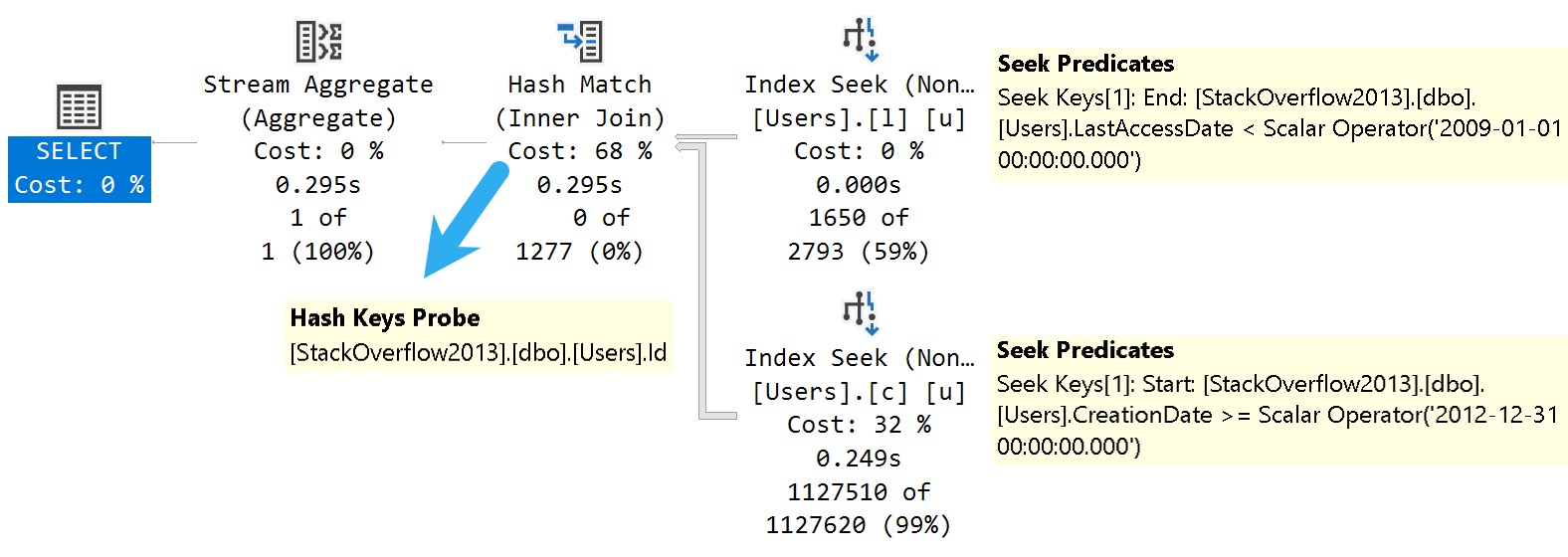

That’s a great, great, great query time there. We did it. We’re master query tuners. So we have the index seek. The index seek successfully evaluates our predicate looking for one day of data.

You know, we did pretty good when it comes to the actual number of rows. That’s 786 versus the estimated number of rows at 643. That’s a pretty good job there, right?

Especially for a range predicate, not an equality predicate. It’s a pretty good guess. Spot on there, SQL Server. But then we have this additional predicate on reputation. Where did that go?

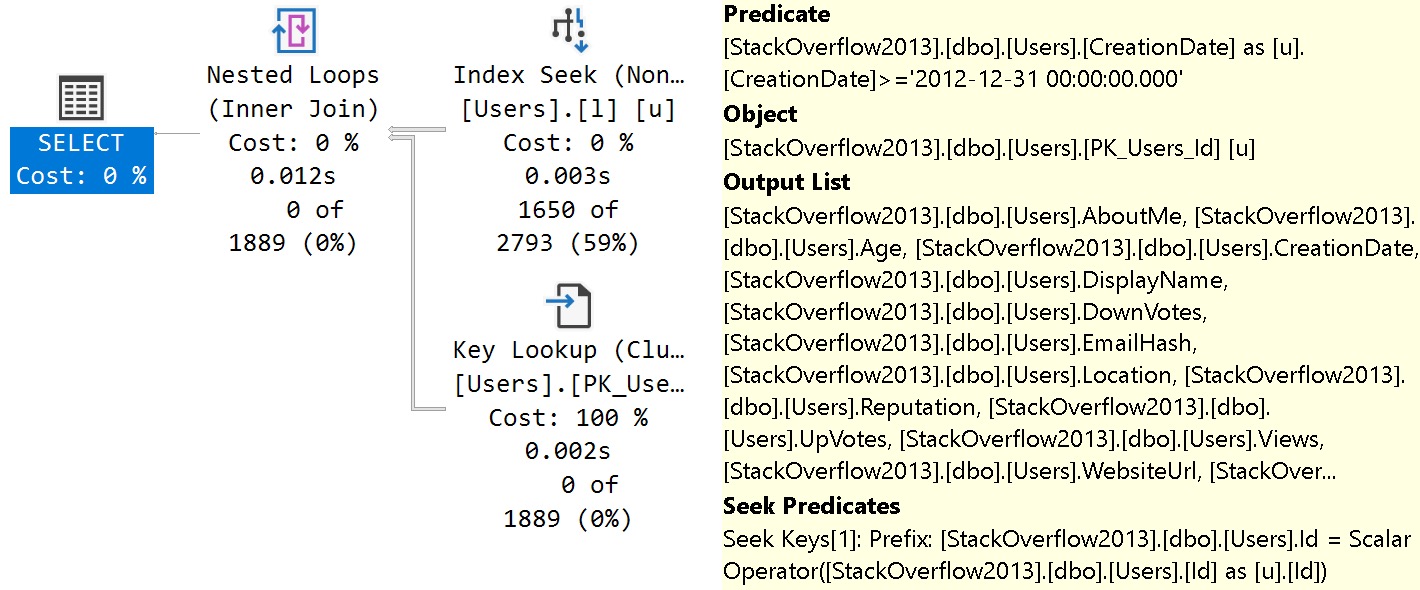

Well, key lookups can do more than just evaluate select list columns and bring those back for the query. We can also evaluate additional predicates when we do a key lookup. Down here, we’re going to see four parts of the tooltip that are sort of interesting.

We have down at the bottom, we have a seek predicate. You notice that this is on the ID column. The ID column is the clustered primary key for the table.

So this is the relationship between the non-clustered and clustered indexes. I talked a lot about this in another video called, like, how SQL Server indexes communicate with each other or something. You should watch that one if you have any questions about that relationship, why that relationship exists.

Then after that, we have this whole output list, which is all the columns that we selected out of the users table. That’s purely output columns. Then one step above that, we see the object that we touched to get all of those columns.

And then above that, we have a predicate. Now, coming back to something I said earlier, the reputation column is just hanging out along the leaf data pages of the clustered index because it’s not part of the clustered index key in a way that we can seek to it when we do this evaluation.

All right. Cool. Glad we got that figured out. So we have four things that we’re doing in the lookup.

We’re joining the two indexes together on the ID column. We’re outputting every column in the table. We’re getting that from the clustered primary key. And we’re also evaluating a predicate on reputation.

Now, I’m going to change the way this query executes a little bit. It’s not going to run for too, too long. But it is going to return a similar number of rows. It’s going to return 517 rows.

The next one should return neighborhood of 500 rows as well. But it’s going to look for data in a slightly different way. We’re going to have the same start date of 2017-11-22. But this time, we’re going to go back a full year.

Or we’re going to add 365 days to the start date and look for data after that. But this time, rather than just looking for a reputation over one, we’re going to look for Stack Overflow users who have a reputation over 110,000. There are a lot of them.

A lot of people with a lot of time on their hands. Or they had one semi-good answer in 2009. And it’s just been collecting upvote royalties ever since. Like thousands of.

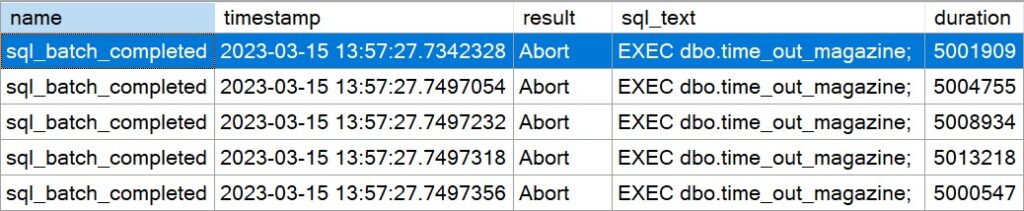

It’s every day. I can’t believe they knew that. I can’t believe they knew that answer. That’s amazing. Amazing. Anyway. If we execute this, it’s going to run for a little bit longer.

Not a lot longer. But long enough to notice. Again, this one returns 505 rows. And to get to those 505 rows, we now spent a lot more time in the lookup.

Rather than executing in 3 milliseconds, we get to about 1 point. Well, about a second and a half here, let’s call it. And that’s obviously not a very good scenario.

Now, part of why this happens is because even though we find a lot more rows up here, which, you know, for better or for worse. Like, honestly, that many key lookups isn’t going to bug me too much.

It’s not going to be terribly slow as long as you’re not on Azure Storage. But when you look at how much time we spent doing that lookup, that was a lot more time than before.

The seek to 668,000 rows didn’t take very long. It was 178 milliseconds. Where we spent the majority of the time was going between the lookup and the nested loops join. That’s where the most of the time was spent.

Now, a lot of the advice that gets given about key lookups, and there are very good reasons to avoid lookups, especially for parameter-sensitive queries like this one. This one isn’t like bad, bad, but it’s noticeable.

There’s also issues with key lookups under the default read committed isolation level, where during that lookup, modification queries will get blocked, because SQL Server may take an object-level shared lock on the clustered index, and other queries won’t be able to modify that index when they go to modify data in the table and other indexes that have those columns involved in the update, or the modification, rather.

It also may lead to read queries and modification queries deadlocking with each other under the read committed isolation level for almost the same reason. Almost the same set of reasons there.

I have blog posts about that. I will put those in the show notes. It’s not a show. It’s just a video. Get over yourself. So anyway, a lot of the advice that people may give you when it comes to avoiding lookups is to cover the whole entire query.

But in the case of select star queries, or even just sort of long select list queries, this isn’t terribly good advice. This is actually pretty terribly bad advice.

Having a lot of super wide indexes on your tables to cover all these queries, not really all that great. So what you can do in situations where you have an index that requires additional columns for filtering is make sure that SQL Server can at minimum apply all the filtering elements of a query to one index access.

You can still get the output columns from another index, but make sure that at minimum your where clause is all covered by one index. There are, of course, situations where you might want to have a couple nonclustered indexes to do that.

Again, we talked about that in the how indexes talk to each other video where we had an index union plan, if I’m remembering correctly, where that was a pretty good plan for using two nonclustered indexes and bringing those together. But let’s change our index up a little bit.

The order of columns here isn’t going to matter too much, but we have last access date and then reputation. So if we remake this index with reputation in it and we run this first query, this first query is still going to be very fast.

It’s still going to be just about at the three millisecond mark. We’re not going to see a big difference here. All right. But when we execute this query using the other set of parameters, this one executes much quicker. It’s no longer a second and a half.

It’s lingering just under the half-second mark. So we’re able to shave just about a second off this query by allowing SQL Server to do all of its filtering from one index. And I’ll show you what this looks like now.

If we go to the lookup, we no longer have that predicate up here where reputation was. That predicate on reputation now is, well, not only a seek predicate here, but we also have an additional residual predicate here.

We can seek to like the start of the range, but then that residual predicate up top evaluates the remainder of the range. Anyway, that was just a little bit about lookups, you know, kind of what they are, how they work. Why SQL Server sometimes hates them, how bad Azure storage can be, and how to fix lookups if they are sort of parameter sensitive and if they are causing performance issues.

Mainly that you want to pay extra attention to when you have lookups that involve predicates on the clustered index side there. Because that means that you have sort of an incomplete index for the starting filter and you need to adjust that.

Anyway, thanks for watching. Hope you learned something. Hope you enjoyed yourselves. And I will see you in another video. Again, I’ll remind you to thank my good friend, Drew, who has a podcast, if this turns out well. If not, I don’t know.

I don’t know. I don’t know. Just go on with your lives. Just be happy. That’s all I want. I want everyone to be happy. Thanks for watching.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.