User Experience Under Different Isolation Levels In SQL Server

Thanks for watching!

Video Summary

In this video, I explore the nuances of isolation levels in SQL Server, specifically focusing on read committed and read committed snapshot (RCSI) isolation modes. Given my recent foray into unexpected hot weather, it’s clear that even my brain can get a bit foggy when things heat up! However, today’s content is anything but lukewarm. I delve into how these isolation levels behave differently under various conditions, particularly highlighting the challenges and inconsistencies you might encounter with read committed. Through a series of demonstrations, I show how queries running under read committed can be blocked or return jumbled results due to changes in the underlying data, even for short durations. By contrast, RCSI provides a more consistent snapshot view but still doesn’t reflect intermediate changes within transactions. This video aims to help you understand the trade-offs and choose the most suitable isolation level based on your application’s needs, ensuring better performance and fewer surprises.

Full Transcript

Erik Darling here with Darling Data on an unexpectedly hot and sticky day here in New York. So unexpectedly hot and sticky that I think parts of my brain have just ceased functioning. It’s just shut down. Nap time. We will siesta. We will siesta you tomorrow. That was bad. That was real bad. I should have thought about that before I said it out loud. I have that problem, though. I do have that problem. So today’s video is going to be sort of, I’ve said in a number of videos about isolation levels. I like talking about them because exploring isolation levels is an important part of any SQL Server journey because eventually you will hit strange concurrency phenomenons that you can’t explain or reproduce or even find out. any real evidence of. And so I want to talk about what I’ve said in these videos is that almost no isolation level is universally perfect for every workload. And it’s more about making a choice about you making a choice about how what you want your application to behave under certain circumstances. So we’re going to compare and contrast a little bit between read committed to the pessimistic isolation level and read committed snapshot isolation level. I know that I recorded a video sort of about this, but I came up with this demo that I like because it highlights a lot more of the weird changes that can take place while a query under read committed is unable to make progress or even is just making slow progress for some reason. Like in the case that I’m using, there’s some blocking involved. And we’re going to compare and see what I’m using.

And in real life, you might have a query that’s just like has to read from a big table or like scan along or seek along a big index. And maybe that index isn’t in memory. And maybe you have to maybe it take you a couple seconds. And a lot of stuff can change in a couple seconds. The point of this isn’t like, like, you know, begin trend, do a few things and like look at all the stuff that changed in like 10 seconds. It’s like a lot of this stuff can change very quickly. Like within like a few hundred milliseconds. And your query would still return just jacked up looking results. So I’m going to hit execute here to reload this table. I’ve got a table called read committed stinks. You know, perhaps a little overkill in the table name. You know, I’m willing to give read committed a little bit of credit. I’m getting a haircut tomorrow. And this like this patch here is just annoying the crap out of me. But if I had the wherewithal, I would probably just shave my head at this point. Nothing but trouble. So this table has like some standard like account information stuff.

You can ID and account ID, how much money you have in the account, your first name, your last name, when the account was created and the last time you were active in your account. And I couldn’t think of any good people names. So I just stuck a bunch of brunch menu items in the table. The prices do not reflect my respect for, adoration of, or preferences for these brunch items in any way.

I just rattled them off the top of my head. So please don’t try to infer anything about my brunch habits based on this table. And what I want to do is just give you a quick view of what this table currently looks like.

It pretty much looks like what I did up there, except now it’s a nice Excel format. All right. So that’s all null. That’s all like right now. We got first names, last names, values, everything.

Everything that you could want in a table. Really. Except maybe throw a nice XML or JSON column in there that just concatenates all that stuff together so that you can pull that out with your application instead.

I don’t know. People do dumb things all the time. So I want to make sure that recommitted snapshot isolation is off for the first run through. And what I’m going to do is, well, I’m not going to do it quite yet.

I’m going to have to hold on to those horses of yours for a couple seconds. So over in this window, I have a couple queries. This first query is doing a select from our knee-jerk table name.

And the idea is to look for anything with an account value greater than or equal to 1,000. You could put, you know what’s kind of funny about currency in SQL Server, is you could put any currency symbol in front of that 1,000, and it would find anything over 1,000.

SQL Server does not do currency conversion for you. So if I made that pound sign or whatever the euro is, it would just look for 1,000. It wouldn’t be like, oh, well, the pound is worth an extra 50 cents or something.

We’re going to look for anything that’s over 950. No, it just looks for anything over. You can put any currency you want in there.

You could put, like, I don’t know, whatever they use in Zimbabwe. If Zimbabwe is still a country, I’m not sure. You could put, like, 1,000 Zimbabwean dollars on there, and it would just be like, yeah, it’s over 1,000.

No problem. SQL Server does not do currency exchange rates for you. So you’d have to write a CLR function to go do a web call and check exchange rates and bring that back and then do some local conversion.

I don’t want to give you any worse ideas, though. So this query goes and looks for anything with an account value over 1,000. And if we think about what, you know, I put into the table up here, everything is over 1,000, right, or 1,000 or greater, right, 1,000 through 10,000.

And I’ve got this little decoder column because I’m going to make changes to the table that are not going to be reflected or might be extra reflected in the final results.

And I have go 2 after this because I want the first go. So this is going to execute this one query twice. I want the first go to get blocked to show you what happens when a query gets blocked.

Even for, again, like a couple hundred milliseconds, all this stuff could change in. And then run the query again afterwards because what I want you to see is that read committed, this pessimistic isolation level is not a snapshot of your point-in-time snapshot of your data, and that running the same query twice in a row can get you very different results.

And then I just have a query down at the end that just gives a select of everything in the table, right? So let’s come back up here a little bit, and let’s switch tabs, and let’s go and begin a transaction.

And what we’re going to do is we’re going to update the account value for ID 7, and we’re going to leave that hanging for a second. Now, again, just as I start this one off, again, like this isn’t stuff that has to go on for a long time in order for you to get mangled results from read committed, the pessimistic isolation level, because all of these queries that I’m going to run to make changes to the table, like, for instance, this is going to update three rows to set account value to 999.

If you remember, our original query is looking for anything with an account value greater than or equal to 1,000. And so these rows would no longer qualify.

We’re going to mess with a couple primary key values. And I realize that primary keys don’t often change in a database, but you might be working with other data that has more volatile keys.

You might be working with data that does not, like, that does change fairly often, like the key of an index that does change fairly often, right? Certain relational values might change from time to time.

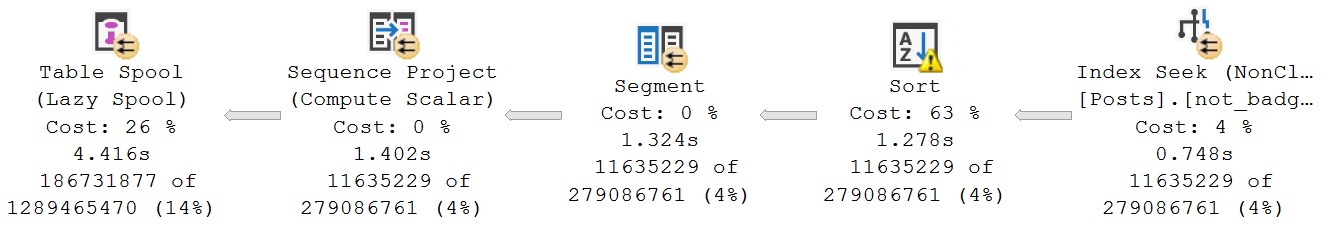

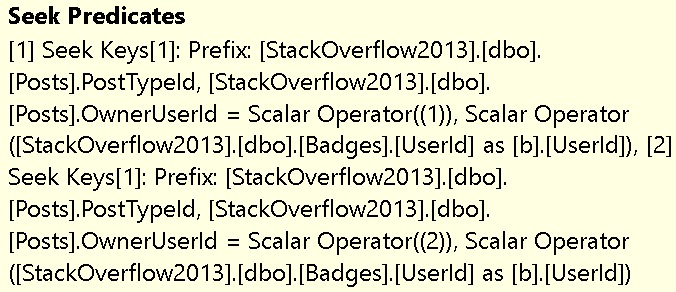

And so you might see weird things where rows get thrown around and just juggled all over results. Think about, like, the context of Stack Overflow, where you might get a hot network question, and your score might jump tremendously, very quickly.

Or you might get downvoted into oblivion very quickly if you give a bad answer or ask a bad question or something. All this stuff can change, rapid fire. And you could move around in results and queries that you, results you should have been and that you’re not, or query results that you shouldn’t be and that you are.

It’s terrible, right? So I’m going to delete some rows, and then I’m going to reinsert a couple rows. Now, what I’m doing here is, you know, again, kind of tricky. I’m just switching French toast and steak frites around, right?

So they’re going to both get values of 1,000, but I’m going to flip their primary key values and their user account values, I think. Yeah, that’s all flipping.

So, yeah, made a bunch of changes, right? And again, this doesn’t, I don’t know how long I’ve been talking about this for, 10, 20, 30 interminable, uncalculatable numbers of seconds. But now I’m going to commit all those changes.

So, bloop, you go and do something. And now this query is finished. So what we got in our results, this first chunk of results up here, and if this SSMS would be so kind as to let me drag things around, the first set of results, remember, we paused on ID 7, right?

ID 7 was where we paused, because that’s where we got blocked as we were setting the account value to 5,001. So we made a whole bunch of changes to this table that are either reflected because we moved things past 7 or not reflected because we made changes before the ID 7, right?

So we read up to ID 7, got stuck, and we made a bunch of changes all around ID 7 that made these results weird. So like ID 9 didn’t change. ID 11 used to be ID 6.

ID 6 got set to ID 11. ID 7 was where we were blocked. This row got deleted. This value, this value, and this value all got changed to 999.

This got deleted and reinserted with different values. This got deleted and reinserted with different values. And now we have some weird stuff in the table. So coming back over here, right? Remember the table definition where I don’t only have a primary key on ID, but I also have a unique constraint on account ID.

And if we come over and look at the initial set of results, right? We have stuff that’s kind of all over the place.

We have two account IDs, 1006. We have two scotch eggs in a row with different IDs, but the same account ID. We have two steak frites, right?

If we look here, where’s that other? Whoa, that wasn’t what I wanted to do. Yeah, we got stuff all over the place. Like here’s, zoom back in there. Come on, zoom it, work with me.

We got one steak frites here. We got another steak frites here. We got a bunch of rows with, you know, account values that changed, right? Like these got set down. This one got set down.

This is the first run. This is just the block query, right? So a bunch of stuff changed around that query that this read query was stuck. It did a bunch of reads and then went, oh, I don’t know. I don’t care if things change all around me.

All I know is that I’m stuck here, right? We’re just stuck on ID 7 and we made changes all around ID 7. And really the results should have looked like this. We should have only gotten six rows back and we should have gotten six rows back with these values, right?

Like this is the actual state of the table for accounts that have a value greater than or equal to 1,000 after all that stuff goes through, right? And this is just what the table looks like as a whole.

So you can see like this is all just janky. Like I think one of the things that like really sticks out is in the first result set, this last activity column, even though we made a bunch of changes and updated this for a bunch of rows, only two of the rows actually ended up with a last activity date, right?

Well, the rest of them are null. So this looks bad and weird, okay? Like it’s not great. Like if you think about like repeatable read or serializable, like sure you wouldn’t have necessarily those problems.

You would just have fun deadlocking problem because, you know, if you tried to like run an update in the transaction and then selected data with serializable and then you ran another update, it would just deadlock, right?

Just same with repeatable read. It’d be over with, through, kaput, finito. So let’s contrast that with, of course, RCSI. Now I’m not saying that the RCSI results are necessarily better because the RCSI results are, well, they’re more consistent.

I’ll give it that. But you’re still not getting back like the intermediate changes within all those things, right?

So if we, I think I have to rerun this because it’s going to tell me that, oh no, it didn’t work. Okay, good. All right. So let’s reload the table. And for some reason, that something weird opened up on my monitor.

So forget that. So let’s reload the table. And now we have the table set back to its original state. We have RCSI turned on. And now if we do this and we look at the table, we come over here and we run this, we’re just getting a snapshot of the table back before this change to 5001, right?

Because that first update that we run over here, we’re saying set account value equals 5001, where ID equals seven.

So we’re still getting back the last known good row for ID seven, right? This did not change to 5001. We did not have a last activity date. And the same will go for all the other stuff that happens within this transaction, right?

If we update these things and we come back over here and we run these queries again, nothing in this table has changed, right? Like everything is exactly the same as before things ended up being committed.

And if we run this and do the inserts and we run this, like we can still see that we’re not reflecting any changes, right? We’re not blocked, but we’re still not showing the changes from within this transaction yet.

If we commit this, of course, let’s just make sure that’s extra committed and we run this. Now we see all the changes, but that was a lot like when, like in the second query, sorry, the first demo, when this query got blocked up and like didn’t, like didn’t show a bunch of wrong stuff, like inconsistent state data from the table, like as things changed around it.

And then this one down here, which is, you know, essentially, like in the first query, this one was essentially like the right results. Like we just had to run the query again after the blocking resolved.

And this is sort of like the full state of the table. So yeah, basically, coming back to my original point, no isolation level is exactly perfect.

What you want to ask yourself when you’re designing applications and when you’re trying to choose things like isolation levels is what you would prefer. With read committed to pessimistic isolation level, if you, you know, remember that first set of queries that we did, we got blocked and our query returned bad results anyway.

So being blocked in SQL Server is not a pleasant user experience. It’s not fun for anybody. So our query got blocked and then still gave us crappy results at the end.

Under RCSI, like we didn’t get blocked. We didn’t see all of the reflected changes just yet. We didn’t get blocked though, right? The query results returned immediately. I’m not saying that users would be maybe satisfied with that because like, you know, they got back like that snapshot of the table where ID7 still hadn’t changed, right?

We did all that stuff around ID7 and we didn’t see any of those changes until the transaction committed. That might be right for you, but that might not be exactly what end users are expecting either.

So really, it’s just a matter of you asking yourself the question, do I want users to get, you know, like committed data back very quickly or do I want to risk users getting blocked and like getting like crappy data back after getting blocked for a long time?

For me, I would much, much rather prefer, I don’t think I need to rather prefer. I would much rather or I would much prefer, not much rather prefer, that’s absurd, I should smack myself, self-flagellate for using English so dumbly.

I would prefer that users get consistent data back until a transaction commits and then see the final result of everything that happened in that transaction rather than get blocked, get back some plum weird results and then have to rerun it again anyway.

So, you might not want that though. For some reason, you might hate your end users and you might want them to get blocked and you might want them to get janky results back and have to run the query again.

Maybe you charge them by the query, maybe you charge them by the CPU tick, maybe every month, at the beginning of every month, you record the number of CPU ticks and at the end of the month, you record the number of CPU ticks and whatever the difference is, you charge like a dollar a tick or something.

Right? This is a pretty good pricing. I think, wait, isn’t that a DTU? That’s interesting. Anyway, yeah, these are the application design questions that you should ask yourself.

Now, again, something that I think obviously bears repeating even though many of you, if you’ve been watching my videos, are already well aware of this. If we use no lock, everything would have been terrible.

Right? We would have seen not good stuff along the way. So, we’re not no locking. We’re not going to, we’re not even going to demo it because I don’t want people to say, oh, look, Eric did no, like, like, turn off the sound and just be like, oh, look, Eric did no lock in the demo and it didn’t get blocked and maybe that looks cool.

I don’t, I don’t, I don’t think that’s all right either. So, we’re going to not do that. What we’re going to do is take this, take this time to reflect upon the applications we’re developing and ask ourselves, what would we prefer users experience with our application?

You know, it might be okay for users to just hit refresh a few times and get eventually the right results, eventual consistency, or you might just want them to sit there and get blocked for a while and then get weird results back.

Start threatening to sue you or something. I don’t know. I don’t know how all that works. So, take this time, take this lovely, well, I mean, it’s Monday afternoon here.

I don’t know when you’re going to be watching this. So, take this, take this moment now, this moment, while you have it. This time is ticking and precious and fleeting and you’re never going to have this time back.

So, take this time to really think carefully about how you want your applications to function and how you want your end users to experience your applications. RCSI is usually a better choice for most applications and most application developers.

It takes away a lot of the headaches that come with having to troubleshoot blocking and deadlocking between readers and writers and it just generally gives you more consistent results without the concerns of no lock or read uncommitted.

So, yeah. Yeah. I think that’s about good there. Like I said, it’s hot and my brain is fried.

So, we’re going to end this one here. Thank you for watching. I hope you enjoyed yourselves. I hope you learned something. I hope you all go turn RCSI on immediately. I kid, but I don’t kid.

If you liked this video, there’s a little thumbs up you lucky button I’m told on YouTube that you can say thank you with. Comments are another nice way to say thank you.

And if you would like to see more videos like this immediately, hot off the presses as soon as Beer Gut Magazine finishes cutting me my check and shooting me up with my adrenochrome, I can talk through this, and I record the video, then you can subscribe to the channel and experience me in almost real time.

I guarantee you I’m quite a real time experience. You should do that. You and me. Subscribey buddies.

I’m going to go open windows and take a cold shower. Maybe not in that order, but in some order. Eventually that’ll be consistent too.

Anyway, thank you for watching.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.