Five Reasons Why Your SQL Server Is Slow Right Now

Thanks for watching!

Video Summary

In this video, I delve into the reasons why your SQL Server might be experiencing performance issues right now. I start by setting up a clear environment using the remote DAC (Dedicated Administrative Connection) to ensure that you can run analysis scripts without interference from ongoing problems. I emphasize the importance of having useful scripts like `sp_whoisactive` and `sp_PressureDetector` at your disposal, as they provide critical insights into what’s happening on your server. The video then explores common issues such as CPU and memory pressure, long-running maintenance tasks, blocking, and parameter sniffing, offering practical advice on how to identify and address these problems before a restart clears out all the evidence. By sharing this knowledge, I aim to help you gather valuable information that can prevent future issues and improve your SQL Server’s performance.

Full Transcript

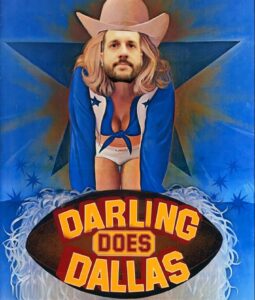

Erik Darling here with Darling Data. And in today’s video, we’re going to talk about why your SQL Server is slow right now. I’m a little blurry. Hang on a second. I gotta fix myself up a little bit. There we go. Now we’re nice and crisp. Recently voted by BeerGut Magazine as the most crisp SQL Server consultancy in the world. So it’s always nice to get an accolade from BeerGut Magazine. It’s a nice change of pace from when they’re threatening to sue or assassinate me. So, you know, ups and downs in relationships, restraining orders, stuff like that. So the things you’re going to need when your SQL Server is slow are typically the remote DAC. That is the dedicated administrative connection. And you’re going to want that because you’re going to want a way to connect the SQL Server and run your analysis queries in a way that is not impacted by whatever awful explosion of poop stuff is going on all around you. Right? You need to be able to, you want to be able to run your analysis scripts unfettered by the catastrophe unraveling on your SQL Server. You also need some useful analysis scripts like SP Who is Active and SP Pressure Detector. Those are very good things to use in order to figure out exactly why your SQL Server is active.

So, you know, the SQL Server is unhappy at the moment. Right? So, connect to the DAC. Use the scripts. Third-party monitoring tools are cool, but under sufficient pressure, they can’t collect data either. You don’t want your third-party monitoring tools connecting via the remote DAC to get stuff because then it won’t be available to you if you need to do anything. So, you know, it’s, well, it’s attempting, right? Because you’re like, oh, if I just have them connect to the DAC, they’ll never miss out on anything, they’ll never miss out on anything, it’ll be great. Unfortunately, you might want that for yourself. So, don’t, don’t, don’t do that. Don’t, please don’t. Great. But, yeah, like, you know, if you might see if, you know, SQL Server really hits the skids at some point, you just might see big gaps in your monitoring tool dashboard where it wasn’t able to collect data either. So, at best, it can confirm when things hit the fan, but not which things hit the fan. And the reason why I’m doing this video is to sort of put a few different things all together in one place because I still run into this a lot and there’s nothing more frustrating as an outside SQL Server observer than when someone says, hey, we had a problem three days ago and restarted SQL Server. Can you tell us what happened?

Because the answer is no. Once you restart SQL Server, you clear out everything useful that I could have looked at. Granted, I get it. Sometimes you’ve got to restart SQL Server to resume, you know, those business critical tasks like making money. All right. If you don’t make money, what do you do? All right. And I’m looking at you, CrowdStrike. Stink. Stink bombs you are. So, you know, restarting SQL Server, well, it might get things back on track. It removes, you know, just about anyone’s ability to, like, tell you what exactly happened at that time. It clears out all the useful stuff, you know, and it might also prevent some useful things from being logged.

Now, there may be some evidence of what happened in other places, like the error log, query store, maybe sometimes catches things assuming it’s query related and not like something different. But even then, the getting meaningful evidence out of query store after a tragic event is kind of, I mean, it’s a stretch, right? So, what I want you to be able to do is log in to SQL Server, use the remote DAC, run useful analysis scripts, maybe even take some screenshots, maybe even save some query plans, maybe, you know, get some stuff out of SQL Server before you reboot it and get business back on track.

You know, usually if you take an extra 30 seconds or a minute to get some additional information, it’s okay, right? Like, people might sweat it out a little bit more, but, you know, if you say, you know, this will help us avoid it in the future, you know, most people are going to be okay with you doing some, like, you know, forensic digging before the reboot. We’re going to leave aside some things that should be happening, you know, in a more controlled way.

So, things like hardware changes, index changes, and settings changes, those aren’t things that you should be doing while people are trying to make money. Those are things that you should be doing in, you know, low impact times, not business critical times, right? You should avoid those things then because you want to do those things when, like, you know, you have time to, like, make the change, look at SQL Server for a bit, kind of look around, get your palms a little sweaty, and figure out if that’s a change that is okay to stay or you need to roll back.

We’re also going to leave, like, the unexpected failover off this because, you know, the unexpected failover is sort of… Surprise failovers are almost like auto stats updates and they’re, like, sudden, like, kaboom! Why is everything awful? Ah, that’s why.

And let me tell you something. I am never going to troubleshoot a surprise failover. That’s not my deal. I know people who will do it, so if you need that, I can point you in the right direction. But if you want to know why your AG failed over, this is not the face you want on camera.

Surprise stats updates are tough, too, because there are so many of them that could happen. And there are so many different things that they could affect that it’s like, you know, well, was it one stats update that bonked everything? Or did, like, 15 different stats updates and, like, cause 15 different problems?

It’s very, very difficult to track that stuff down. And it’s well-nigh impossible to do it if you’ve restarted SQL Server. Now, for settings changes, if something happens out of band, right, you know, I’m not saying who did it.

Neither will SP Log Hunter, but let’s say SP Log Hunter will look through SQL Server’s error log, and it will track down what settings changed when, but not necessarily who did them, right? You might be able to figure out who did them, because someone might suddenly, like, set all their slack away messages and be like, Nope, out for a jog.

Couldn’t possibly have been changed in MacStop and CosRashold in the middle of the day. Wouldn’t be me. Nope. This video is more about figuring out kind of what’s currently happening and the best ways to focus in on different aspects of what might be happening. We’re going to cover CPU pressure, memory pressure, out-of-band, or long-running maintenance, blocking, and parameter sniffing.

So we’re going to, probably the five most common things I see when I’m troubleshooting SQL Server issues. There have, of course, been some really interesting problems that I’ve seen over the years. They’re just not common enough to put into a video because, you know, if they’re outlandish, wild, like once to half a dozen times in a consultant’s lifetime things, they’re probably not stuff that you’re going to run into often enough that you’re going to get any value out of it.

So let’s get started before I mess something up. And the first thing that we’re going to do is focus in on CPU pressure, right? So this is what this server looks like when everything is okay.

If we sort of focus in on this section here, we have 704 threads. There’s some background stuff running that’s used 82. So we have 622 available, nothing reserved, no threads waiting for CPU, no requests waiting for threads, and we have no current thread pool weights.

All good signs. Another good sign is there are no queries running down here. This section is completely empty. Grand for us.

So let’s stick this store procedure into… Oh, I already did that, and I’ve already got a thousand threads lined up for this thing. And I’m just going to kick this off, and I’m going to run SP pressure detector a few times just until we start seeing thread pool weights start to show up in here because that’s when we know things have gotten rather interesting.

And they should start piling up. There we go. All right. Now we’re cooking with gasolines. All right.

So I’m going to kill that just because we don’t need to make things any worse. So here’s what we need to… Here’s what you want to look for. Basically, remember when this was a positive number and not a negative number, and these were all zeros?

Those were much better times, weren’t they? Everything on our server was nice and hunky-dory, and we were having picanics together, and we were eating crepes.

We were Lady and the Tramping Crepes-Suzettes, and it was lovely. It was truly a magical time in our lives. That time has passed. And what happened was we had a bunch of queries all come in and beat the crap out of our server, and this was not a very good time.

For us, no. This is times of war, famine, and pestilence, plague. But enough about 2020.

So we had all these queries running, and we exhausted our available pool of worker threads. We went negative on the worker threads, and SQL Server was lining up queries to execute. Now, one thing that SQL Server will do when it runs low on worker threads is it will start downgrading queries to DOP1.

Now, if you notice that there is one query in here running at DOP16 still that has 80 parallel workers assigned to it. Wowee!

80? This must be some query. This thing has been, you know, this is just one query that’s running in parallel. The rest of these have all been downgraded to DOP1. Or actually, I mean, there’s a few other 16s in there that are sucking up resources.

So the SQL Server does sort of like have a protective mechanism in here. The funny thing is that you will see a parallel plan for all the DOP1 queries. They’re just, it’s a parallel query running on a single thread.

So have fun with that. So this is some of the stuff that you’ll see when things get bad, along with all these null session IDs just waiting on thread pool, right?

So this is the kind of stuff you’ll see when you’re under CPU pressure. Now, let’s look at what happens when SQL Server comes under memory pressure, because that’s an important thing to understand as well.

By default, SQL Server is willing to give any one query 25% of your server’s max server memory setting. And it’s willing to give any group of queries up to 75% of your server’s max server memory setting to run and do memory granty things.

Memory grants most often come from sorting and hashing. So I’m going to run, well, we don’t need a thousand of these. That’s just mean. We’re just going to do 10 of these.

And if we focus in on memory here, we’re going to see exactly kind of what happens when SQL Server runs out of available memory to give to queries. So here is where you can see what your memory grant settings are for SQL Server.

These come from resource governor. Whether resource governor is enabled or not, this is where the settings sort of come from. If you’re on enterprise edition, resource governor can be a great way to turn that 25 number down to a much lower number so that you don’t have to deal with queries asking for insane memory grants and not using them.

SQL Server is very aggressive when it comes to the memory granting. But what you’ll see under memory pressure is you’ll see probably something like this memory clerk SQL reservations number get real big, right?

That’s 50 gigs of memory grants. And you’ll see some numbers down here start to change too. So I’m going to rerun this after I talk about this stuff so you can see that these numbers do tank down when you’re under memory pressure.

So you’ll see we have three queries that have been granted memory, seven that are waiting for memory. We’ll see the 50.21 gigs that we saw before, right, of granted memory going out the door. And these numbers in here will change, right?

So these numbers in here will get lower, especially the available memory column. And if I give SP pressure detector a run with nothing going on, you’ll see that these total and available memory columns will float right back to 60 gigs, right?

So one thing that I want to say real quickly, though, is if you don’t know what’s happening on the server, you don’t have to worry about running SP pressure detector with any parameters. If you run it with nothing, it’ll give you all the results and you’ll be able to pick out from there what’s going wonky, right?

So just like a bare but naked run of SP pressure detector will give you weight stats, disk stats, what do you call them, perfmon counters, tempDB info, memory info, like all the stuff that we’ve seen with the CPU and memory.

Like it just returns everything. So you get all that stuff back at once and you can pick and choose from what’s happening. So under CPU pressure, you’ll hit a lot of thread pool weights. Under memory pressure, you’ll most likely hit resource semaphore weights and maybe even resource semaphore query compile weights, depending on how that memory is getting booted out the door.

So that’s a couple of signs of like physical pressure on the server, physical hardware pressure on the server. Another thing that you might run into is runaway maintenance. So I’m going to focus on dbcccheckdb.

But you know, it’s kind of funny. I sure do wish that when I saw runaway maintenance, it was dbcccheckdb. Usually when I see runaway maintenance, it’s some buffoon rebuilding and reorging indexes at 5% and 30% because they read it somewhere in 2003 from a blog post that doesn’t even have like work in code formatting or a bunch of broken picture links.

So as much as I wish that folks would listen to me and stop doing useless index maintenance, it still happens a lot and I still have to talk people out of it. And some people will cargo cult around this useless facility until eventually someone pulls them kicking and screaming away from SQL Server.

I don’t, you just can’t help some people. It’s something that I’ve learned after about 10 years of consulting is you just can’t help some people.

They will just do whatever they want to do. They find their comfort zone and they stick to it. Nothing can get them out of it. So I ran dbcccheckdb before I started recording this so that we would have a log of how long it took, which is 24 seconds.

I promise we’ll get back under my armpit there. 24 seconds for dbcccheckdb. Now, you know, you can think what you want about the 24 second number. Doesn’t make much difference to me.

But if we run, now if I ran this query workload without dbcccheckdb also running, I want to say it took like 15, 20 seconds. But if I run, if I kick off dbcccheckdb, here, and I start running this here and go boop, both of these, both of these will suffer.

Right? dbcccheckdb is going to take longer and this query workload is going to take longer because they’re happening at the same time.

Now, again, very simple and easy way to see if this is what’s affecting your server. Run spwho is active because you’ll see if dbcccheckdb or any other maintenance task is running. Now, things that I would absolutely check for, checkdb, index maintenance, and full and differential backups.

If I would expect to see log backups happening pretty often during the day, because if it’s important business data, well, guess what? Log backups are a good idea. Huh?

Yeah, they’re great. Log backups. Who would have thunk it? Amazing things. You see those running during the day? Okay. It’s fine. Checkdb, full backups, differential backups, and of course, any form of index maintenance could be a pretty tough sell there.

I would even say that unless you specifically schedule statistics maintenance to happen during the day, I would be also very surprised to see stats maintenance happening during the day.

So, just to qualify what I said about useless index maintenance, statistics maintenance is not useless. Do that. It is good for you. It is a part of a balanced breakfast. But now, coming back to what I was saying, with the query workload running and Checkdb also running, well, Checkdb took an extra 10 seconds down here, right?

Look at that. 35 seconds. Not anything outlandish, because, you know, this does have to sort of fit into a video that someone will watch.

And the query workload over here took 18 seconds. Okay. So, this didn’t change too badly, but the DBCC Checkdb took longer. But one thing that I would look for, I mean, that is about, I think, five seconds worse than it was without it.

But anyway, one thing that I would absolutely look for is runaway maintenance tasks, because, you know, DBCC Checkdb is not a blocking problem. DBCC Checkdb is like a resource usage problem.

You know, index maintenance very much so could cause lots of blocking problems, right? Even with online equals on, you can still see blocking from that stuff. So, be very careful there.

Moving right along, another very common reason why SQL Server might be slow right now would be blocking. So, let’s come over here, and let’s begin a transaction, and let’s do a very small update just to take out enough of a lock to cause a problem.

And let’s copy this, and let’s stick this in here. And we don’t really need to, you know, give this a lot of threads in order to show you what’s happening.

But we do need to run spwhoisactive in a very specific way. So, we are going to run spwhoisactive. We’re going to use findBlockLeaders equals one. My finger disappears here, apparently.

So that we get the blocked session count column in our output. We’re also going to use getPlans, because getting execution plans for these things can often help us figure out why blocking was going on for so long.

Might be a very inefficient modification query. Worth looking at. You might also be able to figure out if someone was running some sort of modification query when they shouldn’t have been.

Maybe they did the old forget-aware clause trick. I don’t know. But anyway, this is still kicking excellent. So let’s run this.

And what you’ll see is very useful material. I’m just going to roll this back so we don’t have to, I don’t forget about doing that later. But if we look at spwhoisactive, we’ll see a bunch of queries experiencing lock weightness. And we’ll see over here, we’ll see these blocking session ID columns populated with spids.

They will often correlate with session IDs you see over here, which is a wonderful thing, because then you know who’s doing what. And the real magical part of the way that I ran spwhoisactive was that the results, instead of getting ordered by the duration column, the ddmmhs whatever column all the way to the left, we ordered by blocked session count so we knew who was at the very top of the blocking chain and who was suffering underneath them.

But it is a little bit misleading to see this select query right here as a blocker for the other select queries. That’s just sort of the way it looks in the dmbs. And, you know, that’s not really anything that’s, you know, going to change your life.

You just need to make sure you understand it. The reason this query has nine blocked sessions behind it, because this query was blocking this query, right?

So this select query isn’t really at fault. It’s this update query. And it just kind of looks like this one was doing something that the rest of them needed to do. So blocking, very, very common reason why you might see a server having problems. Depending on what the lead blocker is, you know, you might be able to just kill the lead blocker and, you know, let everything else move on.

There are a lot of, you know, you do have to be careful about rollbacks, because rollbacks can, you know, and if you don’t have accelerated database recovery enabled, rollbacks are awful long, can be awful long running single threaded log reading tasks that you don’t want to deal with, because they can just make your blocking problems go on longer.

So just be very careful if you decide to start killing lead blockers, what ends up happening to them, right? If the lead blocker is, you know, not a query, if it’s some other weird thing, again, just be careful with what you’re doing there.

Another thing that can happen quite a bit, and that I see quite a lot in my consulting, is of course the old parameter sensitivity. And I’m going to create a couple indexes here.

And once these finish, I’m going to run this store procedure a whole bunch of times in here. And for this one, I’m just going to do 100 threads. And even for 100 threads, this finish is incredibly fast, right?

Let’s, even if I do 1,000 threads, this will finish very fast, right? This store procedure runs very quickly most of the time. Now, if I dial this back to, let’s just say 10 threads so that we don’t, we don’t overwhelm ourselves, and I run this store procedure with a different parameter, all of a sudden things will look slow.

And we’re going to run spwhoisactive with a different set of parameters than before. We’re going to use the getAverageTime equals 1. And what this will do is it’ll look for prior executions of this store procedure where, in the plan cache, and say, well, how long do you usually run for?

Oh, that long. Well, that’s abnormal, isn’t it? And so what you’ll see is a couple columns in here. This one is how long things are actually running for.

And this one is how long things are usually run for. And that’s where you need to be careful. So, anyway, let’s wrap this up. These are common reasons why SQL Server might be slow.

Thank you for watching. Hope you learned something. Hope you enjoyed yourselves. If you like this video, give it a thumbs up or a comment. If you like this video a lot, subscribe to my channel.

I love you. Thank you. Also, hire me to figure this stuff out for you because I’m pretty good at it. Bye.

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.