Outline

In SQL Server 2019, a few cool performance features under the intelligent query processing umbrella depend on cardinality estimation.

- Batch Mode For Row Store (which triggers the next two things)

- Adaptive Joins

- Memory Grant Feedback

If SQL Server doesn’t estimate > 130k(ish) rows are gonna hop on through your query, you don’t get the Batch Mode processing that allows for Adaptive Joins and Memory Grant feedback. If you were planning on those things helping with parameter sniffing, you now have something else to contend with.

Heft

Sometimes you might get a plan with all that stuff in it. Sometimes you might not.

The difference between a big plan and little plan just got even more confusing.

Let’s say you have a stored procedure that looks like this:

CREATE OR ALTER PROCEDURE dbo.lemons(@PostTypeId INT)

AS

BEGIN

SELECT OwnerUserId,

PostTypeId,

SUM(Score * 1.0) AS TotalScore,

COUNT_BIG(*) AS TotalPosts

FROM dbo.Posts AS p

JOIN dbo.Users AS u

ON p.OwnerUserId = u.Id

WHERE PostTypeId = @PostTypeId

AND u.Reputation > 1

GROUP BY OwnerUserId,

PostTypeId

HAVING COUNT_BIG(*) > 100;

END

GO

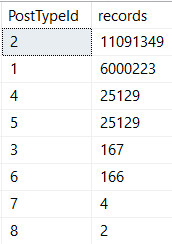

There’s quite a bit of skew between post types!

Which means different parameters will get different plans, depending on which one comes first.

EXEC dbo.lemons @PostTypeId = 4; EXEC dbo.lemons @PostTypeId = 1;

Fourry Friends

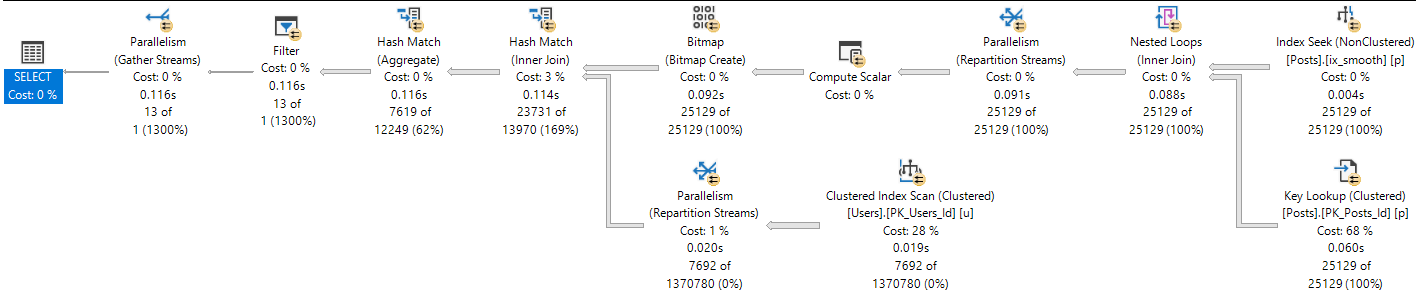

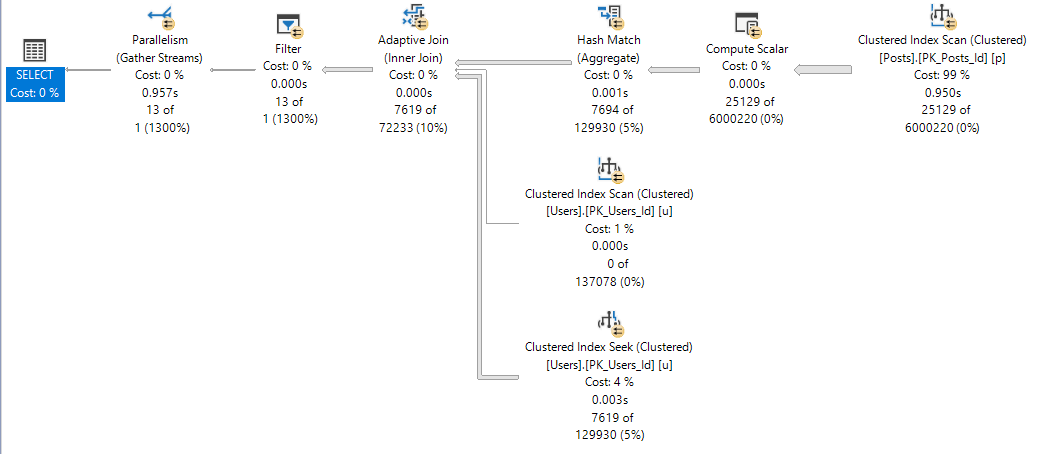

When we run four first, this is our plan:

It’s not “bad”. It finishes in 116 ms.

But when we run 1 next, it’s fewer well.

Less gooder?

You decide.

At 12 seconds, one might accuse our query of sub-par performance.

One and Lonely

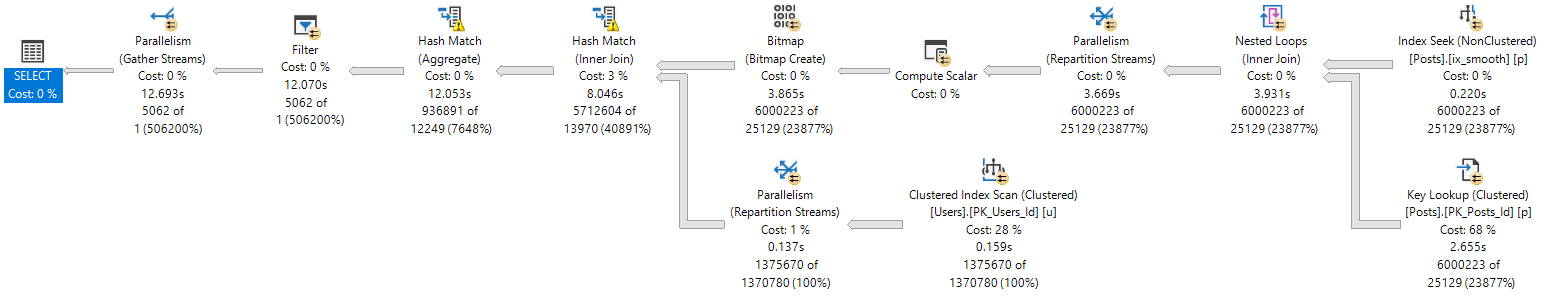

When one runs first, the plan is insanely different.

It’s about 10 seconds faster. And the four plan?

Not too shabby.

We notice the difference between 116ms and 957ms in SSMS.

Are application end users aware of ~800ms? Sometimes I wonder.

Alma Matters

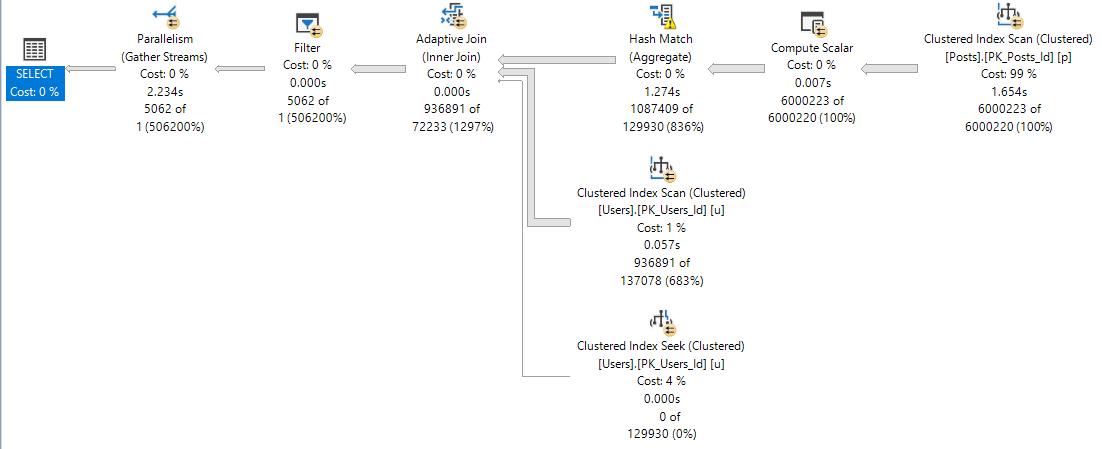

The adaptive join plan with batch mode operators is likely a better plan for a wider range of values than the small plan.

Batch mode is generally more efficient with larger row counts. The adaptive join means no one who doesn’t belong in nested loops hell will get stuck there (probably), and SQL Server will take a look at the query in between runs to try to find a happy memory grant medium (this doesn’t always work splendidly, but I like the effort).

Getting to the point, if you’re going to SQL Server 2019, and you want to get all these new goodies to help you avoid parameter sniffing, you’re gonna have to start getting used to those OPTIMIZE FOR hints, and using a value that results in getting the adaptive plan.

This has all the same pitfalls of shooting for the big plan in older versions, but with way more potential payoff.

I wish there was a query hint that pushed the optimizer towards picking this sort of plan, so we don’t have to rely on potentially changing values to optimize for.

Thanks for reading!

Going Further

If this is the kind of SQL Server stuff you love learning about, you’ll love my training. Blog readers get 25% off the Everything Bundle — over 100 hours of performance tuning content. Need hands-on help? I offer consulting engagements from targeted investigations to ongoing retainers. Want a quick sanity check before committing to a full engagement? Schedule a call — no commitment required.

Related Posts

- Join Me At Data Platform Summit 2020!

- SQL Server 2022 CTP 2.1 Improvements To Parameter Sensitive Plan Optimization

- SQL Server 2022 Parameter Sensitive Plan Optimization: Does Not Care To Fix Your Local Variable Problems

- SQL Server 2022 Parameter Sensitive Plan Optimization: Sometimes There’s Nothing To Fix